
RGB-D Encoder SLAM for a Differential-Drive Robot in Dynamic Environments

We're hiring! [Open long-term, feel free to get in touch] [Updated 2023-12]

Hi everyone!

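I am a member of the autonomous driving team at NIO, where I work on multi-sensor fusion localization, SLAM, and related areas. We are currently looking for new teammates.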

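Technology developed by our team has reached mass production in several feature scenarios. For example, the highway and urban-expressway navigation-assisted driving feature [NOP+] was released in 2022, and by October 2023 it had accumulated more than 100 million kilometers of service mileage. This year we also launched the technically more complex urban navigation feature and are steadily expanding its coverage through collective intelligence. In November we released the unique highway service-area navigation experience [PSP], which automates the full highway-to-service-area battery-swap scenario with navigation along the whole route. Our team also contributes to the development behind basic features such as AEB and LCC. More exciting features are on the way, so stay tuned.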

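If you have a solid background in computer vision, deep learning, SLAM, multi-sensor fusion, integrated inertial navigation, or related technologies, you are welcome to join our team, whether full-time or as an intern. If you are interested, contact us on WeChat: YDSF16.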

NIO experienced-hire referral code: B89PQMZ Application link: https://nio.jobs.feishu.cn/referral/m/position/detail/?token=MTsxNzAzMjY0NzE2NTYyOzY5ODI0NTE1OTI5OTgxOTI2NDg7NzI2MDc4NjA0ODI2Mjk2NTU0MQ

DRE-SLAM

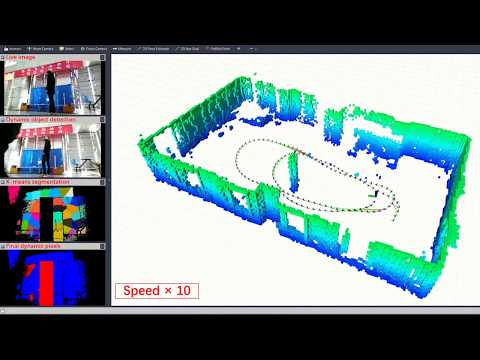

Dynamic RGB-D Encoder SLAM for a Differential-Drive Robot

Authors: Dongsheng Yang, Shusheng Bi, Wei Wang, Chang Yuan, Wei Wang, Xianyu Qi, and Yueri Cai

DRE-SLAM is developed for a differential-drive robot operating in dynamic indoor scenes. It takes the images of an RGB-D camera and the readings of two wheel encoders as input, and it outputs the 2D pose of the robot and an OctoMap of the static background.
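
To illustrate how two wheel encoders constrain a 2D pose, the sketch below integrates encoder tick increments with the standard differential-drive kinematic model. It is a minimal illustration and not DRE-SLAM's implementation: the function name `integrate_encoders` and the values for tick resolution, wheel radius, and wheel base are hypothetical and would come from your own robot's calibration.

```python
import math

def integrate_encoders(pose, d_ticks_left, d_ticks_right,
                       ticks_per_rev=4096,   # hypothetical encoder resolution
                       wheel_radius=0.05,    # hypothetical wheel radius in meters
                       wheel_base=0.3):      # hypothetical distance between wheels in meters
    """Advance a 2D pose (x, y, theta) by one pair of encoder tick increments."""
    x, y, theta = pose
    meters_per_tick = 2.0 * math.pi * wheel_radius / ticks_per_rev
    d_left = d_ticks_left * meters_per_tick
    d_right = d_ticks_right * meters_per_tick

    # Distance travelled by the midpoint of the axle and change in heading.
    d_center = 0.5 * (d_left + d_right)
    d_theta = (d_right - d_left) / wheel_base

    # Midpoint approximation: move along the average heading of the step.
    x += d_center * math.cos(theta + 0.5 * d_theta)
    y += d_center * math.sin(theta + 0.5 * d_theta)

    # Wrap the heading to (-pi, pi].
    new_theta = theta + d_theta
    theta = math.atan2(math.sin(new_theta), math.cos(new_theta))
    return (x, y, theta)

# Drive straight for ten steps of 100 ticks on each wheel.
pose = (0.0, 0.0, 0.0)
for _ in range(10):
    pose = integrate_encoders(pose, 100, 100)
print(pose)
```

Pure encoder integration like this drifts over time, which is one reason to fuse it with visual measurements from the RGB-D camera.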

Video: YouTube or Dropbox or Pan.Baidu

Paper: DRE-SLAM: Dynamic RGB-D Encoder SLAM for a Differential-Drive Robot, Dongsheng Yang, Shusheng Bi, Wei Wang, Chang Yuan, Wei Wang, Xianyu Qi, and Yueri Cai. (Remote Sensing, 2019) PDF, WEB

Prerequisites

1. Ubuntu 16.04

2. ROS Kinetic

Follow the instructions in: http://wiki.ros.org/kinetic/Installation/Ubuntu

3. ROS packages

sudo apt-get install ros-kinetic-cv-bridge ros-kinetic-tf ros-kinetic-message-filters ros-kinetic-image-transport ros-kinetic-octomap ros-kinetic-octomap-msgs ros-kinetic-octomap-ros ros-kinetic-octomap-rviz-plugins ros-kinetic-octomap-server ros-kinetic-pcl-ros ros-kinetic-pcl-msgs ros-kinetic-pcl-conversions ros-kinetic-geometry-msgs

4. OpenCV 4.0

DRE-SLAM runs the YOLOv3 object detector through the DNN module of OpenCV 4.0.

Follow the instructions in: https://opencv.org/opencv-4-0-0.html

5. Ceres

Follow the instructions in: http://www.ceres-solver.org/installation.html

Build DRE-SLAM

1. Clone the repository

cd ~/catkin_ws/src

git clone https://github.com/ydsf16/dre_slam.git

2. Build DBoW2

cd dre_slam/third_party/DBoW2

mkdir build

cd build

cmake ..

make -j4

3. Build Sophus

cd ../../Sophus

mkdir build

cd build

cmake ..

make -j4

4. Build the object detector

cd ../../../object_detector

mkdir build

cd build

cmake ..

make -j4

5. Download the YOLOv3 model

cd ../../config

mkdir yolov3

cd yolov3

wget https://pjreddie.com/media/files/yolov3.weights

wget "https://github.com/pjreddie/darknet/blob/master/cfg/yolov3.cfg?raw=true" -O ./yolov3.cfg

wget "https://github.com/pjreddie/darknet/blob/master/data/coco.names?raw=true" -O ./coco.names
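
A failed or redirected download can leave an empty file or an HTML page in place of the raw model file. The sketch below is an optional sanity check and not part of DRE-SLAM: the helper name `check_yolo_files` is hypothetical, and it only assumes the three file names used in the commands above.

```python
from pathlib import Path

# File names as written by the wget commands above.
EXPECTED_FILES = ("yolov3.weights", "yolov3.cfg", "coco.names")

def check_yolo_files(model_dir):
    """Return a list of problems found; an empty list means nothing looked wrong."""
    problems = []
    for name in EXPECTED_FILES:
        path = Path(model_dir) / name
        if not path.is_file():
            problems.append(f"{name}: missing")
            continue
        if path.stat().st_size == 0:
            problems.append(f"{name}: empty")
            continue
        # A GitHub "blob" URL fetched without ?raw=true returns a web page.
        with path.open("rb") as f:
            head = f.read(256).lstrip().lower()
        if head.startswith(b"<!doctype html") or head.startswith(b"<html"):
            problems.append(f"{name}: looks like an HTML page, not the raw file")
    return problems

# Run from inside the yolov3 directory created above.
print(check_yolo_files("."))
```

The check only looks at existence, size, and the first bytes of each file; it does not validate the network weights themselves.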

6. Run catkin_make

cd ~/catkin_ws

catkin_make

source ~/catkin_ws/devel/setup.bash

Example

Dataset

We collected several data sequences in our lab using our Redbot robot. The dataset is available at Pan.Baidu or Dropbox.

Run

1. Open a terminal and launch dre_slam

roslaunch dre_slam comparative_test.launch

2. Open another terminal and play a rosbag

rosbag play <bag_name>.bag

Run on your own robot

You need to do three things:

- Calibrate the camera intrinsics, the robot odometry parameters, and the rigid transformation from the camera to the robot.

- Prepare a parameter configuration file; see the config folder for reference.

- Prepare a launch file; see the launch folder for reference.
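
To show why the camera-to-robot transformation matters, the sketch below chains a 2D robot pose with a camera extrinsic to express a point seen by the camera in the world frame. It is an illustration under stated assumptions, not DRE-SLAM code: the helper names, the frame conventions (robot x forward, y left, z up; camera x right, y down, z forward), and the numbers in `T_ROBOT_CAMERA` are hypothetical, and the real values come from your own calibration.

```python
import math

def se2_to_matrix(x, y, theta):
    """Lift a 2D robot pose to a 4x4 homogeneous transform (world <- robot)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0, x],
            [s, c, 0.0, y],
            [0.0, 0.0, 1.0, 0.0],
            [0.0, 0.0, 0.0, 1.0]]

def matmul(a, b):
    """Multiply two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def transform_point(t, p):
    """Apply a 4x4 homogeneous transform to a 3D point."""
    ph = [p[0], p[1], p[2], 1.0]
    return [sum(t[i][k] * ph[k] for k in range(4)) for i in range(3)]

# Hypothetical extrinsic (robot <- camera): camera 0.1 m ahead of and 0.3 m
# above the robot origin, with its optical axis along the robot's x axis.
T_ROBOT_CAMERA = [[0.0, 0.0, 1.0, 0.1],
                  [-1.0, 0.0, 0.0, 0.0],
                  [0.0, -1.0, 0.0, 0.3],
                  [0.0, 0.0, 0.0, 1.0]]

# Robot at (1, 2) facing along the world y axis; point 2 m in front of the camera.
T_world_robot = se2_to_matrix(1.0, 2.0, math.pi / 2)
T_world_camera = matmul(T_world_robot, T_ROBOT_CAMERA)
print(transform_point(T_world_camera, (0.0, 0.0, 2.0)))
```

An error in the extrinsic shifts every depth point placed in the map, so it is worth verifying the calibration on a known scene before mapping.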

Contact us

For any issues, please feel free to contact Dongsheng Yang: [email protected]