Yolov5 Versions Save

YOLOv5 🚀 in PyTorch > ONNX > CoreML > TFLite

v7.0

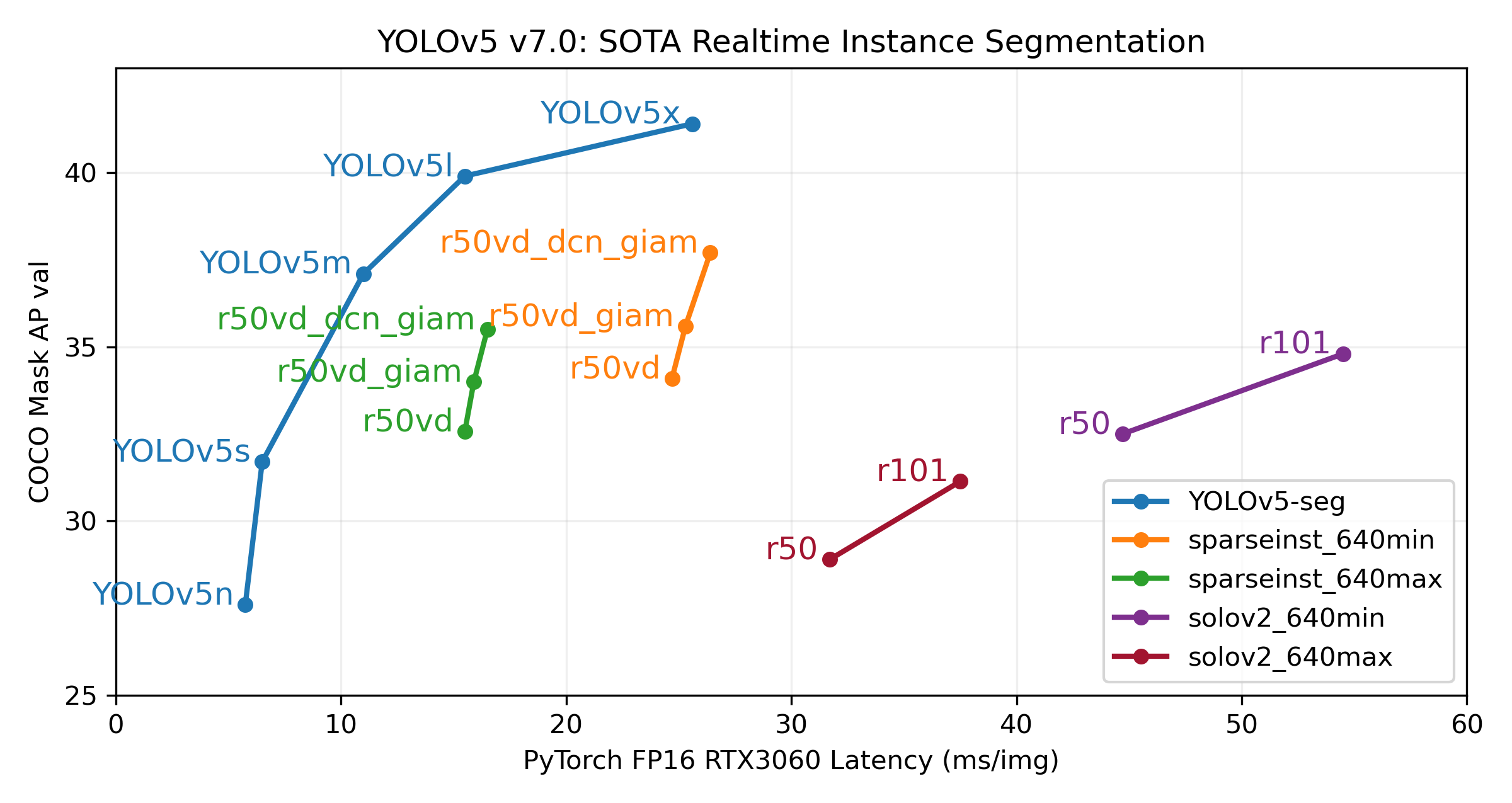

1 year agoOur new YOLOv5 v7.0 instance segmentation models are the fastest and most accurate in the world, beating all current SOTA benchmarks. We've made them super simple to train, validate and deploy. See full details in our Release Notes and visit our YOLOv5 Segmentation Colab Notebook for quickstart tutorials.

Our primary goal with this release is to introduce super simple YOLOv5 segmentation workflows just like our existing object detection models. The new v7.0 YOLOv5-seg models below are just a start, we will continue to improve these going forward together with our existing detection and classification models. We'd love your feedback and contributions on this effort!

This release incorporates 280 PRs from 41 contributors since our last release in August 2022.

Important Updates

- Segmentation Models ⭐ NEW: SOTA YOLOv5-seg COCO-pretrained segmentation models are now available for the first time (https://github.com/ultralytics/yolov5/pull/9052 by @glenn-jocher, @AyushExel and @Laughing-q)

- Paddle Paddle Export: Export any YOLOv5 model (cls, seg, det) to Paddle format with python export.py --include paddle (https://github.com/ultralytics/yolov5/pull/9459 by @glenn-jocher)

-

YOLOv5 AutoCache: Use

python train.py --cache ramwill now scan available memory and compare against predicted dataset RAM usage. This reduces risk in caching and should help improve adoption of the dataset caching feature, which can significantly speed up training. (https://github.com/ultralytics/yolov5/pull/10027 by @glenn-jocher) - Comet Logging and Visualization Integration: Free forever, Comet lets you save YOLOv5 models, resume training, and interactively visualise and debug predictions. (https://github.com/ultralytics/yolov5/pull/9232 by @DN6)

New Segmentation Checkpoints

We trained YOLOv5 segmentations models on COCO for 300 epochs at image size 640 using A100 GPUs. We exported all models to ONNX FP32 for CPU speed tests and to TensorRT FP16 for GPU speed tests. We ran all speed tests on Google Colab Pro notebooks for easy reproducibility.

| Model | size (pixels) |

mAPbox 50-95 |

mAPmask 50-95 |

Train time 300 epochs A100 (hours) |

Speed ONNX CPU (ms) |

Speed TRT A100 (ms) |

params (M) |

FLOPs @640 (B) |

|---|---|---|---|---|---|---|---|---|

| YOLOv5n-seg | 640 | 27.6 | 23.4 | 80:17 | 62.7 | 1.2 | 2.0 | 7.1 |

| YOLOv5s-seg | 640 | 37.6 | 31.7 | 88:16 | 173.3 | 1.4 | 7.6 | 26.4 |

| YOLOv5m-seg | 640 | 45.0 | 37.1 | 108:36 | 427.0 | 2.2 | 22.0 | 70.8 |

| YOLOv5l-seg | 640 | 49.0 | 39.9 | 66:43 (2x) | 857.4 | 2.9 | 47.9 | 147.7 |

| YOLOv5x-seg | 640 | 50.7 | 41.4 | 62:56 (3x) | 1579.2 | 4.5 | 88.8 | 265.7 |

- All checkpoints are trained to 300 epochs with SGD optimizer with

lr0=0.01andweight_decay=5e-5at image size 640 and all default settings.

Runs logged to https://wandb.ai/glenn-jocher/YOLOv5_v70_official -

Accuracy values are for single-model single-scale on COCO dataset.

Reproduce bypython segment/val.py --data coco.yaml --weights yolov5s-seg.pt -

Speed averaged over 100 inference images using a Colab Pro A100 High-RAM instance. Values indicate inference speed only (NMS adds about 1ms per image).

Reproduce bypython segment/val.py --data coco.yaml --weights yolov5s-seg.pt --batch 1 -

Export to ONNX at FP32 and TensorRT at FP16 done with

export.py.

Reproduce bypython export.py --weights yolov5s-seg.pt --include engine --device 0 --half

New Segmentation Usage Examples

Train

YOLOv5 segmentation training supports auto-download COCO128-seg segmentation dataset with --data coco128-seg.yaml argument and manual download of COCO-segments dataset with bash data/scripts/get_coco.sh --train --val --segments and then python train.py --data coco.yaml.

# Single-GPU

python segment/train.py --model yolov5s-seg.pt --data coco128-seg.yaml --epochs 5 --img 640

# Multi-GPU DDP

python -m torch.distributed.run --nproc_per_node 4 --master_port 1 segment/train.py --model yolov5s-seg.pt --data coco128-seg.yaml --epochs 5 --img 640 --device 0,1,2,3

Val

Validate YOLOv5m-seg accuracy on ImageNet-1k dataset:

bash data/scripts/get_coco.sh --val --segments # download COCO val segments split (780MB, 5000 images)

python segment/val.py --weights yolov5s-seg.pt --data coco.yaml --img 640 # validate

Predict

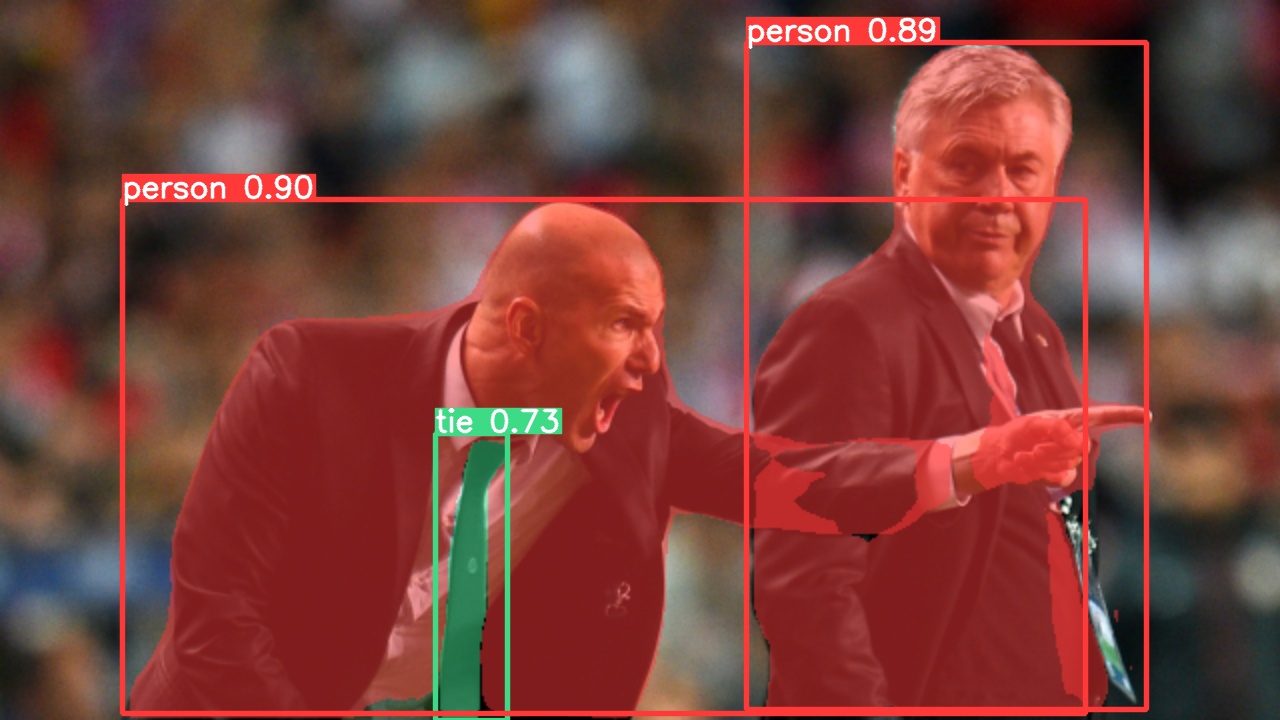

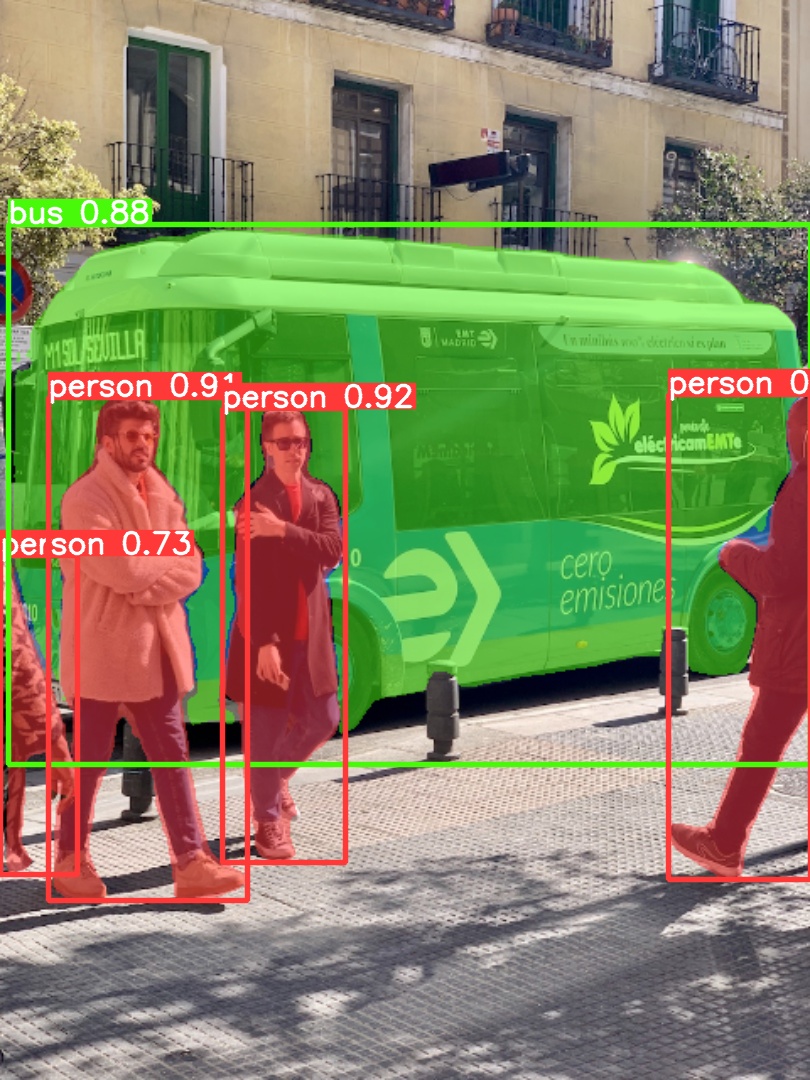

Use pretrained YOLOv5m-seg to predict bus.jpg:

python segment/predict.py --weights yolov5m-seg.pt --data data/images/bus.jpg

model = torch.hub.load('ultralytics/yolov5', 'custom', 'yolov5m-seg.pt') # load from PyTorch Hub (WARNING: inference not yet supported)

|

|

|---|

Export

Export YOLOv5s-seg model to ONNX and TensorRT:

python export.py --weights yolov5s-seg.pt --include onnx engine --img 640 --device 0

Changelog

- Changes between previous release and this release: https://github.com/ultralytics/yolov5/compare/v6.2...v7.0

- Changes since this release: https://github.com/ultralytics/yolov5/compare/v7.0...HEAD

🛠️ New Features and Bug Fixes (280)

* Improve classification comments by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/8997 * Update `attempt_download(release='v6.2')` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/8998 * Update README_cn.md by @KieraMengru0907 in https://github.com/ultralytics/yolov5/pull/9001 * Update dataset `names` from array to dictionary by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9000 * [segment]: Allow inference on dirs and videos by @AyushExel in https://github.com/ultralytics/yolov5/pull/9003 * DockerHub tag update Usage example by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9005 * Add weight `decay` to argparser by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9006 * Add glob quotes to detect.py usage example by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9007 * Fix TorchScript JSON string key bug by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9015 * EMA FP32 assert classification bug fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9016 * Faster pre-processing for gray image input by @cher-liang in https://github.com/ultralytics/yolov5/pull/9009 * Improved `Profile()` inference timing by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9024 * `torch.empty()` for speed improvements by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9025 * Remove unused `time_sync` import by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9026 * Add PyTorch Hub classification CI checks by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9027 * Attach transforms to model by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9028 * Default --data `imagenette160` training (fastest) by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9033 * VOC `names` dictionary fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9034 * Update train.py `import val as validate` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9037 * AutoBatch protect from negative batch sizes by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9048 * Temporarily remove `macos-latest` from CI by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9049 * Add `--save-hybrid` mAP warning by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9050 * Refactor for simplification by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9054 * Refactor for simplification 2 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9055 * zero-mAP fix return `.detach()` to EMA by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9056 * zero-mAP fix 3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9058 * Daemon `plot_labels()` for faster start by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9057 * TensorBoard fix in tutorial.ipynb by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9064 * zero-mAP fix remove `torch.empty()` forward pass in `.train()` mode by @0zppd in https://github.com/ultralytics/yolov5/pull/9068 * Rename 'labels' to 'instances' by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9066 * Threaded TensorBoard graph logging by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9070 * De-thread TensorBoard graph logging by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9071 * Two dimensional `size=(h,w)` AutoShape support by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9072 * Remove unused Timeout import by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9073 * Improved Usage example docstrings by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9075 * Install `torch` latest stable by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9092 * New `@try_export` decorator by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9096 * Add optional `transforms` argument to LoadStreams() by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9105 * Streaming Classification support by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9106 * Fix numpy to torch cls streaming bug by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9112 * Infer Loggers project name by @AyushExel in https://github.com/ultralytics/yolov5/pull/9117 * Add CSV logging to GenericLogger by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9128 * New TryExcept decorator by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9154 * Fixed segment offsets by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9155 * New YOLOv5 v6.2 splash images by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9142 * Rename onnx_dynamic -> dynamic by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9168 * Inline `_make_grid()` meshgrid by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9170 * Comment EMA assert by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9173 * Fix confidence threshold for ClearML debug images by @HighMans in https://github.com/ultralytics/yolov5/pull/9174 * Update Dockerfile-cpu by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9184 * Update Dockerfile-cpu to libpython3-dev by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9185 * Update Dockerfile-arm64 to libpython3-dev by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9187 * Fix AutoAnchor MPS bug by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9188 * Skip AMP check on MPS by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9189 * ClearML's set_report_period's time is defined in minutes not seconds. by @HighMans in https://github.com/ultralytics/yolov5/pull/9186 * Add `check_git_status(..., branch='master')` argument by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9199 * `check_font()` on notebook init by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9200 * Comment `protobuf` in requirements.txt by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9207 * `check_font()` fstring update by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9208 * AutoBatch protect from extreme batch sizes by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9209 * Default AutoBatch 0.8 fraction by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9212 * Delete rebase.yml by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9202 * Duplicate segment verification fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9225 * New `LetterBox(size)` `CenterCrop(size)`, `ToTensor()` transforms (#9213) by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9213 * Add ClassificationModel TF export assert by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9226 * Remove usage of `pathlib.Path.unlink(missing_ok=...)` by @ymerkli in https://github.com/ultralytics/yolov5/pull/9227 * Add support for `*.pfm` images by @spacewalk01 in https://github.com/ultralytics/yolov5/pull/9230 * Python check warning emoji by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9238 * Add `url_getsize()` function by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9247 * Update dataloaders.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9250 * Refactor Loggers : Move code outside train.py by @AyushExel in https://github.com/ultralytics/yolov5/pull/9241 * Update general.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9252 * Add LoadImages._cv2_rotate() by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9249 * Move `cudnn.benchmarks(True)` to LoadStreams by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9258 * `cudnn.benchmark = True` on Seed 0 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9259 * Update `TryExcept(msg='...')`` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9261 * Make sure best.pt model file is preserved ClearML by @thepycoder in https://github.com/ultralytics/yolov5/pull/9265 * DetectMultiBackend improvements by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9269 * Update DetectMultiBackend for tuple outputs by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9274 * Update DetectMultiBackend for tuple outputs 2 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9275 * Update benchmarks CI with `--hard-fail` min metric floor by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9276 * Add new `--vid-stride` inference parameter for videos by @VELCpro in https://github.com/ultralytics/yolov5/pull/9256 * [pre-commit.ci] pre-commit suggestions by @pre-commit-ci in https://github.com/ultralytics/yolov5/pull/9295 * Replace deprecated `np.int` with `int` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9307 * Comet Logging and Visualization Integration by @DN6 in https://github.com/ultralytics/yolov5/pull/9232 * Comet changes by @DN6 in https://github.com/ultralytics/yolov5/pull/9328 * Train.py line 486 typo fix by @robinned in https://github.com/ultralytics/yolov5/pull/9330 * Add dilated conv support by @YellowAndGreen in https://github.com/ultralytics/yolov5/pull/9347 * Update `check_requirements()` single install by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9353 * Update `check_requirements(args, cmds='')` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9355 * Update `check_requirements()` multiple string by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9356 * Add PaddlePaddle export and inference by @kisaragychihaya in https://github.com/ultralytics/yolov5/pull/9240 * PaddlePaddle Usage examples by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9358 * labels.jpg names fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9361 * Exclude `ipython` from hubconf.py `check_requirements()` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9362 * `torch.jit.trace()` fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9363 * AMP Check fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9367 * Remove duplicate line in setup.cfg by @zldrobit in https://github.com/ultralytics/yolov5/pull/9380 * Remove `.train()` mode exports by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9429 * Continue on Docker arm64 failure by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9430 * Continue on Docker failure (all backends) by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9432 * Continue on Docker fail (all backends) fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9433 * YOLOv5 segmentation model support by @AyushExel in https://github.com/ultralytics/yolov5/pull/9052 * Fix val.py zero-TP bug by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9431 * New model.yaml `activation:` field by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9371 * Fix tick labels for background FN/FP by @hotohoto in https://github.com/ultralytics/yolov5/pull/9414 * Fix TensorRT exports to ONNX opset 12 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9441 * AutoShape explicit arguments fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9443 * Update Detections() instance printing by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9445 * AutoUpdate TensorFlow in export.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9447 * AutoBatch `cudnn.benchmark=True` fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9448 * Do not move downloaded zips by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9455 * Update general.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9454 * `Detect()` and `Segment()` fixes for CoreML and Paddle by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9458 * Add Paddle exports to benchmarks by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9459 * Add `macos-latest` runner for CoreML benchmarks by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9453 * Fix cutout bug by @Oswells in https://github.com/ultralytics/yolov5/pull/9452 * Optimize imports by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9464 * TensorRT SegmentationModel fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9465 * `Conv()` dilation argument fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9466 * Update ClassificationModel default training `imgsz=224` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9469 * Standardize warnings with `WARNING ⚠️ ...` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9467 * TensorFlow macOS AutoUpdate by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9471 * `segment/predict --save-txt` fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9478 * TensorFlow SegmentationModel support by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9472 * AutoBatch report include reserved+allocated by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9491 * Update Detect() grid init `for` loop by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9494 * Accelerate video inference by @mucunwuxian in https://github.com/ultralytics/yolov5/pull/9487 * Comet Image Logging Fix by @DN6 in https://github.com/ultralytics/yolov5/pull/9498 * Fix visualization title bug by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9500 * Add paddle tips by @Zengyf-CVer in https://github.com/ultralytics/yolov5/pull/9502 * Segmentation `polygons2masks_overlap()` in `np.int32` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9493 * Fix `random_perspective` param bug in segment by @FeiGeChuanShu in https://github.com/ultralytics/yolov5/pull/9512 * Remove `check_requirements('flatbuffers==1.12')` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9514 * Fix TF Lite exports by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9517 * TFLite fix 2 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9518 * FROM nvcr.io/nvidia/pytorch:22.08-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9520 * Remove scikit-learn constraint on coremltools 6.0 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9530 * Update scikit-learn constraint per coremltools 6.0 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9531 * Update `coremltools>=6.0` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9532 * Update albumentations by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9503 * import re by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9535 * TF.js fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9536 * Refactor dataset batch-size by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9551 * Add `--source screen` for screenshot inference by @zombob in https://github.com/ultralytics/yolov5/pull/9542 * Update `is_url()` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9566 * Detect.py supports running against a Triton container by @gaziqbal in https://github.com/ultralytics/yolov5/pull/9228 * New `scale_segments()` function by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9570 * generator seed fix for DDP mAP drop by @Forever518 in https://github.com/ultralytics/yolov5/pull/9545 * Update default GitHub assets by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9573 * Update requirements.txt comment https://pytorch.org/get-started/locally/ by @davidamacey in https://github.com/ultralytics/yolov5/pull/9576 * Add segment line predictions by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9571 * TensorRT detect.py inference fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9581 * Update Comet links by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9587 * Add global YOLOv5_DATASETS_DIR by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9586 * Add Paperspace Gradient badges by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9588 * #YOLOVISION22 announcement by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9590 * Bump actions/stale from 5 to 6 by @dependabot in https://github.com/ultralytics/yolov5/pull/9595 * #YOLOVISION22 update by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9598 * Apple MPS -> CPU NMS fallback strategy by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9600 * Updated Segmentation and Classification usage by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9607 * Update export.py Usage examples by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9609 * Fix `is_url('https://ultralytics.com')` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9610 * Add `results.save(save_dir='path', exist_ok=False)` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9617 * NMS MPS device wrapper by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9620 * Add SegmentationModel unsupported warning by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9632 * Disabled upload_dataset flag temporarily due to an artifact related bug by @soumik12345 in https://github.com/ultralytics/yolov5/pull/9652 * Add NVIDIA Jetson Nano Deployment tutorial by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9656 * Added cutout import from utils/augmentations.py to use Cutout Aug in … by @senhorinfinito in https://github.com/ultralytics/yolov5/pull/9668 * Simplify val.py benchmark mode with speed mode by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9674 * Allow list for Comet artifact class 'names' field by @KristenKehrer in https://github.com/ultralytics/yolov5/pull/9654 * [pre-commit.ci] pre-commit suggestions by @pre-commit-ci in https://github.com/ultralytics/yolov5/pull/9685 * TensorRT `--dynamic` fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9691 * FROM nvcr.io/nvidia/pytorch:22.09-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9711 * Error in utils/segment/general `masks2segments()` by @paulguerrie in https://github.com/ultralytics/yolov5/pull/9724 * Fix segment evolution keys by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9742 * Remove #YOLOVISION22 notice by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9751 * Update Loggers by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9760 * update mask2segments and saving results by @vladoossss in https://github.com/ultralytics/yolov5/pull/9785 * HUB VOC fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9792 * Update hubconf.py local repo Usage example by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9803 * Fix xView dataloaders import by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9807 * Argoverse HUB fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9809 * `smart_optimizer()` revert to weight with decay by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9817 * Allow PyTorch Hub results to display in notebooks by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9825 * Logger Cleanup by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9828 * Remove ipython from `check_requirements` exclude list by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9841 * Update HUBDatasetStats() usage examples by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9842 * Update ZipFile to context manager by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9843 * Update README.md by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9846 * Webcam show fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9847 * Fix OpenVINO Usage example by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9874 * ClearML Dockerfile fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9876 * Windows Python 3.7 .isfile() fix by @SSTato in https://github.com/ultralytics/yolov5/pull/9879 * Add TFLite Metadata to TFLite and Edge TPU models by @paradigmn in https://github.com/ultralytics/yolov5/pull/9903 * Add `gnupg` to Dockerfile-cpu by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9932 * Add ClearML minimum version requirement by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9933 * Update Comet Integrations table text by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9937 * Update README.md by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9957 * Update README.md by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9958 * Update README.md by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9961 * Switch from suffix checks to archive checks by @kalenmike in https://github.com/ultralytics/yolov5/pull/9963 * FROM nvcr.io/nvidia/pytorch:22.10-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9966 * Full-size proto code (optional) by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9980 * Update README.md by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9970 * Segmentation Tutorial by @paulguerrie in https://github.com/ultralytics/yolov5/pull/9521 * Fix `is_colab()` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9994 * Check online twice on AutoUpdate by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9999 * Add `min_items` filter option by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9997 * Improved `check_online()` robustness by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10000 * Fix `min_items` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10001 * Update default `--epochs 100` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10024 * YOLOv5 AutoCache Update by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10027 * IoU `eps` adjustment by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10051 * Update get_coco.sh by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10057 * [pre-commit.ci] pre-commit suggestions by @pre-commit-ci in https://github.com/ultralytics/yolov5/pull/10068 * Use MNIST160 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10069 * Update Dockerfile keep default torch installation by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10071 * Add `ultralytics` pip package by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10103 * AutoShape integer image-size fix by @janus-zheng in https://github.com/ultralytics/yolov5/pull/10090 * YouTube Usage example comments by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10106 * Mapped project and name to ClearML by @thepycoder in https://github.com/ultralytics/yolov5/pull/10100 * Update IoU functions by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10123 * Add Ultralytics HUB to README by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10070 * Fix benchmark.py usage comment by @rusamentiaga in https://github.com/ultralytics/yolov5/pull/10131 * Update HUB banner image by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10134 * Copy-Paste zero value fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10152 * Add Copy-Paste to `mosaic9()` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10165 * Add `join_threads()` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10086 * Fix dataloader filepath modification to perform replace only once and not for all occurences of string by @adumrewal in https://github.com/ultralytics/yolov5/pull/10163 * fix: prevent logging config clobbering by @rkechols in https://github.com/ultralytics/yolov5/pull/10133 * Filter PyTorch 1.13 UserWarnings by @triple-Mu in https://github.com/ultralytics/yolov5/pull/10166 * Revert "fix: prevent logging config clobbering" by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10177 * Apply make_divisible for ONNX models in Autoshape by @janus-zheng in https://github.com/ultralytics/yolov5/pull/10172 * data.yaml `names.keys()` integer assert by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10190 * fix: try 2 - prevent logging config clobbering by @rkechols in https://github.com/ultralytics/yolov5/pull/10192 * Segment prediction labels normalization fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10205 * Simplify dataloader tqdm descriptions by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10210 * New global `TQDM_BAR_FORMAT` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10211 * Feature/classification tutorial refactor by @paulguerrie in https://github.com/ultralytics/yolov5/pull/10039 * Remove Colab notebook High-Memory notices by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10212 * Revert `--save-txt` to default False by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10213 * Add `--source screen` Usage example by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10215 * Add `git` info to training checkpoints by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/9655 * Add git info to cls, seg checkpoints by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10217 * Update Comet preview image by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10220 * Scope gitpyhon import in `check_git_info()` by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10221 * Squeezenet reshape outputs fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/10222😃 New Contributors (30)

* @KieraMengru0907 made their first contribution in https://github.com/ultralytics/yolov5/pull/9001 * @cher-liang made their first contribution in https://github.com/ultralytics/yolov5/pull/9009 * @0zppd made their first contribution in https://github.com/ultralytics/yolov5/pull/9068 * @HighMans made their first contribution in https://github.com/ultralytics/yolov5/pull/9174 * @ymerkli made their first contribution in https://github.com/ultralytics/yolov5/pull/9227 * @spacewalk01 made their first contribution in https://github.com/ultralytics/yolov5/pull/9230 * @VELCpro made their first contribution in https://github.com/ultralytics/yolov5/pull/9256 * @DN6 made their first contribution in https://github.com/ultralytics/yolov5/pull/9232 * @robinned made their first contribution in https://github.com/ultralytics/yolov5/pull/9330 * @kisaragychihaya made their first contribution in https://github.com/ultralytics/yolov5/pull/9240 * @hotohoto made their first contribution in https://github.com/ultralytics/yolov5/pull/9414 * @Oswells made their first contribution in https://github.com/ultralytics/yolov5/pull/9452 * @mucunwuxian made their first contribution in https://github.com/ultralytics/yolov5/pull/9487 * @FeiGeChuanShu made their first contribution in https://github.com/ultralytics/yolov5/pull/9512 * @zombob made their first contribution in https://github.com/ultralytics/yolov5/pull/9542 * @gaziqbal made their first contribution in https://github.com/ultralytics/yolov5/pull/9228 * @Forever518 made their first contribution in https://github.com/ultralytics/yolov5/pull/9545 * @davidamacey made their first contribution in https://github.com/ultralytics/yolov5/pull/9576 * @soumik12345 made their first contribution in https://github.com/ultralytics/yolov5/pull/9652 * @senhorinfinito made their first contribution in https://github.com/ultralytics/yolov5/pull/9668 * @KristenKehrer made their first contribution in https://github.com/ultralytics/yolov5/pull/9654 * @paulguerrie made their first contribution in https://github.com/ultralytics/yolov5/pull/9724 * @vladoossss made their first contribution in https://github.com/ultralytics/yolov5/pull/9785 * @SSTato made their first contribution in https://github.com/ultralytics/yolov5/pull/9879 * @janus-zheng made their first contribution in https://github.com/ultralytics/yolov5/pull/10090 * @rusamentiaga made their first contribution in https://github.com/ultralytics/yolov5/pull/10131 * @adumrewal made their first contribution in https://github.com/ultralytics/yolov5/pull/10163 * @rkechols made their first contribution in https://github.com/ultralytics/yolov5/pull/10133 * @triple-Mu made their first contribution in https://github.com/ultralytics/yolov5/pull/10166v6.2

1 year agov6.1

2 years agoThis release incorporates many new features and bug fixes (271 PRs from 48 contributors) since our last release in October 2021. It adds TensorRT, Edge TPU and OpenVINO support, and provides retrained models at --batch-size 128 with new default one-cycle linear LR scheduler. YOLOv5 now officially supports 11 different formats, not just for export but for inference (both detect.py and PyTorch Hub), and validation to profile mAP and speed results after export.

| Format | export.py --include |

Model |

|---|---|---|

| PyTorch | - | yolov5s.pt |

| TorchScript | torchscript |

yolov5s.torchscript |

| ONNX | onnx |

yolov5s.onnx |

| OpenVINO | openvino |

yolov5s_openvino_model/ |

| TensorRT | engine |

yolov5s.engine |

| CoreML | coreml |

yolov5s.mlmodel |

| TensorFlow SavedModel | saved_model |

yolov5s_saved_model/ |

| TensorFlow GraphDef | pb |

yolov5s.pb |

| TensorFlow Lite | tflite |

yolov5s.tflite |

| TensorFlow Edge TPU | edgetpu |

yolov5s_edgetpu.tflite |

| TensorFlow.js | tfjs |

yolov5s_web_model/ |

Usage examples (ONNX shown):

Export: python export.py --weights yolov5s.pt --include onnx

Detect: python detect.py --weights yolov5s.onnx

PyTorch Hub: model = torch.hub.load('ultralytics/yolov5', 'custom', 'yolov5s.onnx')

Validate: python val.py --weights yolov5s.onnx

Visualize: https://netron.app

Important Updates

-

TensorRT support: TensorFlow, Keras, TFLite, TF.js model export now fully integrated using

python export.py --include saved_model pb tflite tfjs(https://github.com/ultralytics/yolov5/pull/5699 by @imyhxy) - Tensorflow Edge TPU support ⭐ NEW: New smaller YOLOv5n (1.9M params) model below YOLOv5s (7.5M params), exports to 2.1 MB INT8 size, ideal for ultralight mobile solutions. (https://github.com/ultralytics/yolov5/pull/3630 by @zldrobit)

- OpenVINO support: YOLOv5 ONNX models are now compatible with both OpenCV DNN and ONNX Runtime (https://github.com/ultralytics/yolov5/pull/6057 by @glenn-jocher).

-

Export Benchmarks: Benchmark (mAP and speed) all YOLOv5 export formats with

python utils/benchmarks.py --weights yolov5s.pt. Currently operates on CPU, future updates will implement GPU support. (https://github.com/ultralytics/yolov5/pull/6613 by @glenn-jocher). - Architecture: no changes

-

Hyperparameters: minor change

- hyp-scratch-large.yaml

lrfreduced from 0.2 to 0.1 (https://github.com/ultralytics/yolov5/pull/6525 by @glenn-jocher).

- hyp-scratch-large.yaml

-

Training: Default Learning Rate (LR) scheduler updated

- One-cycle with cosine replace with one-cycle linear for improved results (https://github.com/ultralytics/yolov5/pull/6729 by @glenn-jocher).

New Results

All model trainings logged to https://wandb.ai/glenn-jocher/YOLOv5_v61_official

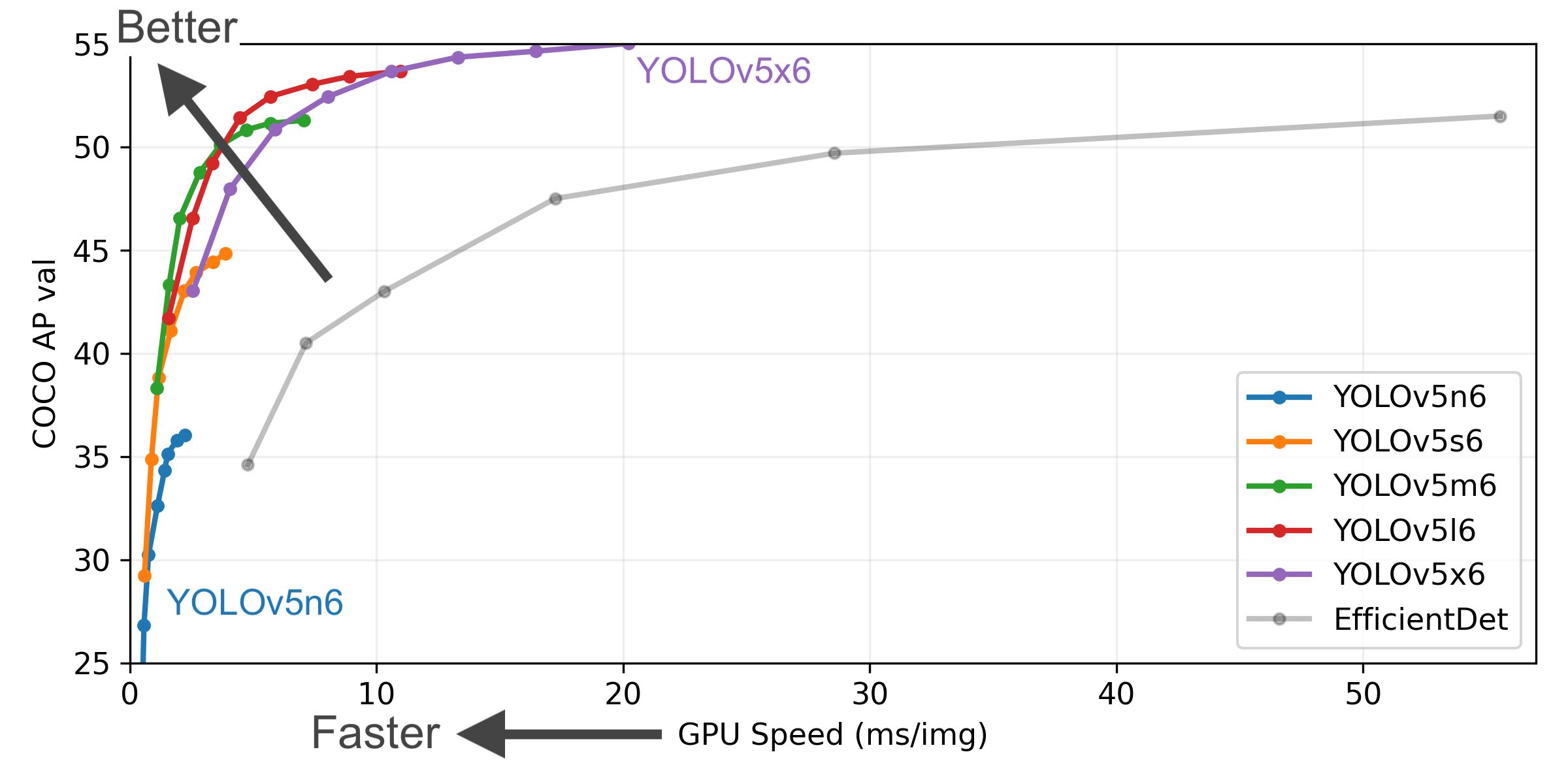

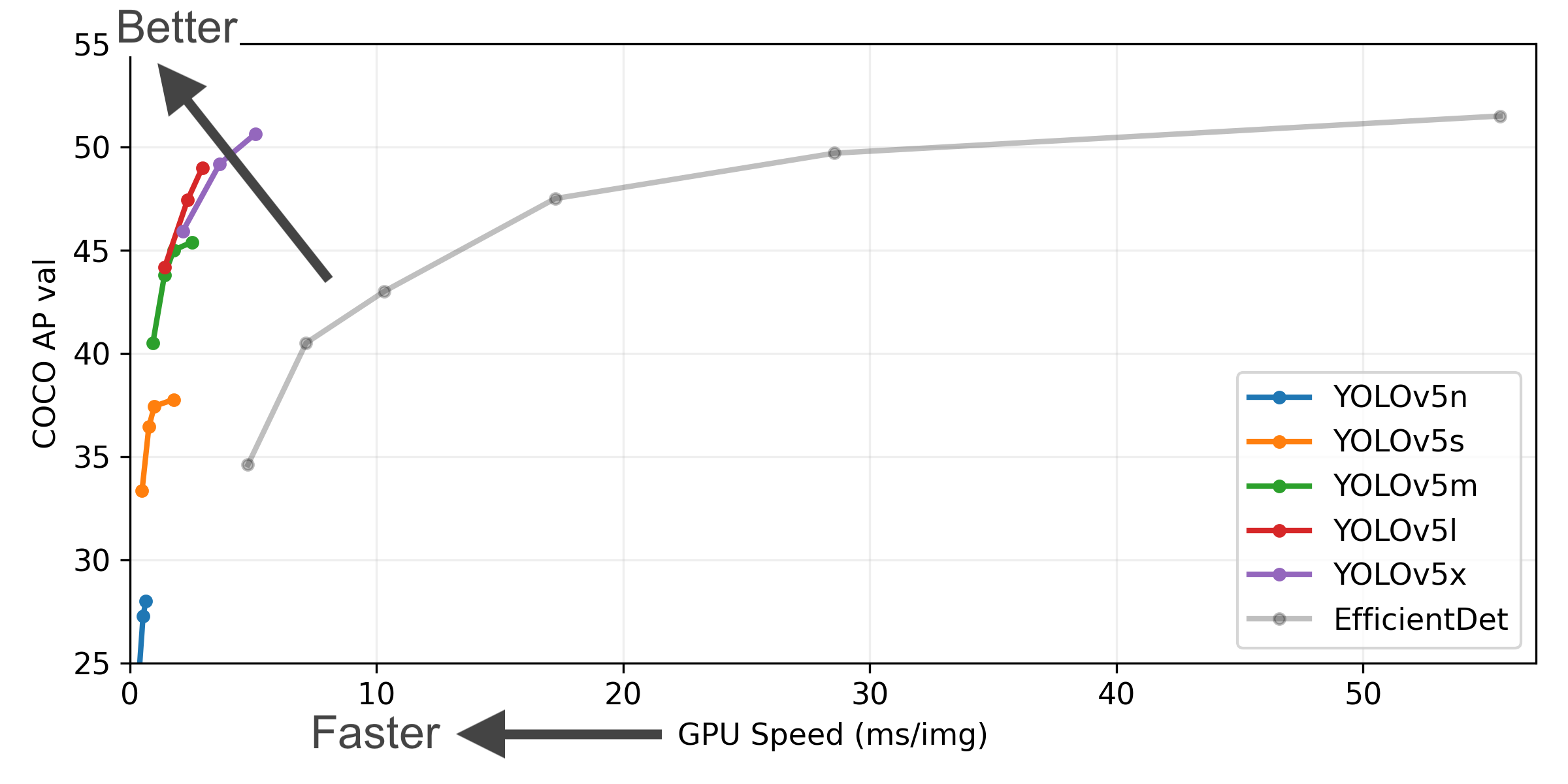

YOLOv5-P5 640 Figure (click to expand)

Figure Notes (click to expand)

- COCO AP val denotes [email protected]:0.95 metric measured on the 5000-image COCO val2017 dataset over various inference sizes from 256 to 1536.

- GPU Speed measures average inference time per image on COCO val2017 dataset using a AWS p3.2xlarge V100 instance at batch-size 32.

- EfficientDet data from google/automl at batch size 8.

-

Reproduce by

python val.py --task study --data coco.yaml --iou 0.7 --weights yolov5n6.pt yolov5s6.pt yolov5m6.pt yolov5l6.pt yolov5x6.pt

Example YOLOv5l before and after metrics:

| YOLOv5l Large |

size (pixels) |

mAPval 0.5:0.95 |

mAPval 0.5 |

Speed CPU b1 (ms) |

Speed V100 b1 (ms) |

Speed V100 b32 (ms) |

params (M) |

FLOPs @640 (B) |

|---|---|---|---|---|---|---|---|---|

| v5.0 | 640 | 48.2 | 66.9 | 457.9 | 11.6 | 2.8 | 47.0 | 115.4 |

| v6.0 (previous) | 640 | 48.8 | 67.2 | 424.5 | 10.9 | 2.7 | 46.5 | 109.1 |

| v6.1 (this release) | 640 | 49.0 | 67.3 | 430.0 | 10.1 | 2.7 | 46.5 | 109.1 |

Pretrained Checkpoints

| Model | size (pixels) |

mAPval 0.5:0.95 |

mAPval 0.5 |

Speed CPU b1 (ms) |

Speed V100 b1 (ms) |

Speed V100 b32 (ms) |

params (M) |

FLOPs @640 (B) |

|---|---|---|---|---|---|---|---|---|

| YOLOv5n | 640 | 28.0 | 45.7 | 45 | 6.3 | 0.6 | 1.9 | 4.5 |

| YOLOv5s | 640 | 37.4 | 56.8 | 98 | 6.4 | 0.9 | 7.2 | 16.5 |

| YOLOv5m | 640 | 45.4 | 64.1 | 224 | 8.2 | 1.7 | 21.2 | 49.0 |

| YOLOv5l | 640 | 49.0 | 67.3 | 430 | 10.1 | 2.7 | 46.5 | 109.1 |

| YOLOv5x | 640 | 50.7 | 68.9 | 766 | 12.1 | 4.8 | 86.7 | 205.7 |

| YOLOv5n6 | 1280 | 36.0 | 54.4 | 153 | 8.1 | 2.1 | 3.2 | 4.6 |

| YOLOv5s6 | 1280 | 44.8 | 63.7 | 385 | 8.2 | 3.6 | 12.6 | 16.8 |

| YOLOv5m6 | 1280 | 51.3 | 69.3 | 887 | 11.1 | 6.8 | 35.7 | 50.0 |

| YOLOv5l6 | 1280 | 53.7 | 71.3 | 1784 | 15.8 | 10.5 | 76.8 | 111.4 |

| YOLOv5x6 + TTA |

1280 1536 |

55.0 55.8 |

72.7 72.7 |

3136 - |

26.2 - |

19.4 - |

140.7 - |

209.8 - |

Table Notes (click to expand)

- All checkpoints are trained to 300 epochs with default settings. Nano and Small models use hyp.scratch-low.yaml hyps, all others use hyp.scratch-high.yaml.

-

mAPval values are for single-model single-scale on COCO val2017 dataset.

Reproduce bypython val.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65 -

Speed averaged over COCO val images using a AWS p3.2xlarge instance. NMS times (~1 ms/img) not included.

Reproduce bypython val.py --data coco.yaml --img 640 --task speed --batch 1 -

TTA Test Time Augmentation includes reflection and scale augmentations.

Reproduce bypython val.py --data coco.yaml --img 1536 --iou 0.7 --augment

Changelog

Changes between previous release and this release: https://github.com/ultralytics/yolov5/compare/v6.0...v6.1 Changes since this release: https://github.com/ultralytics/yolov5/compare/v6.1...HEAD

New Features and Bug Fixes (271)

- fix

tfconversion in new v6 models by @YoniChechik in https://github.com/ultralytics/yolov5/pull/5153 - Use YOLOv5n for CI testing by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5154

- Update stale.yml by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5156

- Check

'onnxruntime-gpu' if torch.has_cudaby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5087 - Add class filtering to

LoadImagesAndLabels()dataloader by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5172 - W&B: fix dpp with wandb disabled by @AyushExel in https://github.com/ultralytics/yolov5/pull/5163

- Update autodownload fallbacks to v6.0 assets by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5177

- W&B: DDP fix by @AyushExel in https://github.com/ultralytics/yolov5/pull/5176

- Adjust legend labels for classes without instances by @NauchtanRobotics in https://github.com/ultralytics/yolov5/pull/5174

- Improved check_suffix() robustness to

''and""by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5192 - Highlight contributors in README by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5173

- Add hyp.scratch-med.yaml by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5196

- Update Objects365.yaml to include the official validation set by @farleylai in https://github.com/ultralytics/yolov5/pull/5194

- Autofix duplicate label handling by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5210

- Update Objects365.yaml val count by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5212

- Update/inplace ops by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5233

- Add

on_fit_epoch_endcallback by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5232 - Update rebase.yml by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5245

- Add dependabot for GH actions by @zhiqwang in https://github.com/ultralytics/yolov5/pull/5250

- Bump cirrus-actions/rebase from 1.4 to 1.5 by @dependabot in https://github.com/ultralytics/yolov5/pull/5251

- Bump actions/cache from 1 to 2.1.6 by @dependabot in https://github.com/ultralytics/yolov5/pull/5252

- Bump actions/stale from 3 to 4 by @dependabot in https://github.com/ultralytics/yolov5/pull/5253

- Update rebase.yml with workflows permissions by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5255

- autosplit: take image files with uppercase extensions into account by @jdfr in https://github.com/ultralytics/yolov5/pull/5269

- take EXIF orientation tags into account when fixing corrupt images by @jdfr in https://github.com/ultralytics/yolov5/pull/5270

- More informative

EarlyStopping()message by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5303 - Uncomment OpenCV 4.5.4 requirement in detect.py by @SamFC10 in https://github.com/ultralytics/yolov5/pull/5305

- Weights download script minor improvements by @CristiFati in https://github.com/ultralytics/yolov5/pull/5213

- Small fixes to docstrings by @zhiqwang in https://github.com/ultralytics/yolov5/pull/5313

- W&B: Media panel fix by @AyushExel in https://github.com/ultralytics/yolov5/pull/5317

- Add

autobatchfeature for bestbatch-sizeestimation by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5092 - Update

AutoShape.forward()model.classes example by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5324 - DDP

nlfix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5332 - Add pre-commit CI action by @Borda in https://github.com/ultralytics/yolov5/pull/4982

- W&B: Fix sweep by @AyushExel in https://github.com/ultralytics/yolov5/pull/5402

- Update GitHub issues templates by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5404

- Fix

MixConv2d()remove shortcut + apply depthwise by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5410 - Meshgrid

indexing='ij'for PyTorch 1.10 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5309 - Update

get_loggers()by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/4854 - Update README.md by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5438

- Fixed a small typo in CONTRIBUTING.md by @pranathlcp in https://github.com/ultralytics/yolov5/pull/5445

- Update

check_git_status()to run underROOTworking directory by @MrinalJain17 in https://github.com/ultralytics/yolov5/pull/5441 - Fix tf.py

LoadImages()dataloader return values by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5455 - Remove

check_requirements(('tensorflow>=2.4.1',))by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5476 - Improve GPU name by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5478

- Update torch_utils.py import

LOGGERby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5483 - Add tf.py verification printout by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5484

- Keras CI fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5486

- Delete code-format.yml by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5487

- Fix float zeros format by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5491

- Handle edgetpu model inference by @Namburger in https://github.com/ultralytics/yolov5/pull/5372

- precommit: isort by @Borda in https://github.com/ultralytics/yolov5/pull/5493

- Fix

increment_path()with multiple-suffix filenames by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5518 - Write date in checkpoint file by @developer0hye in https://github.com/ultralytics/yolov5/pull/5514

- Update plots.py feature_visualization path issues by @ys31jp in https://github.com/ultralytics/yolov5/pull/5519

- Update cls bias init by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5520

- Common

is_cocologic betwen train.py and val.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5521 - Fix

increment_path()explicit file vs dir handling by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5523 - Fix detect.py URL inference by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5525

- Update

check_file()avoid repeat URL downloads by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5526 - Update export.py by @nanmi in https://github.com/ultralytics/yolov5/pull/5471

- Update train.py by @wonbeomjang in https://github.com/ultralytics/yolov5/pull/5451

- Suppress ONNX export trace warning by @deepsworld in https://github.com/ultralytics/yolov5/pull/5437

- Update autobatch.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5536

- Update autobatch.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5538

- Update Issue Templates with 💡 ProTip! by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5539

- Update

models/hub/*.yamlfiles for v6.0n release by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5540 -

intersect_dicts()in hubconf.py fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5542 - Fix for *.yaml emojis on load by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5543

- Fix

save_one_box()by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5545 - Inside Ultralytics video https://youtu.be/Zgi9g1ksQHc by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5546

- Add

--conf-thres>> 0.001 warning by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5567 -

LOGGERconsolidation by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5569 - New

DetectMultiBackend()class by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5549 - FROM nvcr.io/nvidia/pytorch:21.10-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5592

- Add

notebook_init()to utils/init.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5488 - Fix

check_requirements()resource warning allocation open file by @ayman-saleh in https://github.com/ultralytics/yolov5/pull/5602 - Update train, val

tqdmto fixed width by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5367 - Update val.py

speedandstudytasks by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5608 -

np.unique()sort fix for segments by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5609 - Improve plots.py robustness by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5616

- HUB dataset previews to JPEG by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5627

- DDP

WORLD_SIZE-safe dataloader workers by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5631 - Default DataLoader

shuffle=Truefor training by @werner-duvaud in https://github.com/ultralytics/yolov5/pull/5623 - AutoAnchor and AutoBatch

LOGGERby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5635 - W&B refactor, handle exceptions, CI example by @AyushExel in https://github.com/ultralytics/yolov5/pull/5618

- Replace 2

transpose()with 1permutein TransformerBlock()` by @dingyiwei in https://github.com/ultralytics/yolov5/pull/5645 - Bump pip from 19.2 to 21.1 in /utils/google_app_engine by @dependabot in https://github.com/ultralytics/yolov5/pull/5661

- Update ci-testing.yml to Python 3.9 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5660

- TFDetect dynamic anchor count assignment fix by @nrupatunga in https://github.com/ultralytics/yolov5/pull/5668

- Update train.py comment to 'Model attributes' by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5670

- Update export.py docstring by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5689

-

NUM_THREADSleave at least 1 CPU free by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5706 - Prune unused imports by @Borda in https://github.com/ultralytics/yolov5/pull/5711

- Explicitly compute TP, FP in val.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5727

- Remove

.autoshape()method by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5694 - SECURITY.md by @IL2006 in https://github.com/ultralytics/yolov5/pull/5695

- Save *.npy features on detect.py

--visualizeby @Zengyf-CVer in https://github.com/ultralytics/yolov5/pull/5701 - Export, detect and validation with TensorRT engine file by @imyhxy in https://github.com/ultralytics/yolov5/pull/5699

- Do not save hyp.yaml and opt.yaml on evolve by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5775

- fix the path error in export.py by @miknyko in https://github.com/ultralytics/yolov5/pull/5778

- TorchScript

torch==1.7.0Path support by @miknyko in https://github.com/ultralytics/yolov5/pull/5781 - W&B: refactor W&B tables by @AyushExel in https://github.com/ultralytics/yolov5/pull/5737

- Scope TF imports in

DetectMultiBackend()by @phodgers in https://github.com/ultralytics/yolov5/pull/5792 - Remove NCOLS from tqdm by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5804

- Refactor new

model.warmup()method by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5810 - GCP VM from Image example by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5814

- Bump actions/cache from 2.1.6 to 2.1.7 by @dependabot in https://github.com/ultralytics/yolov5/pull/5816

- Update

dataset_stats()tocv2.INTER_AREAby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5821 - Fix TensorRT potential unordered binding addresses by @imyhxy in https://github.com/ultralytics/yolov5/pull/5826

- Handle non-TTY

wandb.errors.UsageErrorby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5839 - Avoid inplace modifying

imgsinLoadStreamsby @passerbythesun in https://github.com/ultralytics/yolov5/pull/5850 - Update

LoadImagesret_val=Falsehandling by @gmt710 in https://github.com/ultralytics/yolov5/pull/5852 - Update val.py by @pradeep-vishnu in https://github.com/ultralytics/yolov5/pull/5838

- Update TorchScript suffix to

*.torchscriptby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5856 - Add

--workers 8argument to val.py by @iumyx2612 in https://github.com/ultralytics/yolov5/pull/5857 - Update

plot_lr_scheduler()by @daikankan in https://github.com/ultralytics/yolov5/pull/5864 - Update

nlaftercutout()by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5873 -

AutoShape()models asDetectMultiBackend()instances by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5845 - Single-command multiple-model export by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5882

-

Detections().tolist()explicit argument fix by @lizeng614 in https://github.com/ultralytics/yolov5/pull/5907 - W&B: Fix bug in upload dataset module by @AyushExel in https://github.com/ultralytics/yolov5/pull/5908

- Add *.engine (TensorRT extensions) to .gitignore by @greg2451 in https://github.com/ultralytics/yolov5/pull/5911

- Add ONNX inference providers by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5918

- Add hardware checks to

notebook_init()by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5919 - Revert "Update

plot_lr_scheduler()" by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5920 - Absolute '/content/sample_data' by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5922

- Default PyTorch Hub to

autocast(False)by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5926 - Fix ONNX opset inconsistency with parseargs and run args by @d57montes in https://github.com/ultralytics/yolov5/pull/5937

- Make

select_device()robust tobatch_size=-1by @youyuxiansen in https://github.com/ultralytics/yolov5/pull/5940 - fix .gitignore not tracking existing folders by @pasmai in https://github.com/ultralytics/yolov5/pull/5946

- Update

strip_optimizer()by @iumyx2612 in https://github.com/ultralytics/yolov5/pull/5949 - Add nms and agnostic nms to export.py by @d57montes in https://github.com/ultralytics/yolov5/pull/5938

- Refactor

NUM_THREADSby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5954 - Fix Detections class

tolist()method by @yonomitt in https://github.com/ultralytics/yolov5/pull/5945 - Fix

imgszbug by @d57montes in https://github.com/ultralytics/yolov5/pull/5948 -

pretrained=Falsefix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5966 - make parameter ignore epochs by @jinmc in https://github.com/ultralytics/yolov5/pull/5972

- YOLOv5s6 params and FLOPs fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5977

- Update callbacks.py with

__init__()by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5979 - Increase

ar_thrfrom 20 to 100 for better detection on slender (high aspect ratio) objects by @MrinalJain17 in https://github.com/ultralytics/yolov5/pull/5556 - Allow

--weights URLby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5991 - Recommend

jar xf file.zipfor zips by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/5993 - *.torchscript inference

self.jitfix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6007 - Check TensorRT>=8.0.0 version by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6021

- Multi-layer capable

--freezeargument by @youyuxiansen in https://github.com/ultralytics/yolov5/pull/6019 - train -> val comment fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6024

- Add dataset source citations by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6032

- Kaggle

LOGGERfix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6041 - Simplify

set_logging()indexing by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6042 -

--freezefix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6044 - OpenVINO Export by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6057

- Reduce G/D/CIoU logic operations by @jedi007 in https://github.com/ultralytics/yolov5/pull/6074

- Init tensor directly on device by @deepsworld in https://github.com/ultralytics/yolov5/pull/6068

- W&B: track batch size after autobatch by @AyushExel in https://github.com/ultralytics/yolov5/pull/6039

- W&B: Log best results after training ends by @AyushExel in https://github.com/ultralytics/yolov5/pull/6120

- Log best results by @awsaf49 in https://github.com/ultralytics/yolov5/pull/6085

- Refactor/reduce G/C/D/IoU

if: elsestatements by @cmoseses in https://github.com/ultralytics/yolov5/pull/6087 - Add EdgeTPU support by @zldrobit in https://github.com/ultralytics/yolov5/pull/3630

- Enable AdamW optimizer by @bilzard in https://github.com/ultralytics/yolov5/pull/6152

- Update export format docstrings by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6151

- Update greetings.yml by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6165

- [pre-commit.ci] pre-commit suggestions by @pre-commit-ci in https://github.com/ultralytics/yolov5/pull/6177

- Update NMS

max_wh=7680for 8k images by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6178 - Add OpenVINO inference by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6179

- Ignore

*_openvino_model/dir by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6180 - Global export format sort by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6182

- Fix TorchScript on mobile export by @yinrong in https://github.com/ultralytics/yolov5/pull/6183

- TensorRT 7

anchor_gridcompatibility fix by @imyhxy in https://github.com/ultralytics/yolov5/pull/6185 - Add

tensorrt>=7.0.0checks by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6193 - Add CoreML inference by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6195

- Fix

nan-robust stream FPS by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6198 - Edge TPU compiler comment by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6196

- TFLite

--int8'flatbuffers==1.12' fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6216 - TFLite

--int8'flatbuffers==1.12' fix 2 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6217 - Add

edgetpu_compilerchecks by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6218 - Attempt

edgetpu-compilerautoinstall by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6223 - Update README speed reproduction command by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6228

- Update P2-P7

models/hubvariants by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6230 - TensorRT 7 export fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6235

- Fix

cmdstring ontfjsexport by @dart-bird in https://github.com/ultralytics/yolov5/pull/6243 - Enable ONNX

--halfFP16 inference by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6268 - Update export.py with Detect, Validate usages by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6280

- Add

is_kaggle()function by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6285 - Fix

devicecount check by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6290 - Fixing bug multi-gpu training by @hdnh2006 in https://github.com/ultralytics/yolov5/pull/6299

-

select_device()cleanup by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6302 - Fix

train.pyparameter groups desc error by @Otfot in https://github.com/ultralytics/yolov5/pull/6318 - Remove

dataset_stats()autodownload capability by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6303 - Console corrupted -> corrupt by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6338

- TensorRT

assert im.device.type != 'cpu'on export by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6340 -

export.pyreturn exported files/dirs by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6343 -

export.pyautomaticforward_exportby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6352 - Add optional

VERBOSEenvironment variable by @johnk2hawaii in https://github.com/ultralytics/yolov5/pull/6353 - Reuse

de_parallel()rather thanis_parallel()by @imyhxy in https://github.com/ultralytics/yolov5/pull/6354 -

DEVICE_COUNTinstead ofWORLD_SIZEto calculatenwby @sitecao in https://github.com/ultralytics/yolov5/pull/6324 - Flush callbacks when on

--evolveby @AyushExel in https://github.com/ultralytics/yolov5/pull/6374 - FROM nvcr.io/nvidia/pytorch:21.12-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6377

- FROM nvcr.io/nvidia/pytorch:21.10-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6379

- Add

albumentationsto Dockerfile by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6392 - Add

stop_training=Falseflag to callbacks by @haimat in https://github.com/ultralytics/yolov5/pull/6365 - Add

detect.pyGIF video inference by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6410 - Update

greetings.yamlemail address by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6412 - Rename logger from 'utils.logger' to 'yolov5' by @JonathanSamelson in https://github.com/ultralytics/yolov5/pull/6421

- Prefer

tflite_runtimefor TFLite inference if installed by @motokimura in https://github.com/ultralytics/yolov5/pull/6406 - Update workflows by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6427

- Namespace

VERBOSEenv variable toYOLOv5_VERBOSEby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6428 - Add

*.asfvideo support by @toschi23 in https://github.com/ultralytics/yolov5/pull/6436 - Revert "Remove

dataset_stats()autodownload capability" by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6442 - Fix

select_device()for Multi-GPU by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6434 - Fix2

select_device()for Multi-GPU by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6461 - Add Product Hunt social media icon by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6464

- Resolve dataset paths by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6489

- Simplify TF normalized to pixels by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6494

- Improved

export.pyusage examples by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6495 - CoreML inference fix

list()->sorted()by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6496 - Suppress

torch.jit.TracerWarningon export by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6498 - Suppress

export.run()TracerWarningby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6499 - W&B: Remember

batch_sizeon resuming by @AyushExel in https://github.com/ultralytics/yolov5/pull/6512 - Update hyp.scratch-high.yaml

lrf: 0.1by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6525 - TODO issues exempt from stale action by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6530

- Update val_batch*.jpg for Chinese fonts by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6526

- Social icons after text by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6473

- Edge TPU compiler

sudofix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6531 - Edge TPU export 'list index out of range' fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6533

- Edge TPU

tf.lite.experimental.load_delegatefix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6536 - Fixing minor multi-streaming issues with TensoRT engine by @greg2451 in https://github.com/ultralytics/yolov5/pull/6504

- Load checkpoint on CPU instead of on GPU by @bilzard in https://github.com/ultralytics/yolov5/pull/6516

- flake8: code meanings by @Borda in https://github.com/ultralytics/yolov5/pull/6481

- Fix 6 Flake8 issues by @Borda in https://github.com/ultralytics/yolov5/pull/6541

- Edge TPU TF imports fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6542

- Move trainloader functions to class methods by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6559

- Improved AutoBatch DDP error message by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6568

- Fix zero-export handling with

if any(f):by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6569 - Fix

plot_labels()colored histogram bug by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6574 - Allow custom

--evolveproject names by @MattVAD in https://github.com/ultralytics/yolov5/pull/6567 - Add

DATASETS_DIRglobal in general.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6578 - return

optfromtrain.run()by @chf4850 in https://github.com/ultralytics/yolov5/pull/6581 - Fix YouTube dislike button bug in

pafypackage by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6603 - Fix

hyp_evolve.yamlindexing bug by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6604 - Fix

ROOT / datawhen running W&Blog_dataset()by @or-toledano in https://github.com/ultralytics/yolov5/pull/6606 - YouTube dependency fix

youtube_dl==2020.12.2by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6612 - Add YOLOv5n to Reproduce section by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6619

- W&B: Improve resume stability by @AyushExel in https://github.com/ultralytics/yolov5/pull/6611

- W&B: don't log media in evolve by @AyushExel in https://github.com/ultralytics/yolov5/pull/6617

- YOLOv5 Export Benchmarks by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6613

- Fix ConfusionMatrix scale

vmin=0.0by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6638 - Fixed wandb logger

KeyErrorby @imyhxy in https://github.com/ultralytics/yolov5/pull/6637 - Fix yolov3.yaml remove list by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6655

- Validate with 2x

--workersby @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6658 - Validate with 2x

--workerssingle-GPU/CPU fix by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6659 - Add

--cache valoption by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6663 - Robust

scipy.cluster.vq.kmeanstoo few points by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6668 - Update Dockerfile

torch==1.10.2+cu113by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6669 - FROM nvcr.io/nvidia/pytorch:22.01-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6670

- FROM nvcr.io/nvidia/pytorch:21.10-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6671

- Update Dockerfile reorder installs by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6672

- FROM nvcr.io/nvidia/pytorch:22.01-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6673

- FROM nvcr.io/nvidia/pytorch:21.10-py3 by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6677

- Fix TF exports >= 2GB by @zldrobit in https://github.com/ultralytics/yolov5/pull/6292

- Fix

--evolve --bucket gs://...by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6698 - Fix CoreML P6 inference by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6700

- Fix floating point in number of workers by @SamuelYvon in https://github.com/ultralytics/yolov5/pull/6701

- Edge TPU inference fix by @RaffaeleGalliera in https://github.com/ultralytics/yolov5/pull/6686

- Use

export_formats()in export.py by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6705 - Suppress

torchAMP-CPU warnings by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6706 - Update

nwtomax(nd, 1)by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6714 - GH: add PR template by @Borda in https://github.com/ultralytics/yolov5/pull/6482

- Switch default LR scheduler from cos to linear by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6729

- Updated VOC hyperparameters by @glenn-jocher in https://github.com/ultralytics/yolov5/pull/6732

New Contributors (48)

- @YoniChechik made their first contribution in https://github.com/ultralytics/yolov5/pull/5153

- @farleylai made their first contribution in https://github.com/ultralytics/yolov5/pull/5194

- @jdfr made their first contribution in https://github.com/ultralytics/yolov5/pull/5269

- @pranathlcp made their first contribution in https://github.com/ultralytics/yolov5/pull/5445

- @MrinalJain17 made their first contribution in https://github.com/ultralytics/yolov5/pull/5441

- @Namburger made their first contribution in https://github.com/ultralytics/yolov5/pull/5372

- @ys31jp made their first contribution in https://github.com/ultralytics/yolov5/pull/5519

- @nanmi made their first contribution in https://github.com/ultralytics/yolov5/pull/5471

- @wonbeomjang made their first contribution in https://github.com/ultralytics/yolov5/pull/5451

- @deepsworld made their first contribution in https://github.com/ultralytics/yolov5/pull/5437

- @ayman-saleh made their first contribution in https://github.com/ultralytics/yolov5/pull/5602

- @werner-duvaud made their first contribution in https://github.com/ultralytics/yolov5/pull/5623

- @nrupatunga made their first contribution in https://github.com/ultralytics/yolov5/pull/5668

- @IL2006 made their first contribution in https://github.com/ultralytics/yolov5/pull/5695

- @Zengyf-CVer made their first contribution in https://github.com/ultralytics/yolov5/pull/5701

- @miknyko made their first contribution in https://github.com/ultralytics/yolov5/pull/5778

- @phodgers made their first contribution in https://github.com/ultralytics/yolov5/pull/5792

- @passerbythesun made their first contribution in https://github.com/ultralytics/yolov5/pull/5850

- @gmt710 made their first contribution in https://github.com/ultralytics/yolov5/pull/5852

- @pradeep-vishnu made their first contribution in https://github.com/ultralytics/yolov5/pull/5838

- @iumyx2612 made their first contribution in https://github.com/ultralytics/yolov5/pull/5857

- @daikankan made their first contribution in https://github.com/ultralytics/yolov5/pull/5864

- @lizeng614 made their first contribution in https://github.com/ultralytics/yolov5/pull/5907

- @greg2451 made their first contribution in https://github.com/ultralytics/yolov5/pull/5911

- @youyuxiansen made their first contribution in https://github.com/ultralytics/yolov5/pull/5940

- @pasmai made their first contribution in https://github.com/ultralytics/yolov5/pull/5946

- @yonomitt made their first contribution in https://github.com/ultralytics/yolov5/pull/5945

- @jinmc made their first contribution in https://github.com/ultralytics/yolov5/pull/5972

- @jedi007 made their first contribution in https://github.com/ultralytics/yolov5/pull/6074

- @awsaf49 made their first contribution in https://github.com/ultralytics/yolov5/pull/6085

- @cmoseses made their first contribution in https://github.com/ultralytics/yolov5/pull/6087

- @bilzard made their first contribution in https://github.com/ultralytics/yolov5/pull/6152

- @pre-commit-ci made their first contribution in https://github.com/ultralytics/yolov5/pull/6177

- @yinrong made their first contribution in https://github.com/ultralytics/yolov5/pull/6183

- @dart-bird made their first contribution in https://github.com/ultralytics/yolov5/pull/6243

- @hdnh2006 made their first contribution in https://github.com/ultralytics/yolov5/pull/6299

- @Otfot made their first contribution in https://github.com/ultralytics/yolov5/pull/6318

- @johnk2hawaii made their first contribution in https://github.com/ultralytics/yolov5/pull/6353

- @sitecao made their first contribution in https://github.com/ultralytics/yolov5/pull/6324

- @haimat made their first contribution in https://github.com/ultralytics/yolov5/pull/6365

- @JonathanSamelson made their first contribution in https://github.com/ultralytics/yolov5/pull/6421

- @motokimura made their first contribution in https://github.com/ultralytics/yolov5/pull/6406

- @toschi23 made their first contribution in https://github.com/ultralytics/yolov5/pull/6436

- @MattVAD made their first contribution in https://github.com/ultralytics/yolov5/pull/6567

- @chf4850 made their first contribution in https://github.com/ultralytics/yolov5/pull/6581

- @or-toledano made their first contribution in https://github.com/ultralytics/yolov5/pull/6606

- @SamuelYvon made their first contribution in https://github.com/ultralytics/yolov5/pull/6701

- @RaffaeleGalliera made their first contribution in https://github.com/ultralytics/yolov5/pull/6686

Full Changelog: https://github.com/ultralytics/yolov5/compare/v6.0...v6.1

v6.0

2 years agov5.0

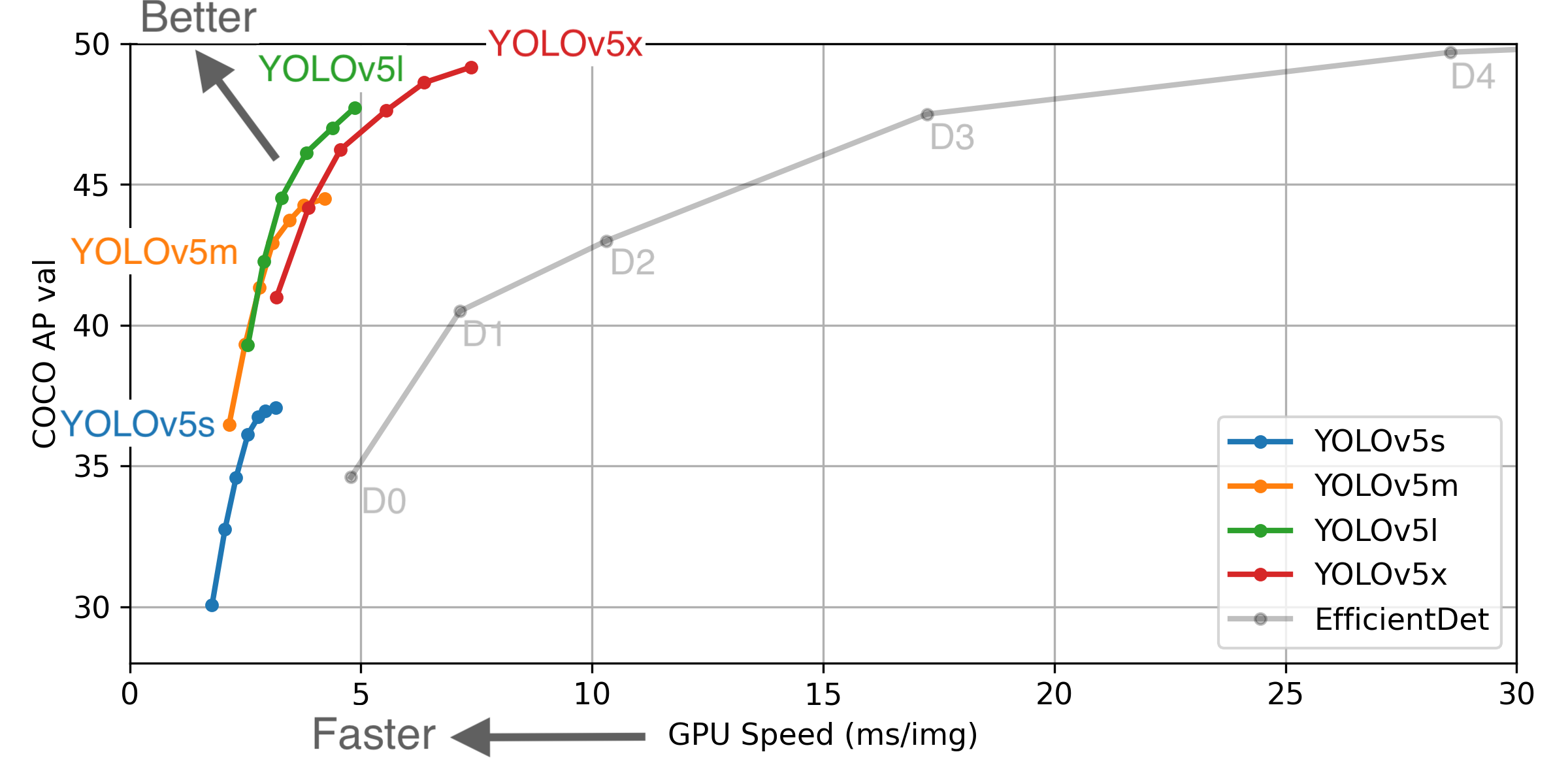

3 years agoThis release implements YOLOv5-P6 models and retrained YOLOv5-P5 models. All model sizes YOLOv5s/m/l/x are now available in both P5 and P6 architectures:

-

YOLOv5-P5 models (same architecture as v4.0 release): 3 output layers P3, P4, P5 at strides 8, 16, 32, trained at

--img 640

python detect.py --weights yolov5s.pt # P5 models

yolov5m.pt

yolov5l.pt

yolov5x.pt

-

YOLOv5-P6 models: 4 output layers P3, P4, P5, P6 at strides 8, 16, 32, 64 trained at

--img 1280

python detect.py --weights yolov5s6.pt # P6 models

yolov5m6.pt

yolov5l6.pt

yolov5x6.pt

Example usage:

# Command Line

python detect.py --weights yolov5m.pt --img 640 # P5 model at 640

python detect.py --weights yolov5m6.pt --img 640 # P6 model at 640

python detect.py --weights yolov5m6.pt --img 1280 # P6 model at 1280

# PyTorch Hub

model = torch.hub.load('ultralytics/yolov5', 'yolov5m6') # P6 model

results = model(imgs, size=1280) # inference at 1280

Notable Updates

-

YouTube Inference: Direct inference from YouTube videos, i.e.

python detect.py --source 'https://youtu.be/NUsoVlDFqZg'. Live streaming videos and normal videos supported. (https://github.com/ultralytics/yolov5/pull/2752) - AWS Integration: Amazon AWS integration and new AWS Quickstart Guide for simple EC2 instance YOLOv5 training and resuming of interrupted Spot instances. (https://github.com/ultralytics/yolov5/pull/2185)

- Supervise.ly Integration: New integration with the Supervisely Ecosystem for training and deploying YOLOv5 models with Supervise.ly (https://github.com/ultralytics/yolov5/issues/2518)

- Improved W&B Integration: Allows saving datasets and models directly to Weights & Biases. This allows for --resume directly from W&B (useful for temporary environments like Colab), as well as enhanced visualization tools. See this blog by @AyushExel for details. (https://github.com/ultralytics/yolov5/pull/2125)

Updated Results

P6 models include an extra P6/64 output layer for detection of larger objects, and benefit the most from training at higher resolution. For this reason we trained all P5 models at 640, and all P6 models at 1280.

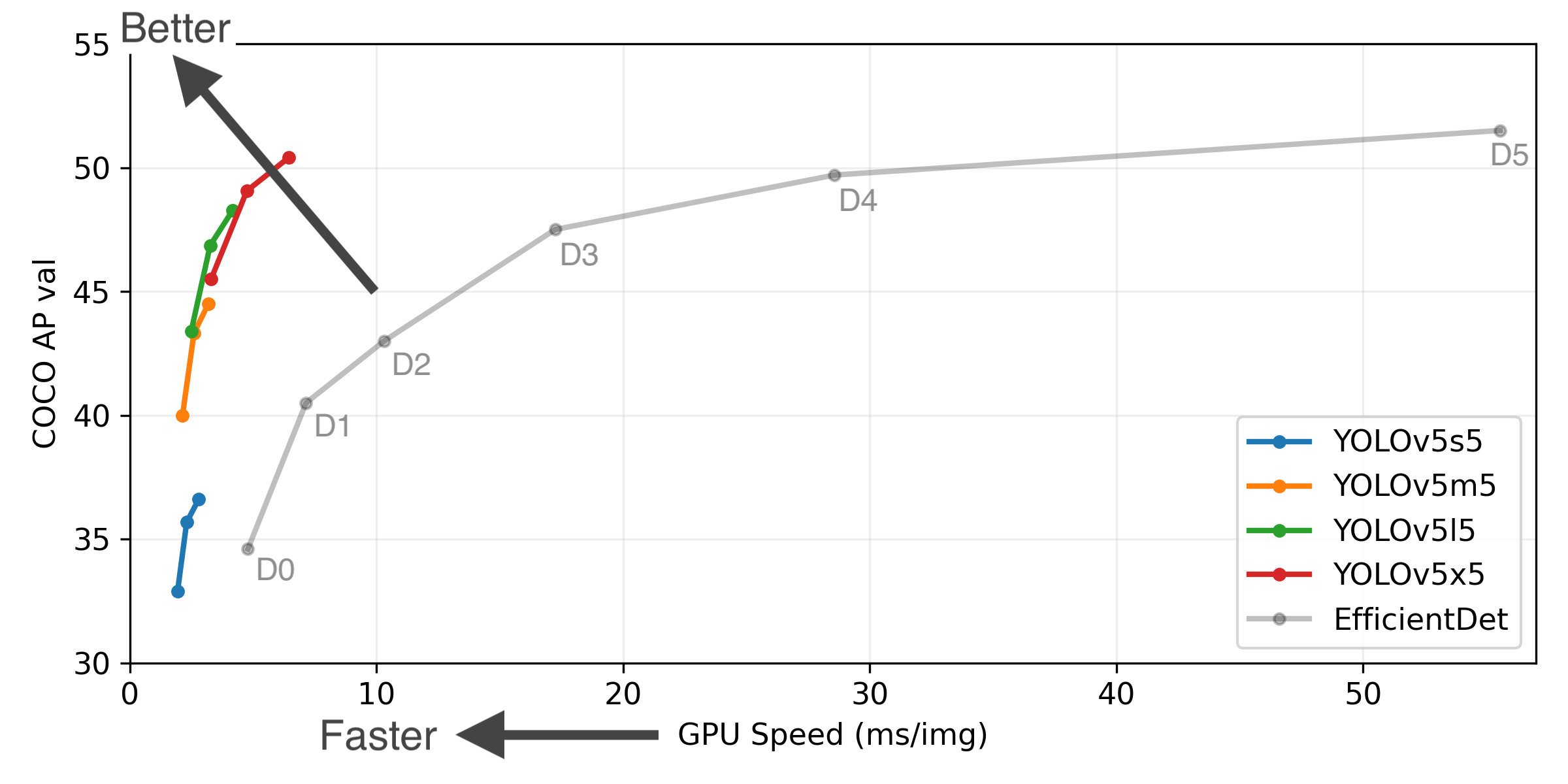

YOLOv5-P5 640 Figure (click to expand)

Figure Notes (click to expand)

- GPU Speed measures end-to-end time per image averaged over 5000 COCO val2017 images using a V100 GPU with batch size 32, and includes image preprocessing, PyTorch FP16 inference, postprocessing and NMS.

- EfficientDet data from google/automl at batch size 8.

-

Reproduce by

python test.py --task study --data coco.yaml --iou 0.7 --weights yolov5s6.pt yolov5m6.pt yolov5l6.pt yolov5x6.pt

- April 11, 2021: v5.0 release: YOLOv5-P6 1280 models, AWS, Supervise.ly and YouTube integrations.

- January 5, 2021: v4.0 release: nn.SiLU() activations, Weights & Biases logging, PyTorch Hub integration.

- August 13, 2020: v3.0 release: nn.Hardswish() activations, data autodownload, native AMP.

- July 23, 2020: v2.0 release: improved model definition, training and mAP.

Pretrained Checkpoints

| Model | size (pixels) |

mAPval 0.5:0.95 |

mAPtest 0.5:0.95 |

mAPval 0.5 |

Speed V100 (ms) |

params (M) |

FLOPS 640 (B) |

|

|---|---|---|---|---|---|---|---|---|

| YOLOv5s | 640 | 36.7 | 36.7 | 55.4 | 2.0 | 7.3 | 17.0 | |

| YOLOv5m | 640 | 44.5 | 44.5 | 63.1 | 2.7 | 21.4 | 51.3 | |

| YOLOv5l | 640 | 48.2 | 48.2 | 66.9 | 3.8 | 47.0 | 115.4 | |

| YOLOv5x | 640 | 50.4 | 50.4 | 68.8 | 6.1 | 87.7 | 218.8 | |

| YOLOv5s6 | 1280 | 43.3 | 43.3 | 61.9 | 4.3 | 12.7 | 17.4 | |

| YOLOv5m6 | 1280 | 50.5 | 50.5 | 68.7 | 8.4 | 35.9 | 52.4 | |

| YOLOv5l6 | 1280 | 53.4 | 53.4 | 71.1 | 12.3 | 77.2 | 117.7 | |

| YOLOv5x6 | 1280 | 54.4 | 54.4 | 72.0 | 22.4 | 141.8 | 222.9 | |

| YOLOv5x6 TTA | 1280 | 55.0 | 55.0 | 72.0 | 70.8 | - | - |

Table Notes (click to expand)

- APtest denotes COCO test-dev2017 server results, all other AP results denote val2017 accuracy.

- AP values are for single-model single-scale unless otherwise noted. Reproduce mAP by

python test.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65 - SpeedGPU averaged over 5000 COCO val2017 images using a GCP n1-standard-16 V100 instance, and includes FP16 inference, postprocessing and NMS. Reproduce speed by

python test.py --data coco.yaml --img 640 --conf 0.25 --iou 0.45 - All checkpoints are trained to 300 epochs with default settings and hyperparameters (no autoaugmentation).

- Test Time Augmentation (TTA) includes reflection and scale augmentation. Reproduce TTA by

python test.py --data coco.yaml --img 1536 --iou 0.7 --augment

Changelog

Changes between previous release and this release: https://github.com/ultralytics/yolov5/compare/v4.0...v5.0 Changes since this release: https://github.com/ultralytics/yolov5/compare/v5.0...HEAD

Click a section below to expand details:

Implemented Enhancements (26)

- Return predictions as json #2703

- Single channel image training? #2609

- Images in MPO Format are considered corrupted #2446

- Improve Validation Visualization #2384