SRResCGAN

Code repo for "Deep Generative Adversarial Residual Convolutional Networks for Real-World Super-Resolution" (CVPRW NTIRE2020).

Super-Resolution Residual Convolutional Generative Adversarial Network

Update: Our improved real-image SR method, "Deep Cyclic Generative Adversarial Residual Convolutional Networks for Real Image Super-Resolution (SRResCycGAN)", appeared in the AIM-2020 ECCV workshop. [Project Website]

An official PyTorch implementation of the SRResCGAN model as described in the paper Deep Generative Adversarial Residual Convolutional Networks for Real-World Super-Resolution. This work participated in the CVPRW NTIRE 2020 challenge on Real-World Super-Resolution (RWSR).

✨ Visual examples:

- Abstract

- Spotlight Video

- Paper

- Pre-trained Models

- Citation

- Quick Test

- Train models

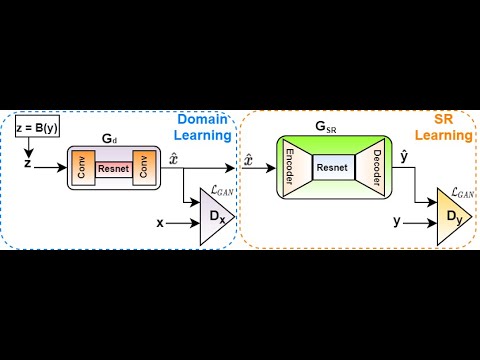

- SRResCGAN Architecture

- Quantitative Results

- Visual Results

- Code Acknowledgement

Abstract

Most current deep learning based single image super-resolution (SISR) methods focus on designing deeper / wider models to learn the non-linear mapping between low-resolution (LR) inputs and the high-resolution (HR) outputs from a large number of paired (LR/HR) training data. They usually take as assumption that the LR image is a bicubic down-sampled version of the HR image. However, such degradation process is not available in real-world settings i.e. inherent sensor noise, stochastic noise, compression artifacts, possible mismatch between image degradation process and camera device. It reduces significantly the performance of current SISR methods due to real-world image corruptions. To address these problems, we propose a deep Super-Resolution Residual Convolutional Generative Adversarial Network (SRResCGAN) to follow the real-world degradation settings by adversarial training the model with pixel-wise supervision in the HR domain from its generated LR counterpart. The proposed network exploits the residual learning by minimizing the energy-based objective function with powerful image regularization and convex optimization techniques. We demonstrate our proposed approach in quantitative and qualitative experiments that generalize robustly to real input and it is easy to deploy for other down-scaling operators and mobile/embedded devices.
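The "energy-based objective function" mentioned in the abstract can be read against the standard variational formulation of image restoration. The block below is a generic sketch of that formulation, not the paper's exact equations. The symbols (y for the observed LR image, x for the latent HR image, H for the down-sampling operator, η for noise with standard deviation σ, R for the image regularizer, λ for its weight) are notation assumed here for illustration.

```latex
% Generic degradation model and energy minimization (assumed notation).
\begin{align}
  \mathbf{y} &= \mathbf{H}\mathbf{x} + \boldsymbol{\eta}, \\
  \hat{\mathbf{x}} &= \arg\min_{\mathbf{x}} \;
      \frac{1}{2\sigma^{2}} \lVert \mathbf{y} - \mathbf{H}\mathbf{x} \rVert_2^{2}
      + \lambda\, \mathcal{R}(\mathbf{x}).
\end{align}
```

The first term keeps the estimate consistent with the observed LR image under the assumed degradation, and the second encodes prior knowledge about natural images. Bicubic-only training corresponds to fixing H to a bicubic kernel and ignoring η, which is the assumption the abstract argues does not hold for real-world inputs.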

Spotlight Video

Paper

Pre-trained Models

| | DSGAN | SRResCGAN |
|---|---|---|
| NTIRE2020 RWSR | Source-Domain-Learning | SR-learning |

BibTeX

@InProceedings{Umer_2020_CVPR_Workshops,

author = {Muhammad Umer, Rao and Luca Foresti, Gian and Micheloni, Christian},

title = {Deep Generative Adversarial Residual Convolutional Networks for Real-World Super-Resolution},

booktitle = {The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},

month = {June},

year = {2020}

}

Cite the code repos

Use the following CITATION.cff file to cite the SRResCGAN code repository.

Quick Test

This model can be run on arbitrary images with a Docker image hosted on Replicate: https://beta.replicate.ai/RaoUmer/SRResCGAN. Below are instructions for how to run the model without Docker:

Dependencies

- Python 3.7 (version >= 3.0)

- PyTorch >= 1.0 (CUDA version >= 8.0 if installing with CUDA.)

- Python packages:

pip install numpy opencv-python
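Before running the test scripts, it can help to confirm that the dependencies listed above are visible to the interpreter. The following is a minimal sketch using only the standard library; the helper names `missing_modules` and `python_is_supported` are hypothetical and not part of the repository. It only checks that modules can be found and does not verify the PyTorch or CUDA versions.

```python
import sys
from importlib.util import find_spec

# Import names of the packages listed above; opencv-python is imported as "cv2".
REQUIRED_MODULES = ("numpy", "cv2", "torch")


def missing_modules(names=REQUIRED_MODULES):
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if find_spec(name) is None]


def python_is_supported(version_info=sys.version_info):
    """The dependency list above asks for Python >= 3.0."""
    return tuple(version_info[:2]) >= (3, 0)


if __name__ == "__main__":
    print("Python OK:", python_is_supported())
    print("Missing modules:", missing_modules() or "none")
```

If the script reports a missing module, install it with pip (or follow the PyTorch installation instructions for `torch`) before continuing.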

Test models

- Clone this GitHub repository.

git clone https://github.com/RaoUmer/SRResCGAN

cd SRResCGAN

cd srrescgan_code_demo

- Place your own low-resolution images in the ./srrescgan_code_demo/LR folder. (There are two sample images, i.e. 0815 and 0829.)

- Download the pretrained models from Google Drive and place them in ./srrescgan_code_demo/trained_nets_x4. We provide two models: one for source-domain learning and one for SR learning.

- Run the test. We provide SRResCGAN and SRResCGAN+; configure them in test_srrescgan.py (without self-ensemble strategy) and test_srrescgan_plus.py (with self-ensemble strategy), respectively.

python test_srrescgan.py

python test_srrescgan_plus.py

- The results are in the ./srrescgan_code_demo/sr_results_x4 folder.
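The steps above mention a self-ensemble strategy without describing it. A common form in super-resolution work is geometric self-ensemble: run the model on the 8 flip/rotation variants of the input, undo each transform on the output, and average. The sketch below illustrates that idea with NumPy. Whether test_srrescgan_plus.py does exactly this is an assumption, and `self_ensemble` and `nearest_x4` are hypothetical names, with `nearest_x4` standing in for the real network.

```python
import numpy as np


def self_ensemble(model, lr_image):
    """Average a model's outputs over the 8 flip/rotation variants of the input.

    `model` is any callable mapping an (H, W, C) array to an upscaled (sH, sW, C) array.
    """
    outputs = []
    for flip in (False, True):
        for k in range(4):
            # Forward transform: optional horizontal flip, then k quarter turns.
            x = lr_image[:, ::-1] if flip else lr_image
            x = np.rot90(x, k, axes=(0, 1))
            y = model(x)
            # Undo the transform on the output, in reverse order.
            y = np.rot90(y, -k, axes=(0, 1))
            y = y[:, ::-1] if flip else y
            outputs.append(y)
    return np.mean(outputs, axis=0)


def nearest_x4(img):
    """Hypothetical stand-in for the SR network: 4x nearest-neighbour upsampling."""
    return img.repeat(4, axis=0).repeat(4, axis=1)


if __name__ == "__main__":
    lr = np.random.rand(16, 24, 3)
    sr = self_ensemble(nearest_x4, lr)
    print(sr.shape)  # 4x larger in height and width
```

The ensemble costs 8 forward passes per image, which is why it is usually offered as an optional, slower variant.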

Train models

- The SR training code is available in the training_codes directory.

SRResCGAN Architecture

Overall Representative diagram

SR Generator Network

Quantitative Results

| Dataset (HR/LR pairs) | SR methods | #Params | PSNR↑ | SSIM↑ | LPIPS↓ | Artifacts |
|---|---|---|---|---|---|---|
| Bicubic | EDSR | 43M | 24.48 | 0.53 | 0.6800 | Sensor noise (σ = 8) |
| Bicubic | EDSR | 43M | 23.75 | 0.62 | 0.5400 | JPEG compression (quality=30) |
| Bicubic | ESRGAN | 16.7M | 17.39 | 0.19 | 0.9400 | Sensor noise (σ = 8) |
| Bicubic | ESRGAN | 16.7M | 22.43 | 0.58 | 0.5300 | JPEG compression (quality=30) |
| CycleGAN | ESRGAN-FT | 16.7M | 22.42 | 0.55 | 0.3645 | Sensor noise (σ = 8) |
| CycleGAN | ESRGAN-FT | 16.7M | 22.80 | 0.57 | 0.3729 | JPEG compression (quality=30) |
| DSGAN | ESRGAN-FS | 16.7M | 22.52 | 0.52 | 0.3300 | Sensor noise (σ = 8) |
| DSGAN | ESRGAN-FS | 16.7M | 20.39 | 0.50 | 0.4200 | JPEG compression (quality=30) |
| DSGAN | SRResCGAN (ours) | 380K | 25.46 | 0.67 | 0.3604 | Sensor noise (σ = 8) |
| DSGAN | SRResCGAN (ours) | 380K | 23.34 | 0.59 | 0.4431 | JPEG compression (quality=30) |
| DSGAN | SRResCGAN+ (ours) | 380K | 26.01 | 0.71 | 0.3871 | Sensor noise (σ = 8) |
| DSGAN | SRResCGAN+ (ours) | 380K | 23.69 | 0.62 | 0.4663 | JPEG compression (quality=30) |
| DSGAN | SRResCGAN (ours) | 380K | 25.05 | 0.67 | 0.3357 | unknown (validset) |
| DSGAN | SRResCGAN+ (ours) | 380K | 25.96 | 0.71 | 0.3401 | unknown (validset) |
| DSGAN | ESRGAN-FS | 16.7M | 20.72 | 0.52 | 0.4000 | unknown (testset) |
| DSGAN | SRResCGAN (ours) | 380K | 24.87 | 0.68 | 0.3250 | unknown (testset) |
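For readers unfamiliar with the PSNR column, the metric is 10·log10(MAX² / MSE) in decibels, where MSE is the mean squared error between the reference HR image and the SR output. The sketch below computes it with NumPy for 8-bit images. The exact evaluation protocol behind the table (colour space, border cropping) is not stated here, so this is an illustration of the metric rather than a reproduction of the reported numbers, and the function name `psnr` is a hypothetical helper.

```python
import numpy as np


def psnr(reference, estimate, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two same-sized images."""
    reference = np.asarray(reference, dtype=np.float64)
    estimate = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    hr = np.full((8, 8, 3), 100, dtype=np.uint8)
    sr = hr.astype(np.float64) + 1.0  # every pixel off by one intensity level
    print(round(psnr(hr, sr), 2))
```

Higher PSNR means lower pixel-wise error, but it does not always track perceptual quality, which is why the table also reports SSIM and LPIPS.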

The NTIRE2020 RWSR Challenge Results (Track-1)

| Team | PSNR↑ | SSIM↑ | LPIPS↓ | MOS↓ |
|---|---|---|---|---|
| Impressionism | 24.67 (16) | 0.683 (13) | 0.232 (1) | 2.195 |
| Samsung-SLSI-MSL | 25.59 (12) | 0.727 (9) | 0.252 (2) | 2.425 |
| BOE-IOT-AIBD | 26.71 (4) | 0.761 (4) | 0.280 (4) | 2.495 |
| MSMers | 23.20 (18) | 0.651 (17) | 0.272 (3) | 2.530 |
| KU-ISPL | 26.23 (6) | 0.747 (7) | 0.327 (8) | 2.695 |
| InnoPeak-SR | 26.54 (5) | 0.746 (8) | 0.302 (5) | 2.740 |
| ITS425 | 27.08 (2) | 0.779 (1) | 0.325 (6) | 2.770 |
| MLP-SR (ours) | 24.87 (15) | 0.681 (14) | 0.325 (7) | 2.905 |
| Webbzhou | 26.10 (9) | 0.764 (3) | 0.341 (9) | - |
| SR-DL | 25.67 (11) | 0.718 (10) | 0.364 (10) | - |
| TeamAY | 27.09 (1) | 0.773 (2) | 0.369 (11) | - |
| BIGFEATURE-CAMERA | 26.18 (7) | 0.750 (6) | 0.372 (12) | - |
| BMIPL-UNIST-YH-1 | 26.73 (3) | 0.752 (5) | 0.379 (13) | - |
| SVNIT1-A | 21.22 (19) | 0.576 (19) | 0.397 (14) | - |
| KU-ISPL2 | 25.27 (14) | 0.680 (15) | 0.460 (15) | - |
| SuperT | 25.79 (10) | 0.699 (12) | 0.469 (16) | - |
| GDUT-wp | 26.11 (8) | 0.706 (11) | 0.496 (17) | - |
| SVNIT1-B | 24.21 (17) | 0.617 (18) | 0.562 (18) | - |
| SVNIT2 | 25.39 (13) | 0.674 (16) | 0.615 (19) | - |
| AITA-Noah-A | 24.65 (-) | 0.699 (-) | 0.222 (-) | 2.245 |
| AITA-Noah-B | 25.72 (-) | 0.737 (-) | 0.223 (-) | 2.285 |
| Bicubic | 25.48 (-) | 0.680 (-) | 0.612 (-) | 3.050 |
| ESRGAN Supervised | 24.74 (-) | 0.695 (-) | 0.207 (-) | 2.300 |

Visual Results

Validation-set (Track-1)

You can download all the SR results of our method on the validation set from Google Drive: SRResCGAN, SRResCGAN+.

Test-set (Track-1)

You can download all the SR results of our method on the test set from Google Drive: SRResCGAN, SRResCGAN+.

Real-World Smartphone images (Track-2)

You can download all the SR results of our method on the smartphone images from Google Drive: SRResCGAN, SRResCGAN+.

Code Acknowledgement

The training and testing code is partly based on ESRGAN, DSGAN, and deep_demosaick.