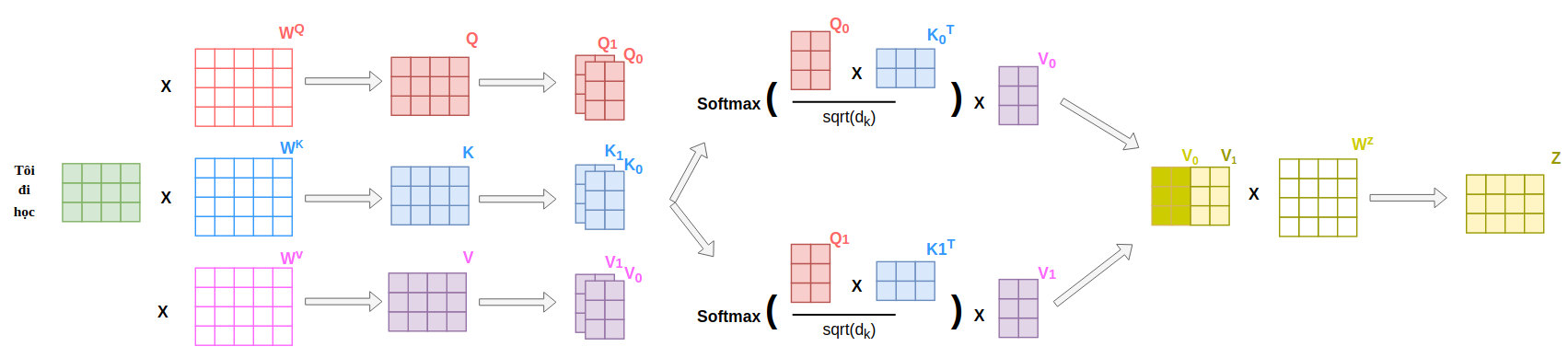

Multi Head Self Attention

A Faster PyTorch Implementation of Multi-Head Self-Attention

Project README

multi-head_self-attention


Open Source Agenda is not affiliated with "Multi Head Self Attention" Project. README Source: datnnt1997/multi-head_self-attention
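For readers new to the technique, here is a minimal sketch of standard multi-head self-attention in PyTorch. It is not the repository's implementation, and it does not reproduce whatever makes that project "faster". The class name `MultiHeadSelfAttention` and its parameters (`embed_dim`, `num_heads`, `dropout`) are hypothetical choices for illustration. The only efficiency-minded choice shown is a single fused Q/K/V projection, which computes the query, key, and value projections in one matrix multiply instead of three.

```python
import math
from typing import Optional

import torch
import torch.nn as nn
import torch.nn.functional as F


class MultiHeadSelfAttention(nn.Module):
    """Hypothetical sketch of scaled dot-product multi-head self-attention."""

    def __init__(self, embed_dim: int, num_heads: int, dropout: float = 0.0):
        super().__init__()
        if embed_dim % num_heads != 0:
            raise ValueError("embed_dim must be divisible by num_heads")
        self.num_heads = num_heads
        self.head_dim = embed_dim // num_heads
        # Fused projection: one Linear produces Q, K and V together.
        self.qkv = nn.Linear(embed_dim, 3 * embed_dim)
        self.out = nn.Linear(embed_dim, embed_dim)
        self.dropout = nn.Dropout(dropout)

    def forward(self, x: torch.Tensor, mask: Optional[torch.Tensor] = None) -> torch.Tensor:
        # x: (batch, seq_len, embed_dim)
        b, t, e = x.shape
        qkv = self.qkv(x).view(b, t, 3, self.num_heads, self.head_dim)
        # Reorder to (3, batch, heads, seq_len, head_dim), then split into Q, K, V.
        q, k, v = qkv.permute(2, 0, 3, 1, 4).unbind(0)
        # Attention scores scaled by sqrt(head_dim): (batch, heads, seq_len, seq_len)
        scores = q @ k.transpose(-2, -1) / math.sqrt(self.head_dim)
        if mask is not None:
            # Boolean mask broadcastable to the scores; True means "attend".
            scores = scores.masked_fill(~mask, float("-inf"))
        attn = self.dropout(F.softmax(scores, dim=-1))
        ctx = attn @ v  # (batch, heads, seq_len, head_dim)
        # Merge heads back into (batch, seq_len, embed_dim) and project out.
        ctx = ctx.transpose(1, 2).reshape(b, t, e)
        return self.out(ctx)


torch.manual_seed(0)
mhsa = MultiHeadSelfAttention(embed_dim=64, num_heads=8)
x = torch.randn(2, 10, 64)
y = mhsa(x)
print(y.shape)  # torch.Size([2, 10, 64])
```

On PyTorch 2.0 and later, the score, softmax, and weighted-sum steps can instead be delegated to `torch.nn.functional.scaled_dot_product_attention`, which may dispatch to fused kernels; that is one common route to a faster implementation, though not necessarily the one this project takes.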

Stars: 67

Open Issues: 1

Last Commit: 1 year ago
