Mage AI Versions

🧙 The modern replacement for Airflow. Build, run, and manage data pipelines for integrating and transforming data.

0.8.93

10 months ago

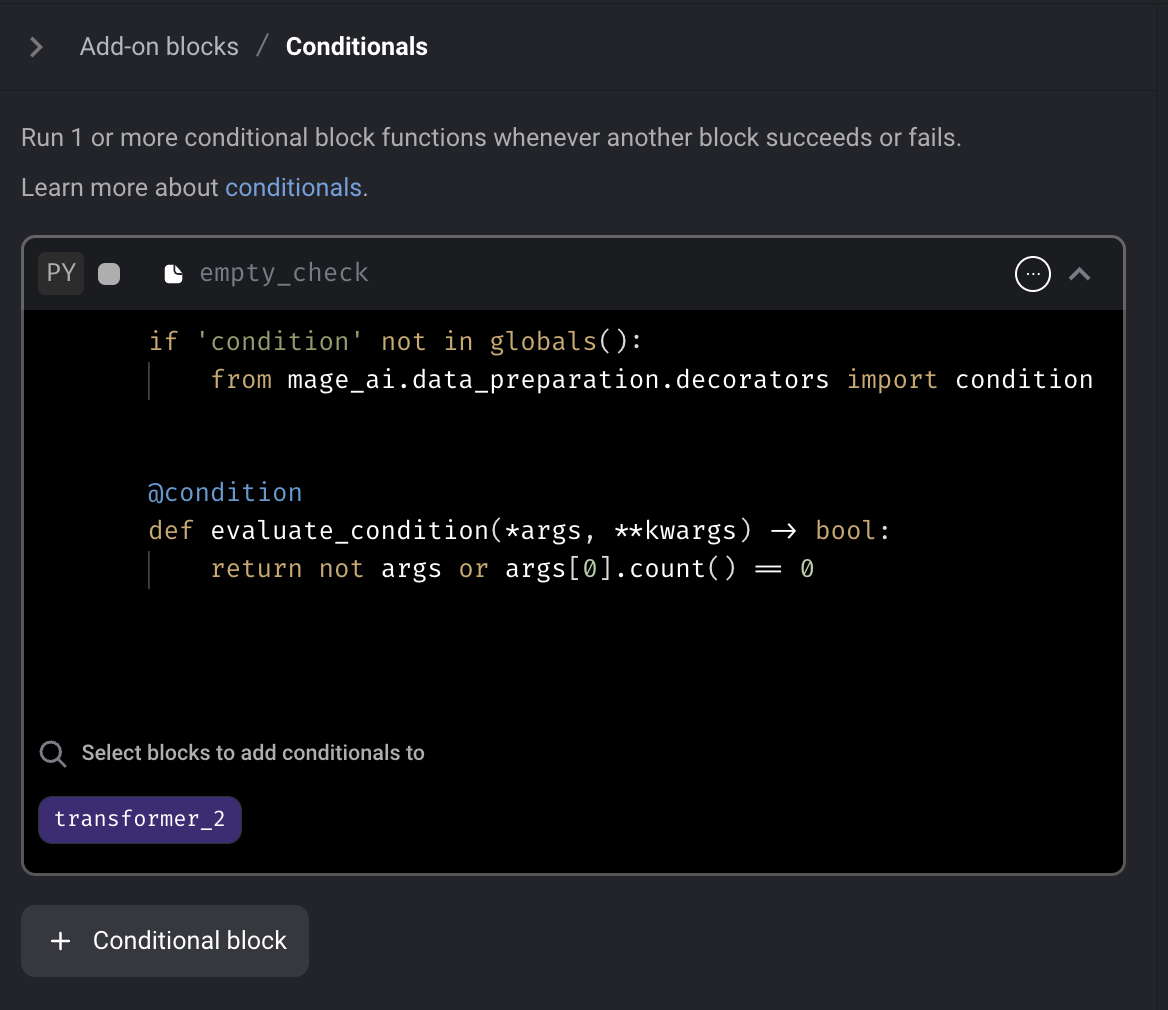

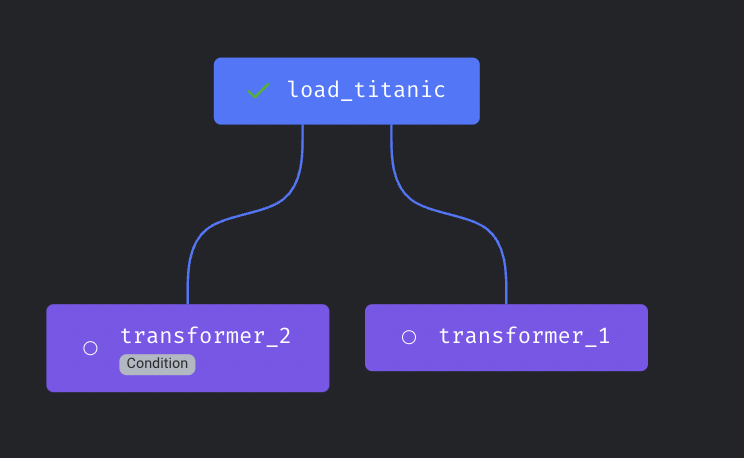

Conditional block

Add conditional block to Mage. The conditional block is an "Add-on" block that can be added to an existing block within a pipeline. If the conditional block evaluates as False, the parent block will not be executed.

Doc: https://docs.mage.ai/development/blocks/conditionals/overview
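
The skip behavior described above can be sketched in plain Python. This is only an illustration of the semantics, not Mage's implementation: `run_block`, `load_data`, and `never_run` are hypothetical names, and a real conditional block is defined inside the Mage pipeline editor.

```python
from typing import Callable, Optional


def run_block(
    block: Callable[[], str],
    conditional: Optional[Callable[[], bool]] = None,
) -> Optional[str]:
    """Run `block` unless an attached conditional evaluates to False."""
    if conditional is not None and not conditional():
        # The conditional evaluated to False, so the parent block is skipped.
        return None
    return block()


def load_data() -> str:
    # Stand-in for the parent block's work (hypothetical).
    return "loaded"


def never_run() -> bool:
    # Stand-in for a conditional block that evaluates to False (hypothetical).
    return False


skipped = run_block(load_data, conditional=never_run)
executed = run_block(load_data, conditional=lambda: True)
```

Here `skipped` is `None` because the conditional returned False, while `executed` holds the parent block's output.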

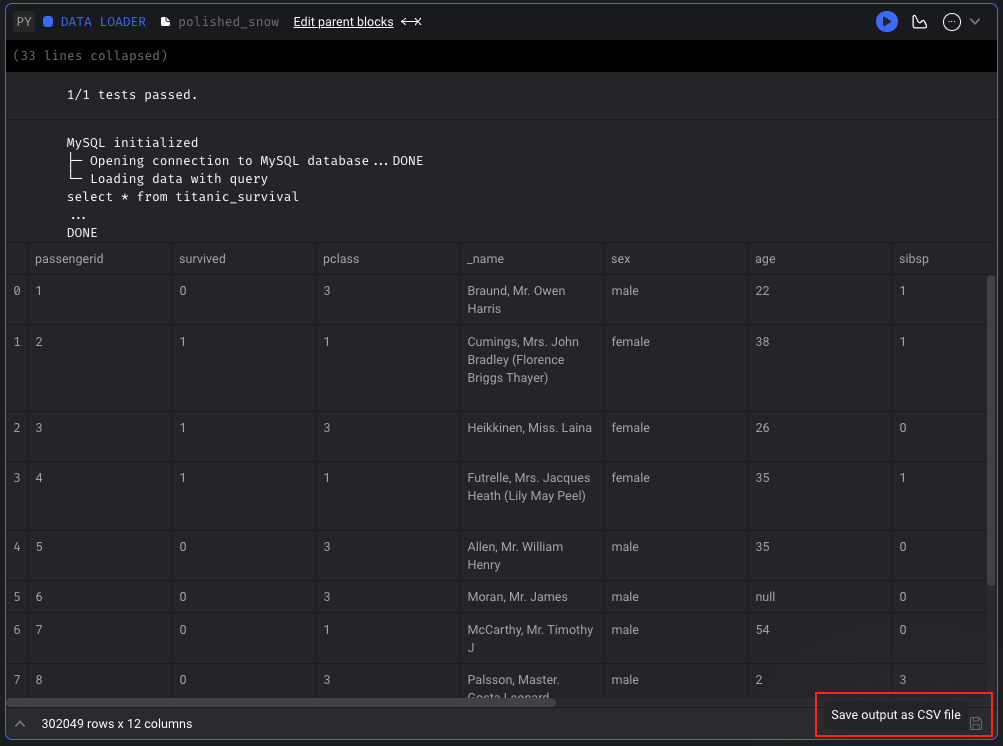

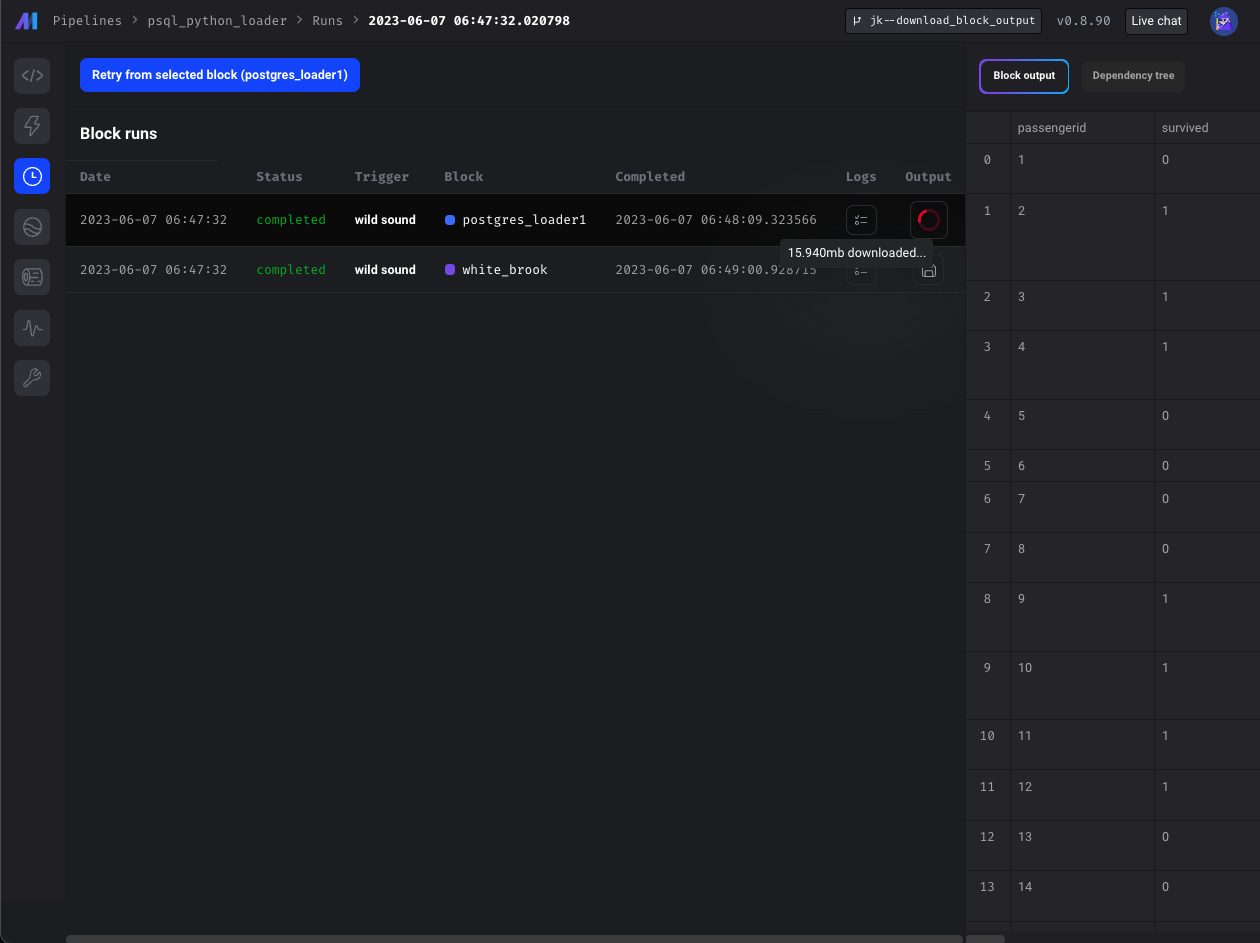

Download block output

For standard pipelines (not currently supported in integration or streaming pipelines), you can save the output of a block that has been run as a CSV file. You can save the block output from the Pipeline Editor page or the Block Runs page.

Doc: https://docs.mage.ai/orchestration/pipeline-runs/saving-block-output-as-csv

Customize Pipeline level spark config

Mage supports customizing the Spark session for a pipeline by specifying spark_config in the pipeline's metadata.yaml file. If specified, the pipeline-level spark_config overrides the project-level spark_config.

Doc: https://docs.mage.ai/integrations/spark-pyspark#custom-spark-session-at-the-pipeline-level

Data integration pipeline

Oracle DB source

Download file data in the API source

Doc: https://github.com/mage-ai/mage-ai/tree/master/mage_integrations/mage_integrations/sources/api

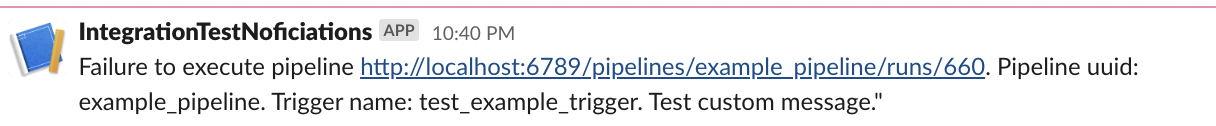

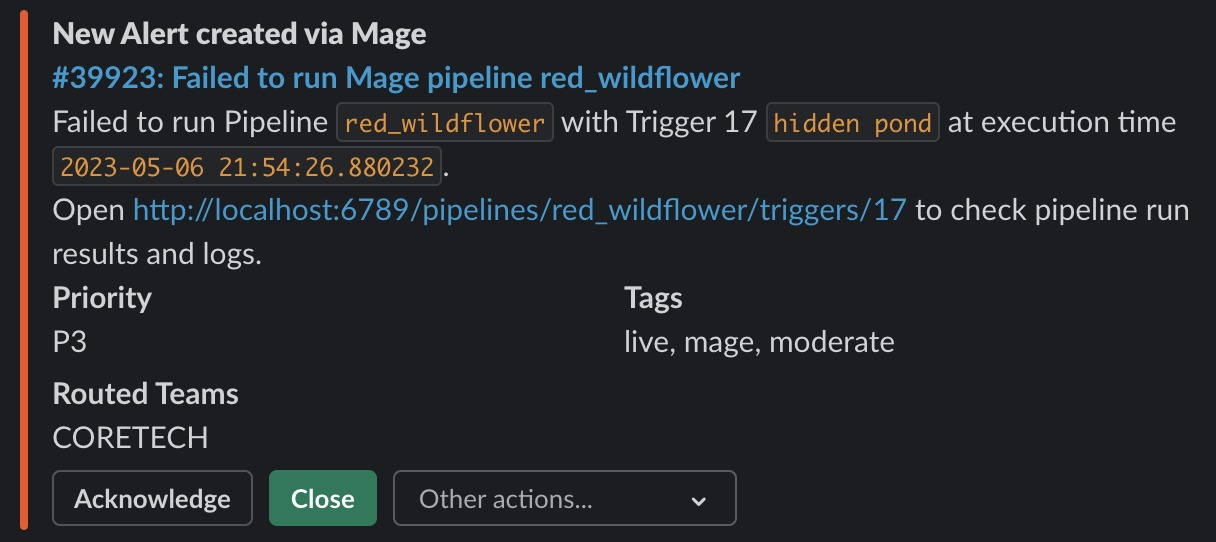

Personalize notification messages

Users can customize the notification templates of different channels (Slack, email, etc.) in the project's metadata.yaml. Here are the supported variables that can be interpolated in the message templates: execution_time, pipeline_run_url, pipeline_schedule_id, pipeline_schedule_name, pipeline_uuid

Example config in project's metadata.yaml:

    notification_config:
      slack_config:
        webhook_url: "{{ env_var('MAGE_SLACK_WEBHOOK_URL') }}"
      message_templates:
        failure:
          details: >
            Failure to execute pipeline {pipeline_run_url}.
            Pipeline uuid: {pipeline_uuid}. Trigger name: {pipeline_schedule_name}.
            Test custom message.

Doc: https://docs.mage.ai/production/observability/alerting-slack#customize-message-templates
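
To make the interpolation concrete, here is a minimal sketch of how a template with the supported variables could be rendered. It assumes plain `str.format`-style substitution; `render_template` and the sample values (including the URL) are hypothetical and not taken from Mage's code.

```python
from string import Formatter

# Variable names listed in the release note above.
SUPPORTED_VARIABLES = {
    "execution_time",
    "pipeline_run_url",
    "pipeline_schedule_id",
    "pipeline_schedule_name",
    "pipeline_uuid",
}


def render_template(template: str, **values: object) -> str:
    """Render a message template, rejecting variables that are not supported."""
    used = {name for _, name, _, _ in Formatter().parse(template) if name}
    unknown = used - SUPPORTED_VARIABLES
    if unknown:
        raise ValueError(f"Unsupported template variables: {sorted(unknown)}")
    return template.format(**values)


template = (
    "Failure to execute pipeline {pipeline_run_url}. "
    "Pipeline uuid: {pipeline_uuid}. Trigger name: {pipeline_schedule_name}."
)
message = render_template(
    template,
    pipeline_run_url="http://localhost:6789/pipelines/example_pipeline/runs/1",
    pipeline_uuid="example_pipeline",
    pipeline_schedule_name="daily_trigger",
)
```

Validating the variable names up front surfaces a typo in a template as a clear error rather than a `KeyError` at send time.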

Support MSSQL and MySQL as the database engine

Mage stores orchestration data, user data, and secrets data in a database. In addition to SQLite and Postgres, Mage supports using MSSQL and MySQL as the database engine now.

MSSQL docs:

- https://docs.mage.ai/production/databases/default#mssql

- https://docs.mage.ai/getting-started/setup#using-mssql-as-database

MySQL docs:

- https://docs.mage.ai/production/databases/default#mysql

- https://docs.mage.ai/getting-started/setup#using-mysql-as-database

Add MinIO and Wasabi support via S3 data loader block

Mage supports connecting to MinIO and Wasabi by specifying the AWS_ENDPOINT field in S3 config now.

Doc: https://docs.mage.ai/integrations/databases/S3#minio-support

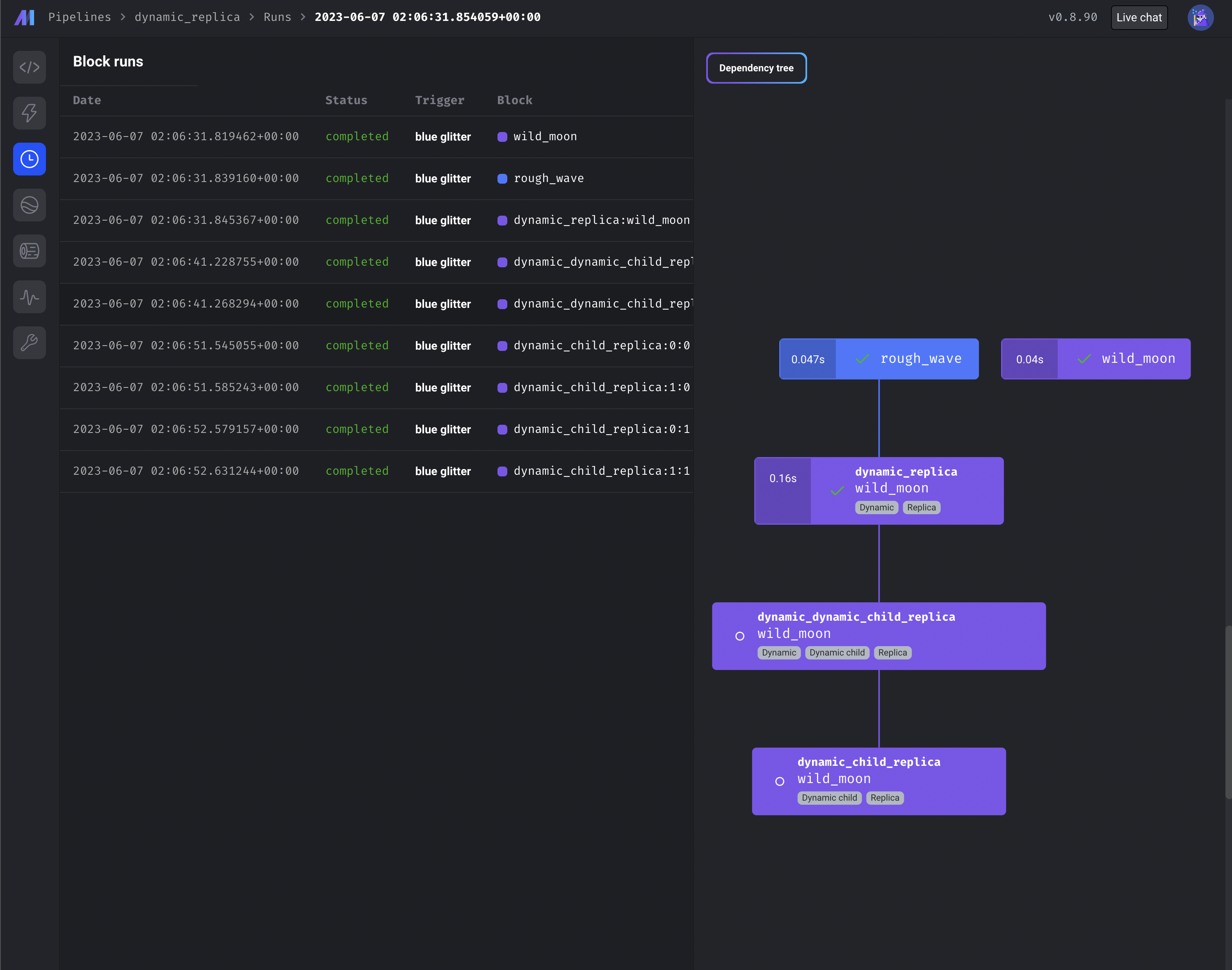

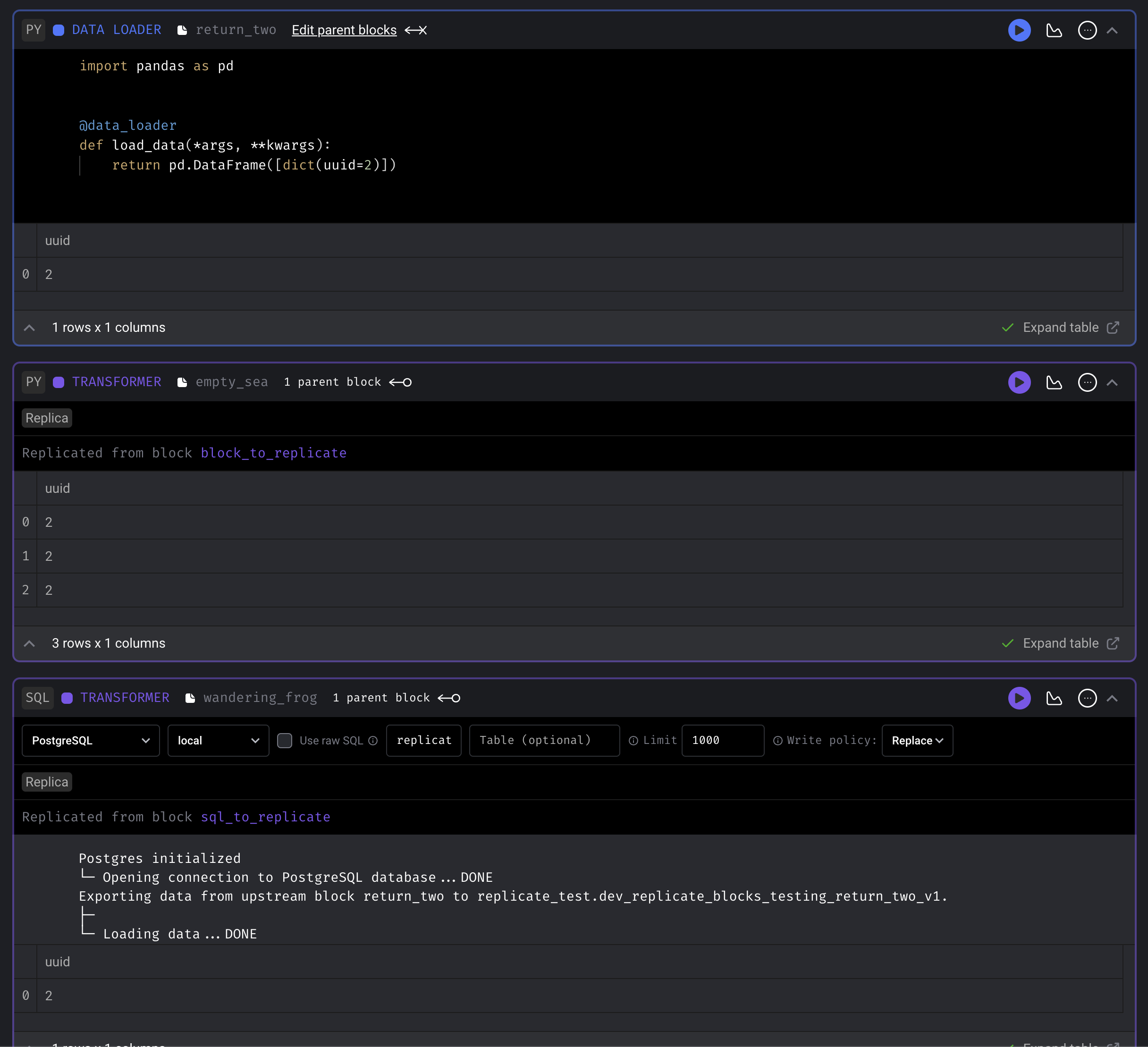

Use dynamic blocks with replica blocks

To maximize block reuse, you can use dynamic and replica blocks in combination.

- https://docs.mage.ai/design/blocks/dynamic-blocks

- https://docs.mage.ai/design/blocks/replicate-blocks

Other bug fixes & polish

- The command CREATE SCHEMA IF NOT EXISTS is not supported by MSSQL. Provided a default command in BaseSQL -> build_create_schema_command, and an overridden implementation in MSSQL -> build_create_schema_command containing compatible syntax. (Kudos to gjvanvuuren)

- Fix streaming pipeline kwargs passing so that RabbitMQ messages can be acknowledged correctly.

- Interpolate variables in streaming configs.

- Git integration: Create known hosts if it doesn't exist.

- Do not create duplicate triggers when DB query fails on checking existing triggers.

- Fix bug: when there are multiple downstream replica blocks, those blocks are not getting queued.

- Fix block uuid formatting for logs.

- Update WidgetPolicy to allow editing and creating widgets without authorization errors.

- Update sensor block to accept positional arguments.

- Fix variables for GCP Cloud Run executor.

- Fix MERGE command for Snowflake destination.

- Fix encoding issue of file upload.

- Always delete the temporary DBT profiles dir to prevent file browser performance degradation.

0.8.86

11 months ago

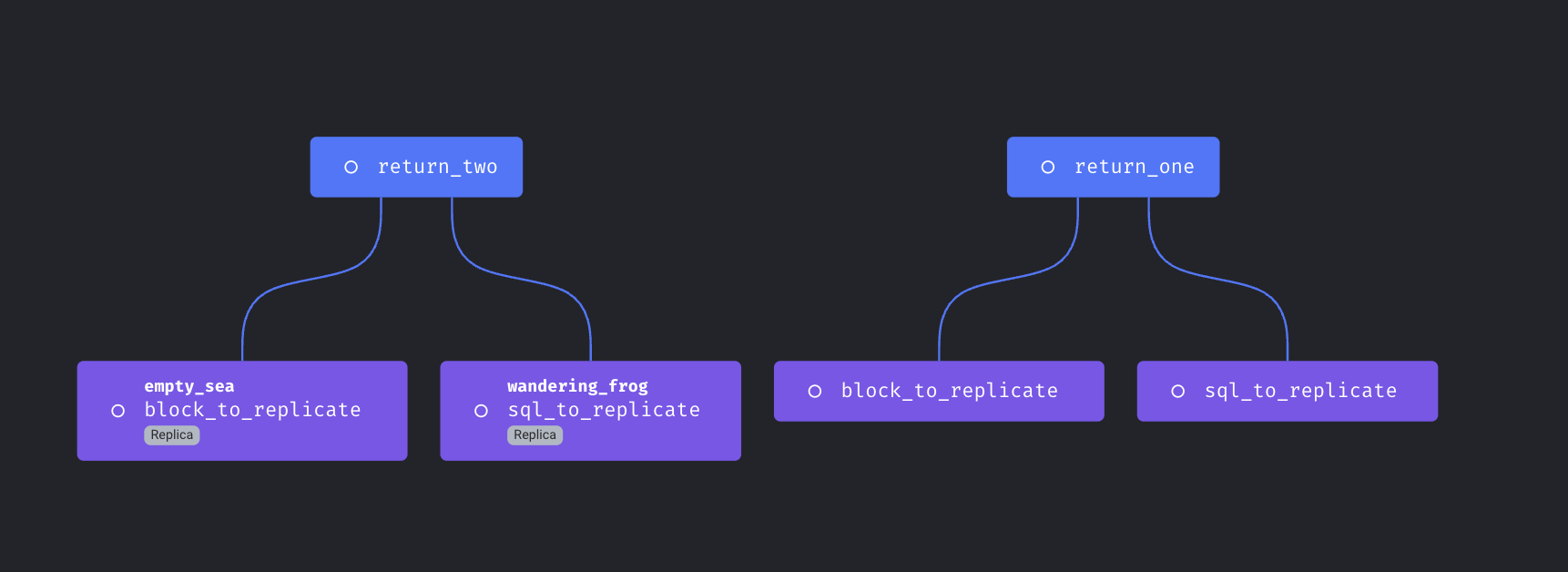

Replicate blocks

Support reusing the same block multiple times in a single pipeline.

Doc: https://docs.mage.ai/design/blocks/replicate-blocks

Spark on Yarn

Support running Spark code on Yarn cluster with Mage.

Doc: https://docs.mage.ai/integrations/spark-pyspark#hadoop-and-yarn-cluster-for-spark

Customize retry config

Mage supports configuring automatic retry for block runs in the following ways:

- Add retry_config to the project’s metadata.yaml. This retry_config will be applied to all block runs.

- Add retry_config to the block config in the pipeline’s metadata.yaml. The block-level retry_config will override the global retry_config.

Example config:

    retry_config:
      # Number of retry times
      retries: 0
      # Initial delay before retry. If exponential_backoff is true,
      # the delay time is multiplied by 2 for the next retry
      delay: 5
      # Maximum time between the first attempt and the last retry
      max_delay: 60
      # Whether to use exponential backoff retry
      exponential_backoff: true

Doc: https://docs.mage.ai/orchestration/pipeline-runs/retrying-block-runs#automatic-retry
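
As a rough illustration of how these four fields interact, the sketch below computes the wait before each retry. It follows one reading of the comments in the example config: the delay doubles after each retry when exponential_backoff is true, and retries stop once the cumulative wait would exceed max_delay. `retry_delays` is a hypothetical helper, and Mage's actual scheduling may differ.

```python
from typing import List


def retry_delays(
    retries: int,
    delay: float,
    max_delay: float,
    exponential_backoff: bool,
) -> List[float]:
    """Return the wait (in seconds) before each retry that would be attempted."""
    delays: List[float] = []
    total = 0.0
    current = float(delay)
    for _ in range(retries):
        # Assumption: max_delay caps the total time spent waiting across retries.
        if total + current > max_delay:
            break
        delays.append(current)
        total += current
        if exponential_backoff:
            current *= 2
    return delays


schedule = retry_delays(retries=5, delay=5, max_delay=60, exponential_backoff=True)
```

With these inputs the waits are 5, 10, and 20 seconds; a fourth retry would need a 40-second wait, which would push the cumulative wait past 60 seconds, so it is dropped under this reading.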

DBT improvements

- When running a DBT block with language YAML, interpolate and merge the user-defined --vars in the block’s code into the variables that Mage automatically constructs.

  - Example block code in different formats:

        --select demo/models --vars '{"demo_key": "demo_value", "date": 20230101}'
        --select demo/models --vars {"demo_key":"demo_value","date":20230101}
        --select demo/models --vars '{"global_var": {{ test_global_var }}, "env_var": {{ test_env_var }}}'
        --select demo/models --vars {"refresh":{{page_refresh}},"env_var":{{env}}}

- Support dbt_project.yml custom project names and custom profile names that are different from the DBT folder name.

- Allow users to configure a block to run DBT snapshot.

Dynamic SQL block

Support using dynamic child blocks for SQL blocks

Doc: https://docs.mage.ai/design/blocks/dynamic-blocks#dynamic-sql-blocks

Run blocks concurrently in separate containers on Azure

If your Mage app is deployed on Microsoft Azure with Mage’s terraform scripts, you can choose to launch separate Azure container instances to execute blocks.

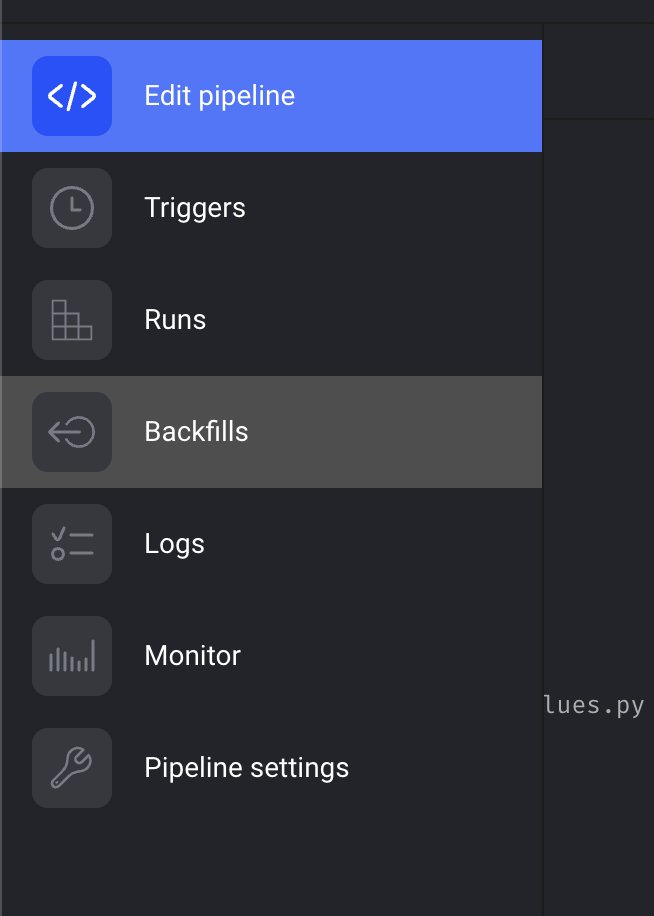

Run the scheduler and the web server in separate containers or pods

- Run scheduler only: mage start project_name --instance-type scheduler

- Run web server only: mage start project_name --instance-type web_server

  - The web server can be run in multiple containers or pods.

- Run both server and scheduler: mage start project_name --instance-type server_and_scheduler

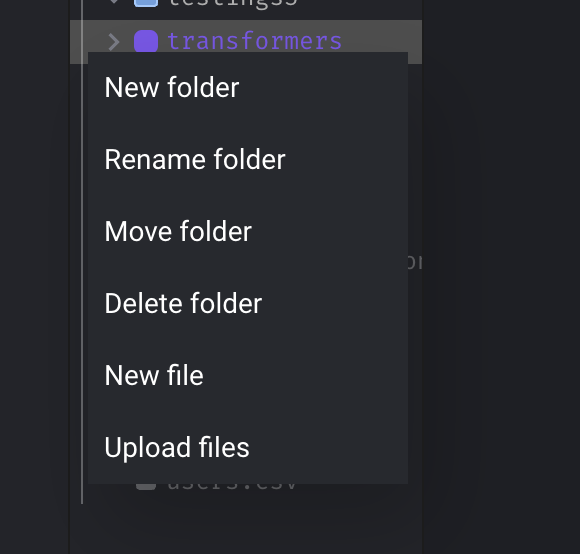

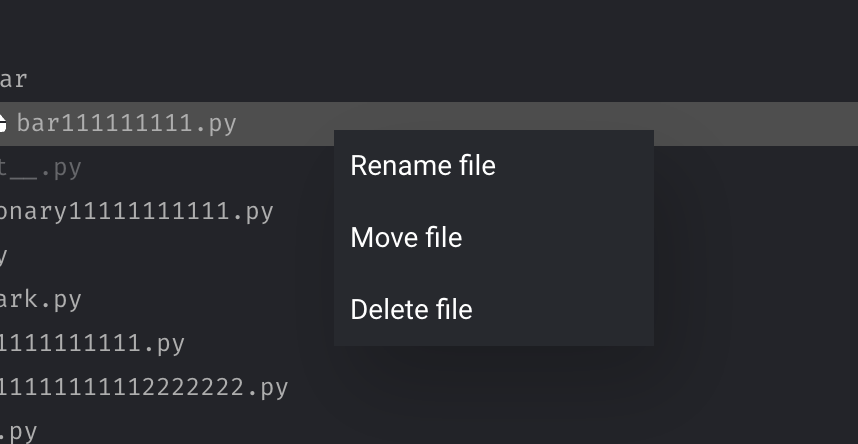

Support all operations on folder

Support “Add”, “Rename”, “Move”, “Delete” operations on folder.

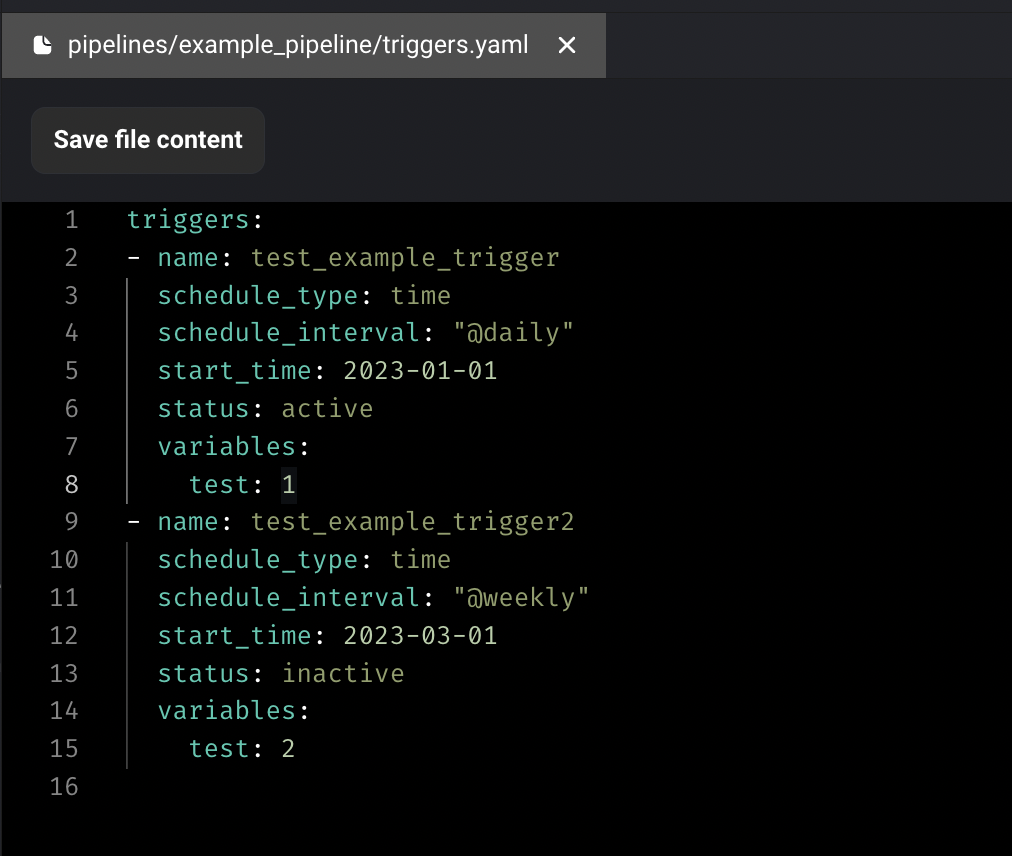

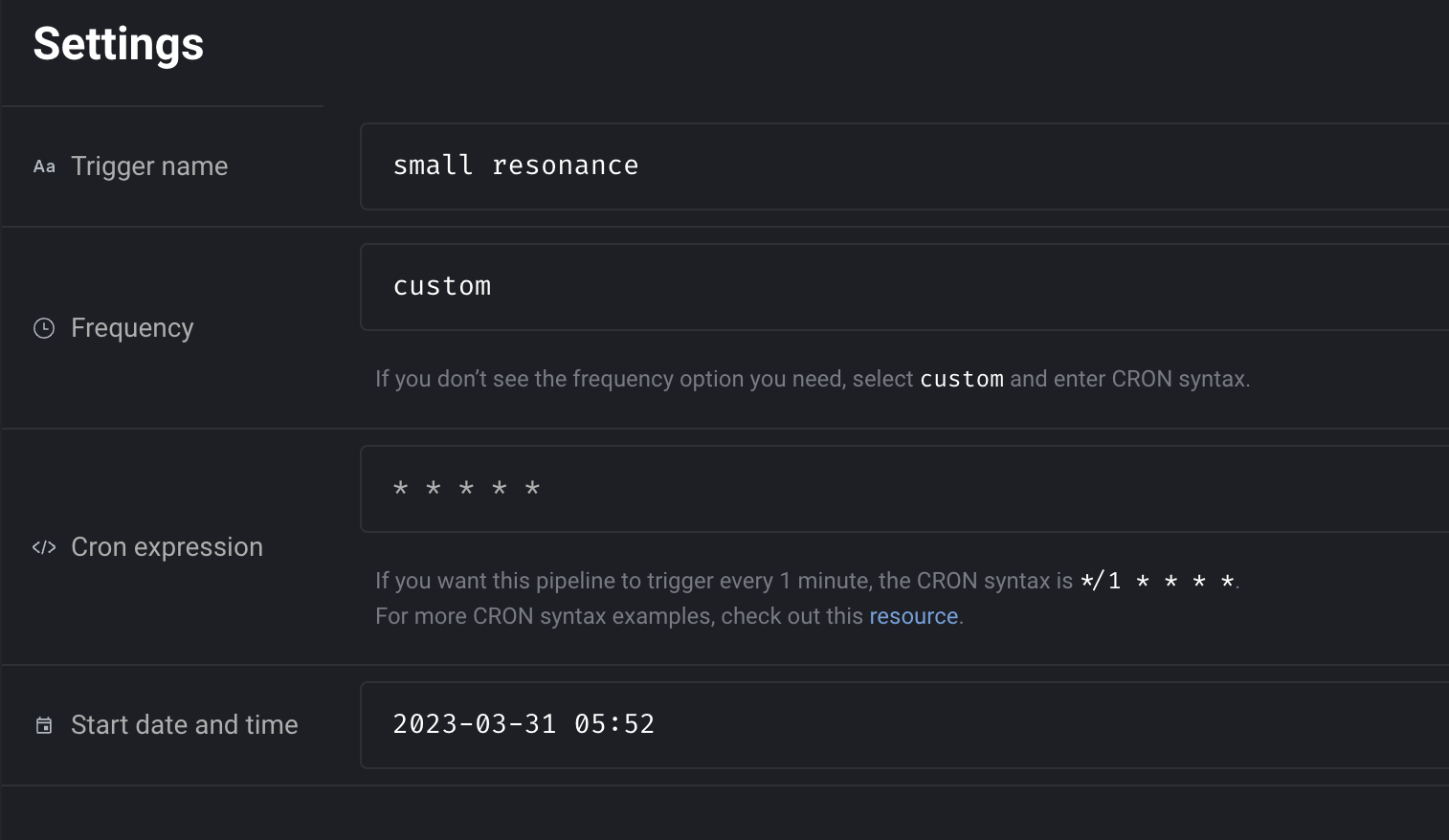

Configure environments for triggers in code

Allow specifying envs value to apply triggers only in certain environments.

Example:

    triggers:
    - name: test_example_trigger_in_prod
      schedule_type: time
      schedule_interval: "@daily"
      start_time: 2023-01-01
      status: active
      envs:
      - prod
    - name: test_example_trigger_in_dev
      schedule_type: time
      schedule_interval: "@hourly"
      start_time: 2023-03-01
      status: inactive
      settings:
        skip_if_previous_running: true
        allow_blocks_to_fail: true
      envs:
      - dev

Doc: https://docs.mage.ai/guides/triggers/configure-triggers-in-code#create-and-configure-triggers
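
The effect of the envs field can be sketched as a simple filter over the trigger list. `triggers_for_env` is a hypothetical helper, and treating a trigger without envs as applying to every environment is an assumption rather than documented behavior.

```python
from typing import Dict, List


def triggers_for_env(triggers: List[Dict], env: str) -> List[Dict]:
    """Keep the triggers that should be applied in `env`."""
    # Assumption: a trigger with no `envs` list applies in every environment.
    return [t for t in triggers if not t.get("envs") or env in t["envs"]]


triggers = [
    {"name": "test_example_trigger_in_prod", "envs": ["prod"]},
    {"name": "test_example_trigger_in_dev", "envs": ["dev"]},
]
prod_names = [t["name"] for t in triggers_for_env(triggers, "prod")]
```

Only `test_example_trigger_in_prod` survives the filter for the `prod` environment.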

Replace current logs table with virtualized table for better UI performance

- Use virtual table to render logs so that loading thousands of rows won't slow down browser performance.

- Fix formatting of logs table rows when a log is selected (the log detail side panel would overly condense the main section, losing the place of which log you clicked).

- Pin logs page header and footer.

- Tested performance using Lighthouse Chrome browser extension, and performance increased 12 points.

Other bug fixes & polish

-

Add indices to schedule models to speed up DB queries.

-

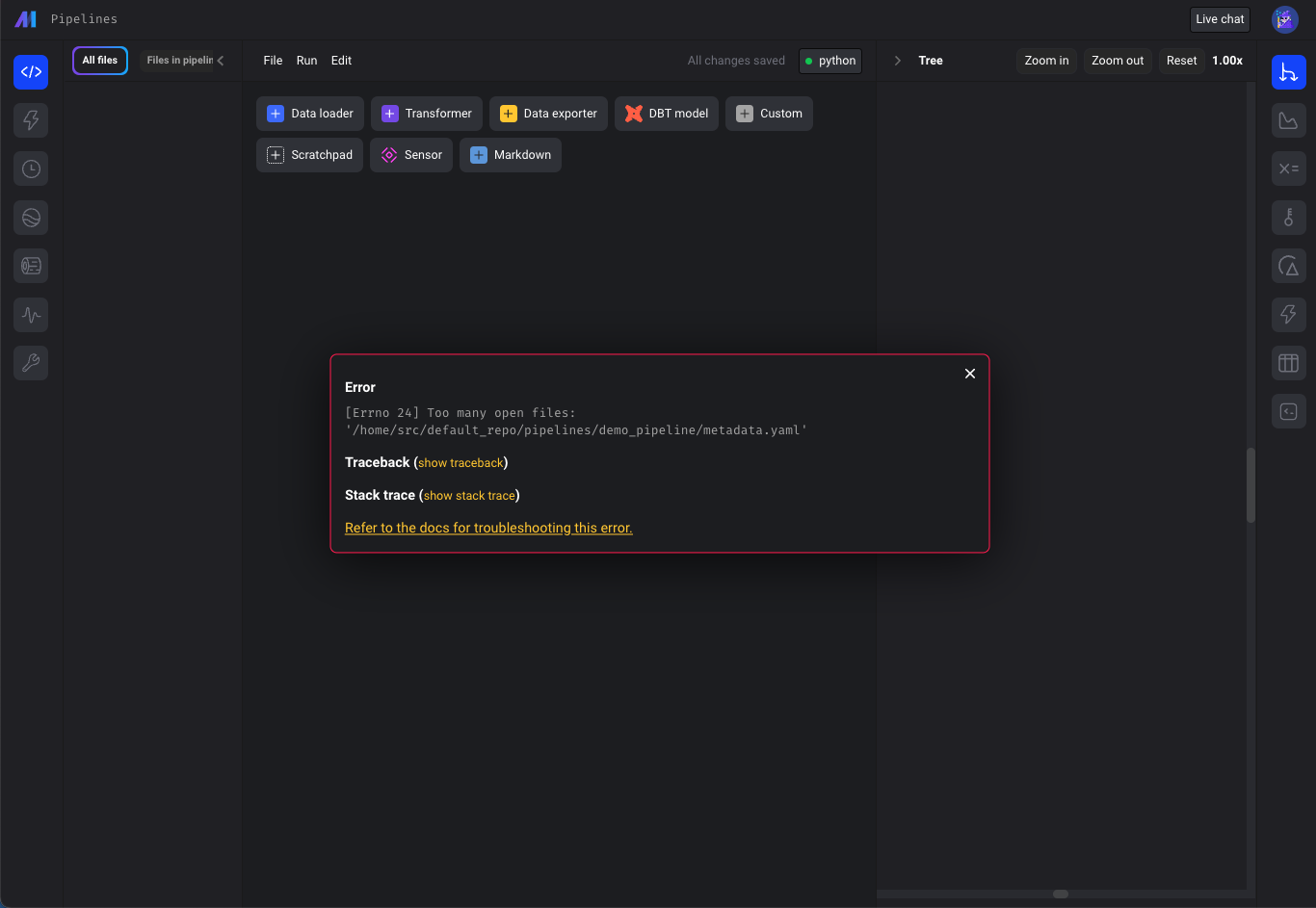

“Too many open files issue”

- Check for "Too many open files" error on all pages calling "displayErrorFromReadResponse" util method (e.g. pipeline edit page), not just Pipelines Dashboard.

- Update terraform scripts to set the

ULIMIT_NO_FILEenvironment variable to increase maximum number of open files in Mage deployed on AWS, GCP and Azure.

-

Fix git_branch resource blocking page loads. The

git clonecommand could cause the entire app to hang if the host wasn't added to known hosts.git clonecommand is updated to run as a separate process with the timeout, so it won't block the entire app if it's stuck. -

Fix bug: when adding a block in between blocks in pipeline with two separate root nodes, the downstream connections are removed.

-

Fix DBT error:

KeyError: 'file_path'. Check forfile_pathbefore callingparse_attributesmethod to avoid KeyError. -

Improve the coding experience when working with Snowflake data provider credentials. Allow more flexibility in Snowflake SQL block queries. Doc: https://docs.mage.ai/integrations/databases/Snowflake#methods-for-configuring-database-and-schema

-

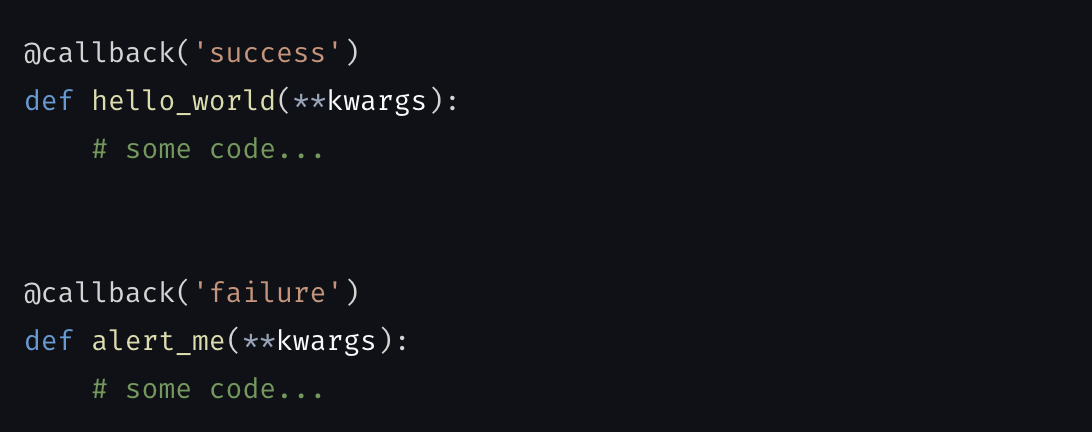

Pass parent block’s output and variables to its callback blocks.

-

Fix missing input field and select field descriptions in charts.

-

Fix bug: Missing values template chart doesn’t render.

-

Convert

numpy.ndarraytolistif column type is list when fetching input variables for blocks. -

Fix runtime and global variables not available in the keyword arguments when executing block with upstream blocks from the edit pipeline page.


0.8.83

11 months ago

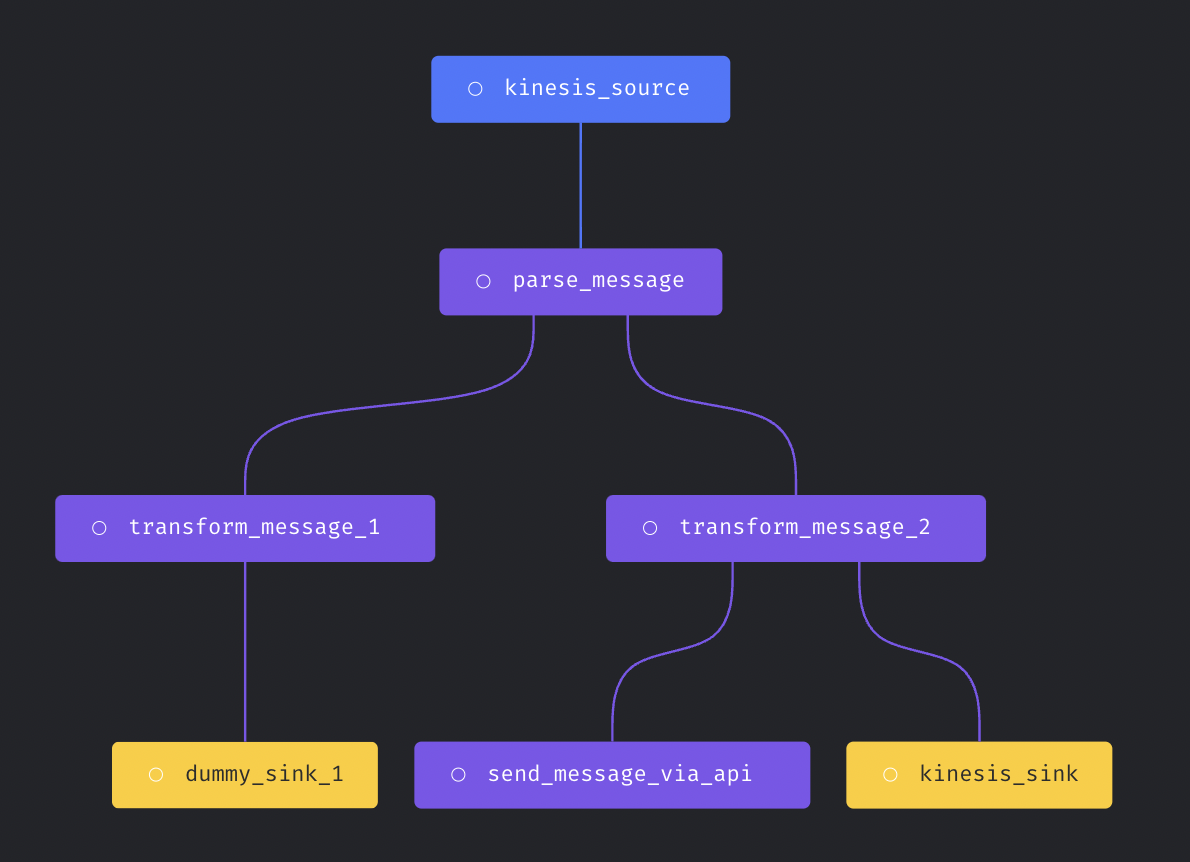

Support more complex streaming pipeline

More complex streaming pipelines are now supported in Mage. You can use more than one transformer and more than one sink in a streaming pipeline.

Here is an example streaming pipeline with multiple transformers and sinks.

Doc for streaming pipeline: https://docs.mage.ai/guides/streaming/overview

Custom Spark configuration

Allow using custom Spark configuration to create Spark session used in the pipeline.

    spark_config:
      # Application name
      app_name: 'my spark app'
      # Master URL to connect to
      # e.g., spark_master: 'spark://host:port', or spark_master: 'yarn'
      spark_master: 'local'
      # Executor environment variables
      # e.g., executor_env: {'PYTHONPATH': '/home/path'}
      executor_env: {}
      # Jar files to be uploaded to the cluster and added to the classpath
      # e.g., spark_jars: ['/home/path/example1.jar']
      spark_jars: []
      # Path where Spark is installed on worker nodes,
      # e.g. spark_home: '/usr/lib/spark'
      spark_home: null
      # List of key-value pairs to be set in SparkConf
      # e.g., others: {'spark.executor.memory': '4g', 'spark.executor.cores': '2'}
      others: {}

Doc for running PySpark pipeline: https://docs.mage.ai/integrations/spark-pyspark#standalone-spark-cluster
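
One way to picture what this config expresses is to flatten it into standard Spark property pairs. The sketch below is an assumption about how the fields could map onto Spark property names (spark.app.name, spark.master, spark.executorEnv.*, spark.jars, spark.home); `spark_conf_pairs` is a hypothetical helper, not the code Mage uses to build the session.

```python
from typing import Any, Dict, List, Tuple


def spark_conf_pairs(spark_config: Dict[str, Any]) -> List[Tuple[str, str]]:
    """Flatten a spark_config mapping into (property, value) pairs."""
    pairs: List[Tuple[str, str]] = []
    if spark_config.get("app_name"):
        pairs.append(("spark.app.name", spark_config["app_name"]))
    if spark_config.get("spark_master"):
        pairs.append(("spark.master", spark_config["spark_master"]))
    # Each executor environment variable becomes its own property.
    for name, value in (spark_config.get("executor_env") or {}).items():
        pairs.append((f"spark.executorEnv.{name}", str(value)))
    jars = spark_config.get("spark_jars") or []
    if jars:
        pairs.append(("spark.jars", ",".join(jars)))
    if spark_config.get("spark_home"):
        pairs.append(("spark.home", spark_config["spark_home"]))
    # Free-form SparkConf entries are passed through unchanged.
    for key, value in (spark_config.get("others") or {}).items():
        pairs.append((key, str(value)))
    return pairs


example_pairs = spark_conf_pairs(
    {
        "app_name": "my spark app",
        "spark_master": "local",
        "executor_env": {"PYTHONPATH": "/home/path"},
        "spark_jars": ["/home/path/example1.jar"],
        "spark_home": None,
        "others": {"spark.executor.memory": "4g"},
    }
)
```

With PySpark installed, pairs like these could be handed to `SparkConf().setAll(...)` before creating a session.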

Data integration pipeline

DynamoDB source

New data integration source DynamoDB is added.

Bug fixes

- Use timestamptz as data type for datetime column in Postgres destination.

- Fix BigQuery batch load error.

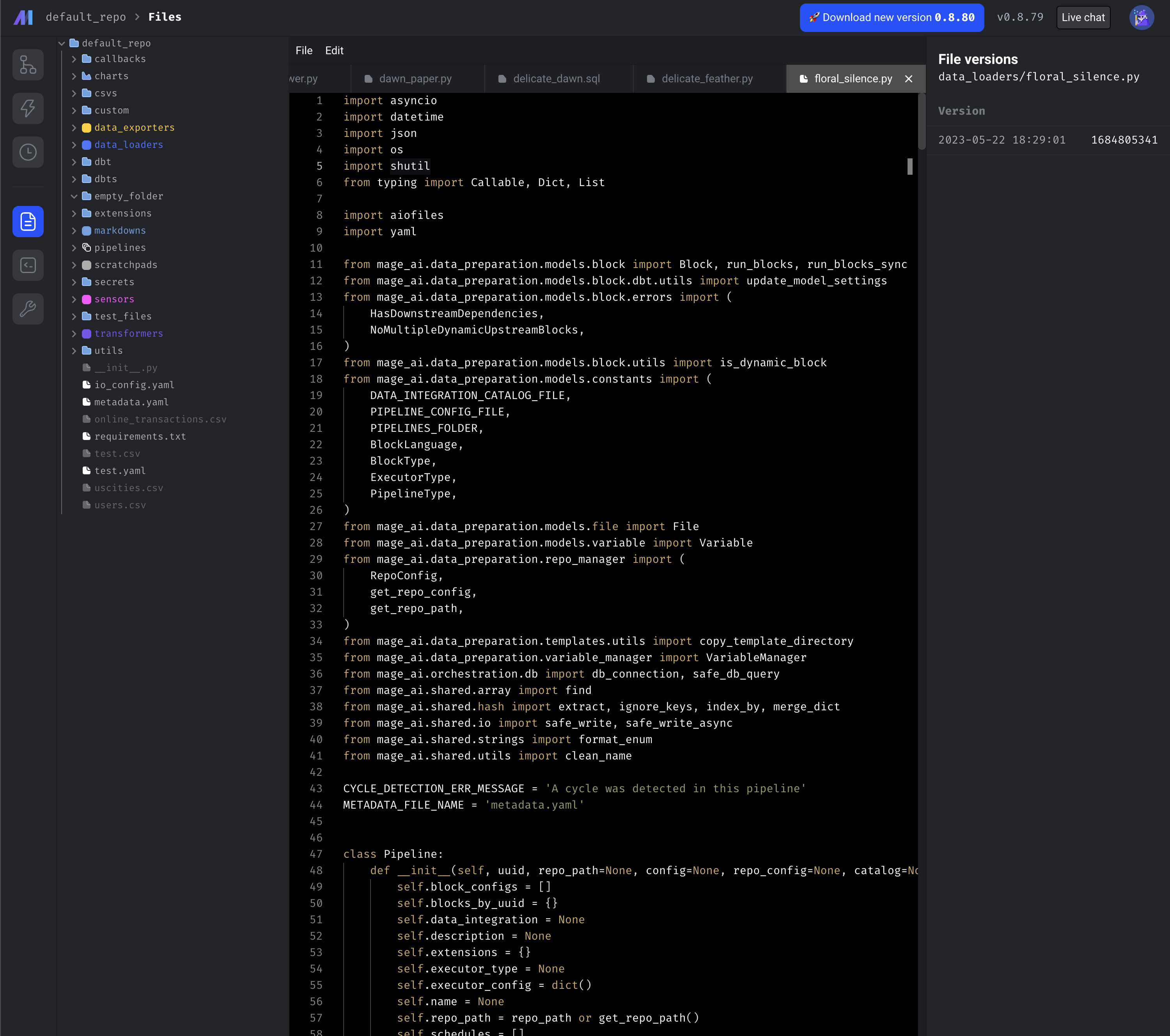

Show file browser outside edit pipeline

Improved Mage's file editor so that users can edit files without going into a pipeline.

Add all file operations

Speed up writing block output to disk

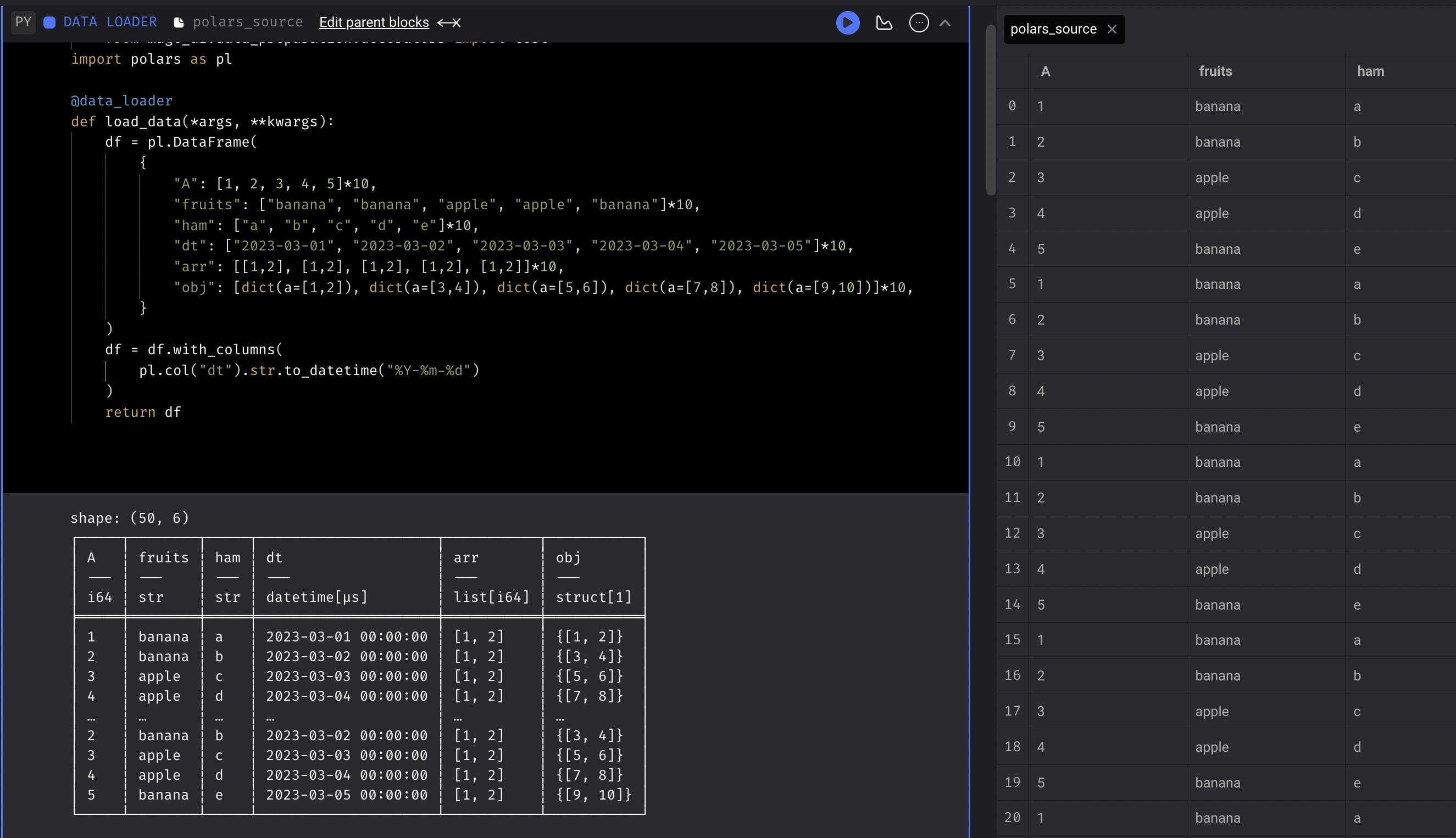

Mage uses Polars to speed up writing block output (DataFrame) to disk, reducing the time of fetching and writing a DataFrame with 2 million rows from 90s to 15s.

Add default .gitignore

Mage automatically adds the default .gitignore file when initializing a project:

    .DS_Store
    .file_versions
    .gitkeep
    .log
    .logs/
    .preferences.yaml
    .variables/
    __pycache__/
    docker-compose.override.yml
    logs/
    mage-ai.db
    mage_data/
    secrets/

Other bug fixes & polish

- Include trigger URL in slack alert.

- Fix race conditions for multiple runs within one second

- If DBT block is language YAML, hide the option to add upstream dbt refs

- Include event_variables in individual pipeline run retry

- Callback block

  - Include parent block uuid in callback block kwargs

  - Pass parent block’s output and variables to its callback blocks

- Delete GCP cloud run job after it's completed.

- Limit the code block output from print statements to avoid sending excessively large payload request bodies when saving the pipeline.

- Lock typing extension version to fix error TypeError: Instance and class checks can only be used with @runtime protocols.

- Fix git sync and also update how we save git settings for users in the backend.

- Fix MySQL ssh tunnel: close ssh tunnel connection after testing connection.


0.8.78

11 months ago

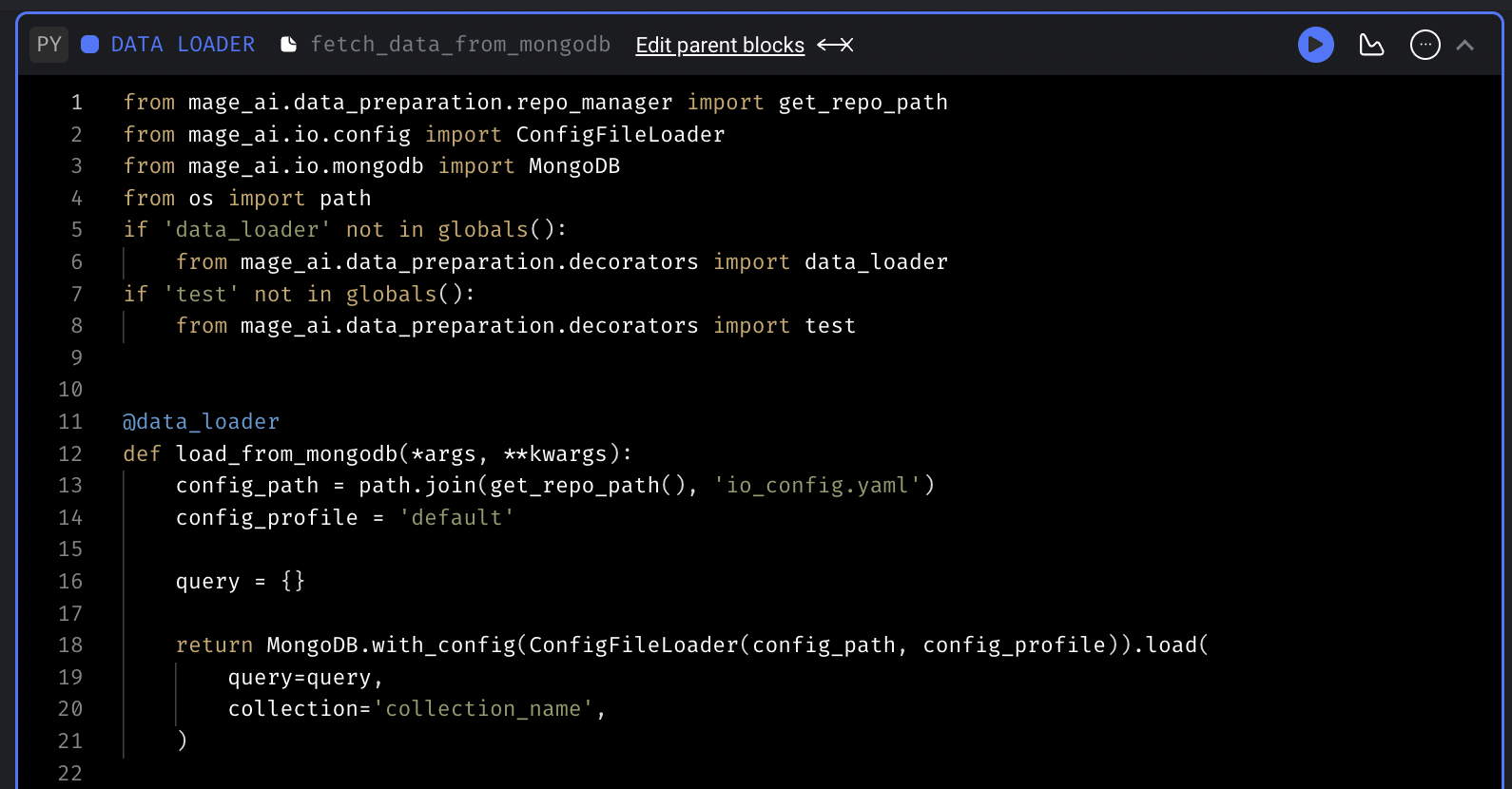

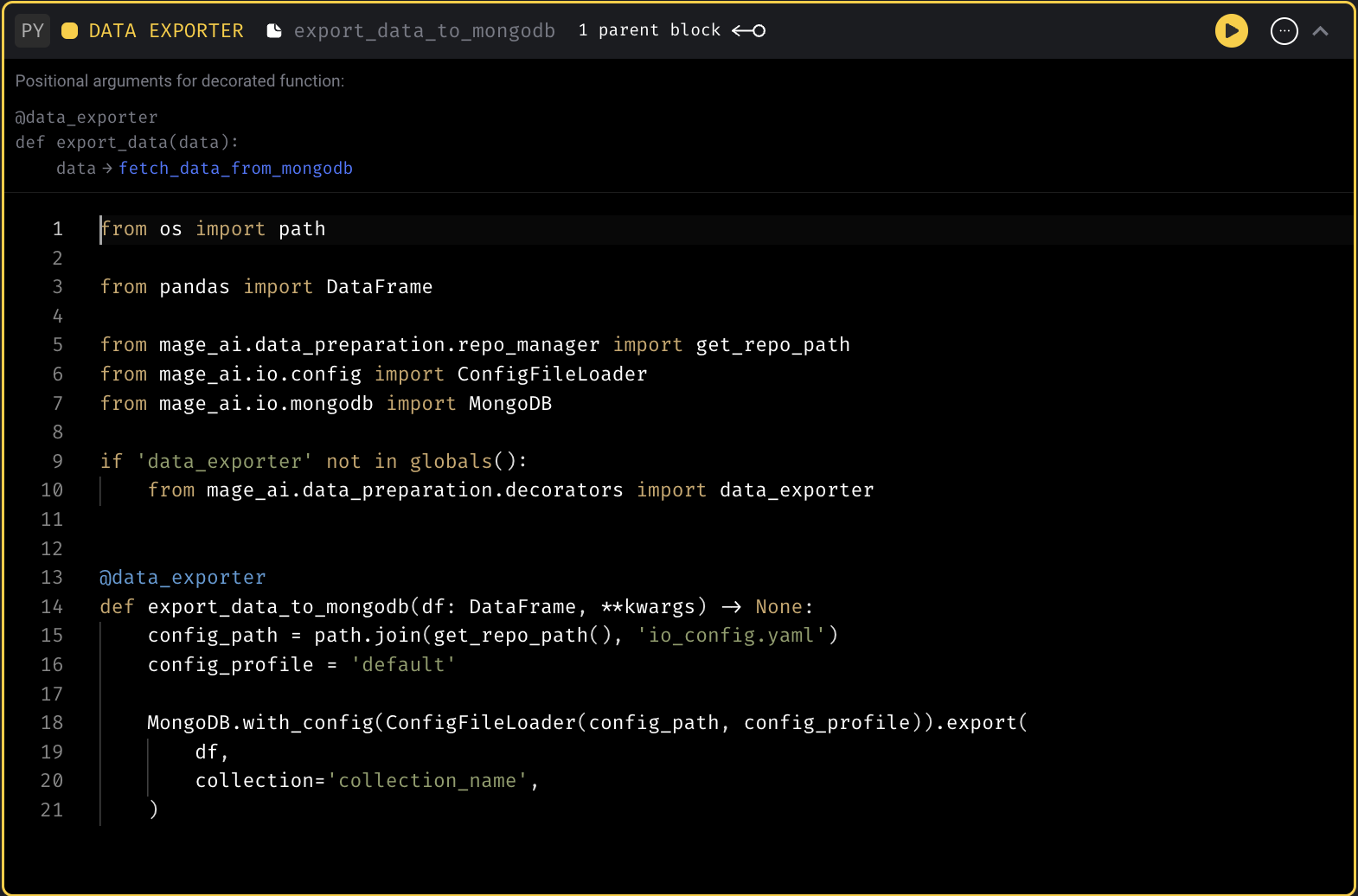

MongoDB code templates

Add code templates to fetch data from and export data to MongoDB.

Example MongoDB config in io_config.yaml:

    version: 0.1.1
    default:
      MONGODB_DATABASE: database
      MONGODB_HOST: host
      MONGODB_PASSWORD: password
      MONGODB_PORT: 27017
      MONGODB_COLLECTION: collection
      MONGODB_USER: user
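
For orientation, these fields carry everything needed for a standard MongoDB connection string. The sketch below assembles one from the `default` profile; `mongodb_uri` is a hypothetical helper, and whether Mage's templates build the connection this way is an assumption.

```python
from urllib.parse import quote_plus


def mongodb_uri(config: dict) -> str:
    """Build a mongodb:// connection string from io_config-style fields."""
    # Percent-encode credentials so characters such as '@' or '/' stay valid.
    user = quote_plus(str(config["MONGODB_USER"]))
    password = quote_plus(str(config["MONGODB_PASSWORD"]))
    host = config["MONGODB_HOST"]
    port = config["MONGODB_PORT"]
    database = config["MONGODB_DATABASE"]
    return f"mongodb://{user}:{password}@{host}:{port}/{database}"


uri = mongodb_uri(
    {
        "MONGODB_DATABASE": "database",
        "MONGODB_HOST": "host",
        "MONGODB_PASSWORD": "p@ss/word",
        "MONGODB_PORT": 27017,
        "MONGODB_USER": "user",
    }
)
```

The collection is not part of the URI; it would be selected on the client after connecting.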

Data loader template

Data exporter template

Support using renv for R block

renv is installed in the Mage Docker image by default. Users can use the renv package to manage R dependencies for their projects.

Doc for renv package: https://cran.r-project.org/web/packages/renv/vignettes/renv.html

Run streaming pipeline in separate k8s pod

Support running streaming pipeline in k8s executor to scale up streaming pipeline execution.

It can be configured in pipeline metadata.yaml with executor_type field. Here is an example:

    blocks:
    - ...
    - ...
    executor_count: 1
    executor_type: k8s
    name: test_streaming_kafka_kafka
    uuid: test_streaming_kafka_kafka

When cancelling the pipeline run in Mage UI, Mage will kill the k8s job.

DBT support for Spark

Support running Spark DBT models in Mage. Currently, only the connection method session is supported.

Follow this doc to set up Spark environment in Mage. Follow the instructions in https://docs.mage.ai/tutorials/setup-dbt to set up the DBT. Here is an example DBT Spark profiles.yml:

    spark_demo:
      target: dev
      outputs:
        dev:
          type: spark
          method: session
          schema: default
          host: local

Doc for staging/production deployment

- Add doc for setting up the CI/CD pipeline to deploy Mage to staging and production environments: https://docs.mage.ai/production/ci-cd/staging-production/github-actions

- Provide example Github Action template for deployment on AWS ECS: https://github.com/mage-ai/mage-ai/blob/master/templates/github_actions/build_and_deploy_to_aws_ecs_staging_production.yml

Enable user authentication for multi-development environment

Update the multi-development environment to go through the user authentication flow. Multi-development environment is used to manage development instances on cloud.

Doc for multi-development environment: https://docs.mage.ai/developing-in-the-cloud/cloud-dev-environments/overview

Refined dependency graph

- Add buttons for zooming in/out of and resetting dependency graph.

- Add shortcut to reset dependency graph view (double-clicking anywhere on the canvas).

Add new block with downstream blocks connected

- If a new block is added between two blocks, include the downstream connection.

- If a new block is added after a block that has multiple downstream blocks, the downstream connections will not be added since it is unclear which downstream connections should be made.

- Hide Add transformer block button in integration pipelines if a transformer block already exists (Mage currently only supports 1 transformer block for integration pipelines).
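
The connection rules in the first two bullets can be sketched over a simple adjacency map. `add_block_after` and the block names are hypothetical; this illustrates the described behavior and is not the editor's actual code.

```python
from typing import Dict, List


def add_block_after(downstream: Dict[str, List[str]], parent: str, new_block: str) -> None:
    """Insert `new_block` after `parent` in a block -> downstream-blocks map."""
    children = downstream.get(parent, [])
    if len(children) == 1:
        # Unambiguous: splice the new block between the parent and its only child.
        downstream[new_block] = [children[0]]
        downstream[parent] = [new_block]
    else:
        # Zero or several children: connect parent -> new block only, since it
        # is unclear which downstream connections should be made.
        downstream[new_block] = []
        downstream[parent] = children + [new_block]


linear = {"load": ["export"], "export": []}
add_block_after(linear, "load", "transform")

branching = {"load": ["export_a", "export_b"], "export_a": [], "export_b": []}
add_block_after(branching, "load", "transform")
```

In the linear case the new block ends up between `load` and `export`; in the branching case it is attached to `load` with no downstream connections.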

Improve UI performance

- Reduce number of API requests made when refocusing browser.

- Decrease notebook CPU and memory consumption in the browser by removing unnecessary code block re-renders in Pipeline Editor.

Add pre-commit and improve contributor friendliness

Shout out to Joseph Corrado for his contribution of adding pre-commit hooks to Mage to run code checks before committing and pushing the code.

Doc: https://github.com/mage-ai/mage-ai/blob/master/README_dev.md

Create method for deleting secret keys

Shout out to hjhdaniel for his contribution of adding the method for deleting secret keys to Mage.

Example code:

from mage_ai.data_preparation.shared.secrets import delete_secret

delete_secret('secret_name')

Retry block

Retry from selected block in integration pipeline

If a block is selected in an integration pipeline to retry block runs, only the block runs for the selected block's stream will be run.

Automatic retry for blocks

Mage now automatically retries blocks twice on failures (3 total attempts).

Other bug fixes & polish

- Display error popup with link to docs for “too many open files” error.

- Fix DBT block limit input field: the limit entered through the UI wasn’t taking effect when previewing the model results. Fix this and set a default limit of 1000.

- Fix BigQuery table id issue for batch load.

- Fix unique conflict handling for BigQuery batch load.

- Remove startup_probe in GCP cloud run executor.

- Fix run command for AWS and GCP job runs so that job run logs can be shown in Mage UI correctly.

- Pass block configuration to kwargs in the method.

- Fix SQL block execution when using different schemas between upstream block and current block.


0.8.75

1 year ago

Polars integration

Support using Polars DataFrame in Mage blocks.

Opsgenie integration

Shout out to Sergio Santiago for his contribution of integrating Opsgenie as an alerting option in Mage.

Doc: https://docs.mage.ai/production/observability/alerting-opsgenie

Data integration

Speed up exporting data to BigQuery destination

Add support for using batch load jobs instead of the query API in BigQuery destination. You can enable it by setting use_batch_load to true in BigQuery destination config.

When loading ~150MB data to BigQuery, using batch loading reduces the time from 1 hour to around 2 minutes (30x the speed).

Microsoft SQL Server destination improvements

- Support ALTER command to add new columns

- Support MERGE command with multiple unique columns (use AND to connect the columns)

- Add MSSQL config fields to io_config.yaml

- Support multiple keys in MSSQL destination

Other improvements

- Fix parsing int timestamp in intercom source.

- Remove the “Execute” button from transformer block in data integration pipelines.

- Support using ssh tunnel for MySQL source with private key content

Git integration improvements

- Pass in Git settings through environment variables

- Use git switch to switch branches

- Fix git ssh key generation

- Save cwd as repo path if user leaves the field blank

Update disable notebook edit mode to allow certain operations

Add another value to DISABLE_NOTEBOOK_EDIT_ACCESS environment variable to allow users to create secrets, variables, and run blocks.

The available values are

- 0: this is the same as omitting the variable

- 1: no edit/execute access to the notebook within Mage. Users will not be able to use the notebook to edit pipeline content, execute blocks, create secrets, or create variables.

- 2: no edit access for pipelines. Users will not be able to edit pipeline/block metadata or content.

Doc: https://docs.mage.ai/production/configuring-production-settings/overview#read-only-access
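
The three values can be summarized as a small permission table. The sketch below is one interpretation of the descriptions above; `notebook_permissions` and its keys are hypothetical, and treating unrecognized values like 0 is an assumption.

```python
import os
from typing import Dict


def notebook_permissions(value: str) -> Dict[str, bool]:
    """Map a DISABLE_NOTEBOOK_EDIT_ACCESS value to the operations it allows."""
    if value == "1":
        # No edit or execute access at all.
        return {
            "edit_pipeline": False,
            "execute_blocks": False,
            "create_secrets": False,
            "create_variables": False,
        }
    if value == "2":
        # Pipelines are read-only, but blocks can run and secrets/variables can be created.
        return {
            "edit_pipeline": False,
            "execute_blocks": True,
            "create_secrets": True,
            "create_variables": True,
        }
    # "0", unset, or (by assumption) any other value: full access.
    return {
        "edit_pipeline": True,
        "execute_blocks": True,
        "create_secrets": True,
        "create_variables": True,
    }


permissions = notebook_permissions(os.getenv("DISABLE_NOTEBOOK_EDIT_ACCESS", "0"))
```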

Update Admin abilities

- Allow admins to view and update existing users' usernames/emails/roles/passwords (except for owners and other admins).

- Admins can only view Viewers/Editors and adjust their roles between those two.

- Admins cannot create or delete users (only owners can).

- Admins cannot make other users owners or admins (only owners can).

Retry block runs from specific block

For standard Python pipelines, retry block runs from a selected block. The selected block and all downstream blocks will be re-run after clicking the Retry from selected block button.
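
Which blocks get re-run can be sketched as a breadth-first walk over the pipeline's dependency graph. `blocks_to_retry` and the block names are hypothetical, and the traversal order is an illustration rather than Mage's scheduling order.

```python
from collections import deque
from typing import Dict, List


def blocks_to_retry(downstream: Dict[str, List[str]], selected: str) -> List[str]:
    """Return the selected block followed by every block downstream of it."""
    order = [selected]
    seen = {selected}
    queue = deque([selected])
    while queue:
        current = queue.popleft()
        for child in downstream.get(current, []):
            if child not in seen:  # a block reachable by two paths is listed once
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


pipeline = {
    "load": ["clean", "stats"],
    "clean": ["export"],
    "stats": ["export"],
    "export": [],
}
retry_from_clean = blocks_to_retry(pipeline, "clean")
```

Selecting `clean` re-runs `clean` and `export`, while `load` and `stats` are left alone.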

Other bug fixes & polish

- Fix terminal user authentication. Update terminal authentication to happen on message.

- Fix a potential authentication issue for the Google Cloud PubSub publisher client

- Dependency graph improvements

  - Update dependency graph connection depending on port side

  - Show all ports for data loader and exporter blocks in dependency graph

- DBT

  - Support DBT alias and schema model config

  - Fix limit property in DBT block PUT request payload.

- Retry pipeline run

  - Fix bug: Individual pipeline run retries do not work on sqlite.

  - Allow bulk retry runs when DISABLE_NOTEBOOK_EDIT_ACCESS enabled

  - Fix bug: Retried pipeline runs and errors don’t appear in Backfill detail page.

- Fix bug: When Mage fails to fetch a pipeline due to a backend exception, it doesn't show the actual error. It uses "undefined" in the pipeline url instead, which makes it hard to debug the issue.

- Improve job scheduling: If jobs with QUEUED status are not in queue, re-enqueue them.

- Pass imagePullSecrets to k8s job when using k8s as the executor.

- Fix streaming pipeline cancellation.

- Fix the version of google-cloud-run package.

- Fix query permissions for block resource

- Catch sqlalchemy.exc.InternalError in server and roll back transaction.


0.8.69

1 year ago

Markdown blocks aka Note blocks or Text blocks

Added Markdown block to Pipeline Editor.

Doc: https://docs.mage.ai/guides/blocks/markdown-blocks

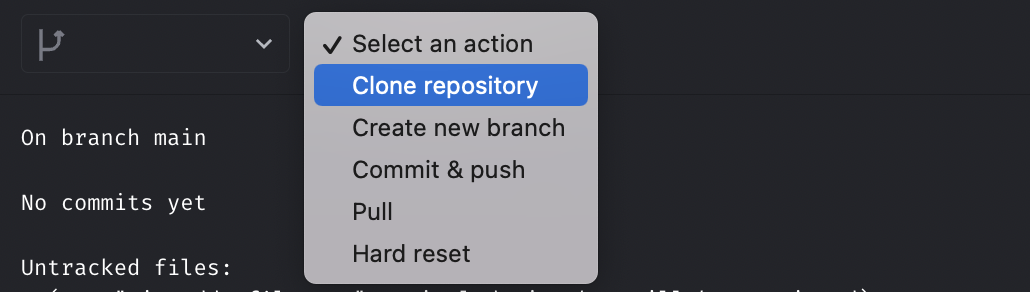

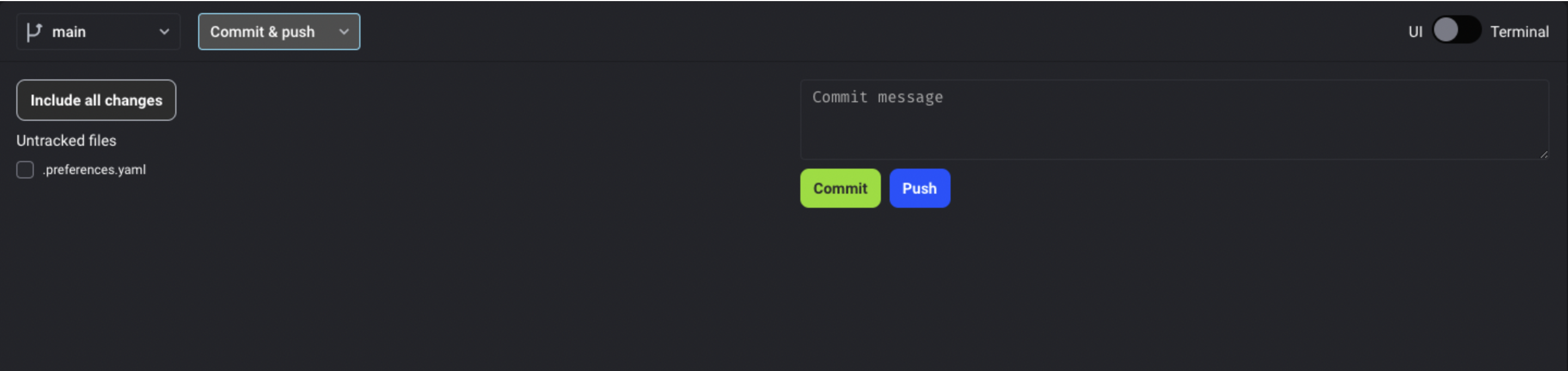

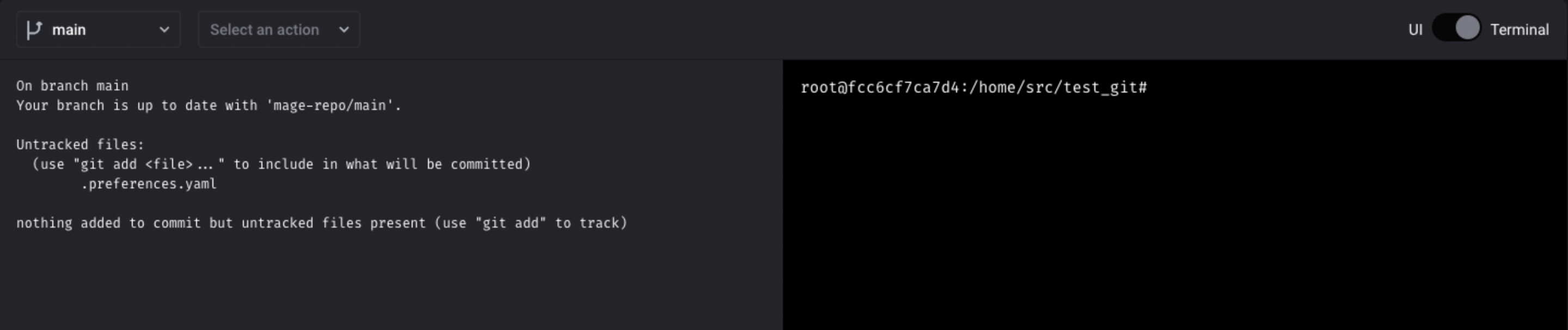

Git integration improvements

Add git clone action

Allow users to select which files to commit

Add HTTPS authentication

Doc: https://docs.mage.ai/production/data-sync/git#https-token-authentication

Add a terminal toggle, so that users have easier access to the terminal

Callback block improvements

Doc: https://docs.mage.ai/development/blocks/callbacks/overview

Make callback block more generic and support it in data integration pipeline.

Keyword arguments available in data integration pipeline callback blocks: https://docs.mage.ai/development/blocks/callbacks/overview#data-integration-pipelines-only

Transfer owner status or edit the owner account email

- Owners can make other users owners.

- Owners can edit other users' emails.

- Users can edit their emails.

Bulk retry pipeline runs.

Support bulk retrying pipeline runs for a pipeline.

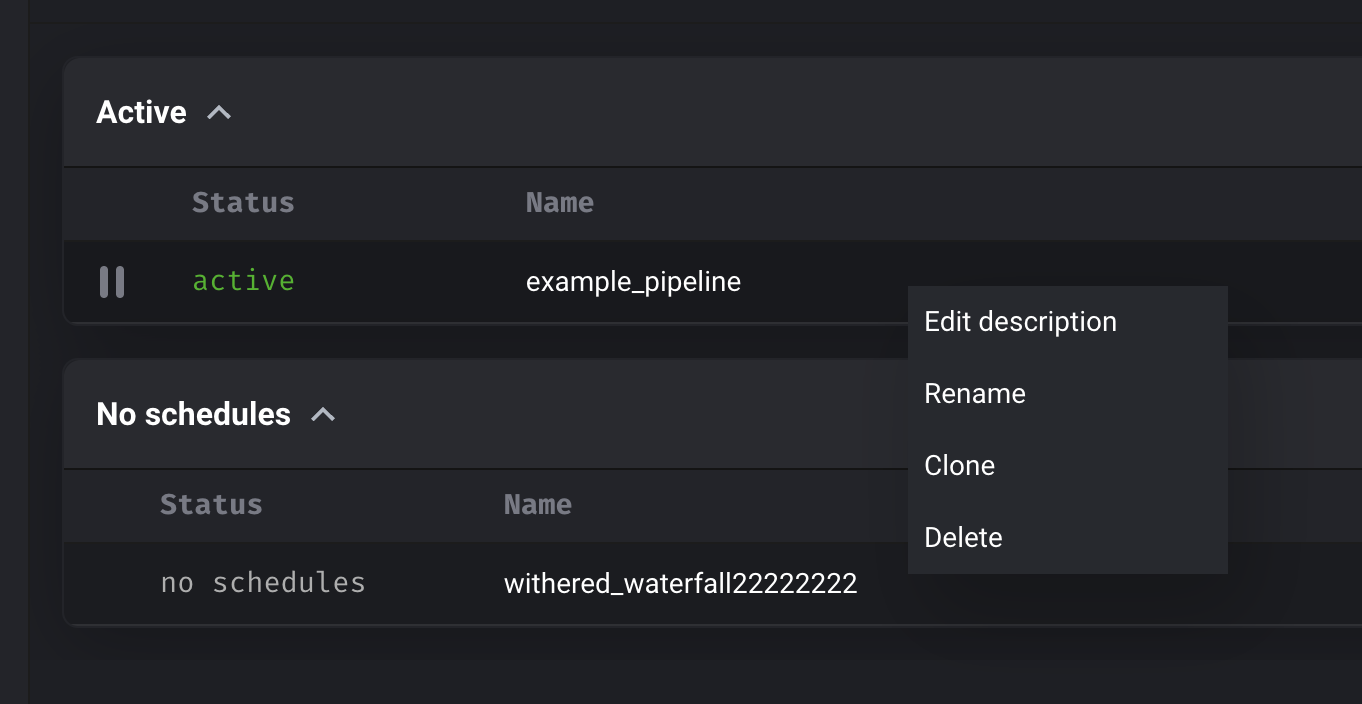

Right click context menu

Add right click context menu for row on pipeline list page for pipeline actions (e.g. rename).

Navigation improvements

When hovering over left and right vertical navigation, expand it to show navigation title like BigQuery’s UI.

Use Great Expectations suite from JSON object or JSON file

Doc: https://docs.mage.ai/development/testing/great-expectations#json-object

Support DBT incremental models

Doc: https://docs.mage.ai/dbt/incremental-models

Data integration pipeline

New destination: Google Cloud Storage

Shout out to André Ventura for his contribution of adding the Google Cloud Storage destination to data integration pipeline.

Other improvements

- Use bookmarks properly in Intercom incremental streams.

- Support IAM role based authentication in the Amazon S3 source and Amazon S3 destination for data integration pipelines

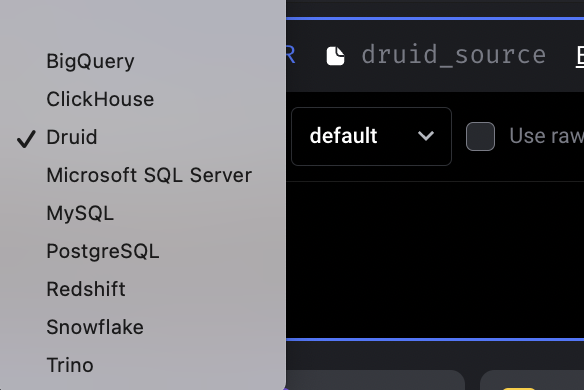

SQL module improvements

Add Apache Druid data source

Shout out to Dhia Eddine Gharsallaoui again for his contribution of adding Druid data source to Mage.

Doc: https://docs.mage.ai/integrations/databases/Druid

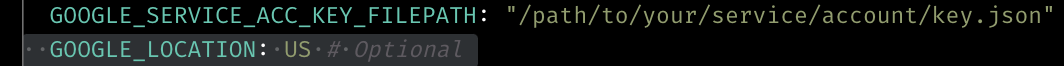

Add location as a config in BigQuery IO

Speed up Postgres IO export method

Use COPY command in mage_ai.io.postgres.Postgres export method to speed up writing data to Postgres.

Streaming pipeline

New source: Google Cloud PubSub

Doc: https://docs.mage.ai/guides/streaming/sources/google-cloud-pubsub

Deserialize message with Avro schema in Confluent schema registry

Kubernetes executor

Add config to set all pipelines to use K8s executor

Set the environment variable DEFAULT_EXECUTOR_TYPE to k8s to use the K8s executor by default for all pipelines. Doc: https://docs.mage.ai/production/configuring-production-settings/compute-resource#2-set-executor-type-and-customize-the-compute-resource-of-the-mage-executor

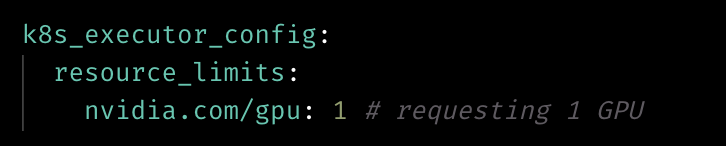

Add the k8s_executor_config to project’s metadata.yaml to apply the config to all the blocks that use k8s executor in this project. Doc: https://docs.mage.ai/production/configuring-production-settings/compute-resource#kubernetes-executor

Support configuring GPU for k8s executor

Allow specifying GPU resource in k8s_executor_config.

Do not use default as service account namespace in Helm chart

Fix service account permission for creating Kubernetes jobs by not using default namespace.

Doc for deploying with Helm: https://docs.mage.ai/production/deploying-to-cloud/using-helm

Other bug fixes & polish

- Fix error: When selecting or filtering data from parent block, error occurs: "AttributeError: list object has no attribute tolist".

- Fix bug: Web UI crashes when entering edit page (github issue).

- Fix bug: Hidden folder (.mage_temp_profiles) disabled in File Browser and not able to be minimized

- Support configuring Mage server public host used in the email alerts by setting environment variable MAGE_PUBLIC_HOST.

- Speed up PipelineSchedule DB query by adding index to column.

- Fix EventRulesResource AWS permissions error

- Fix bug: Bar chart shows too many X-axis ticks


0.8.58

1 year ago

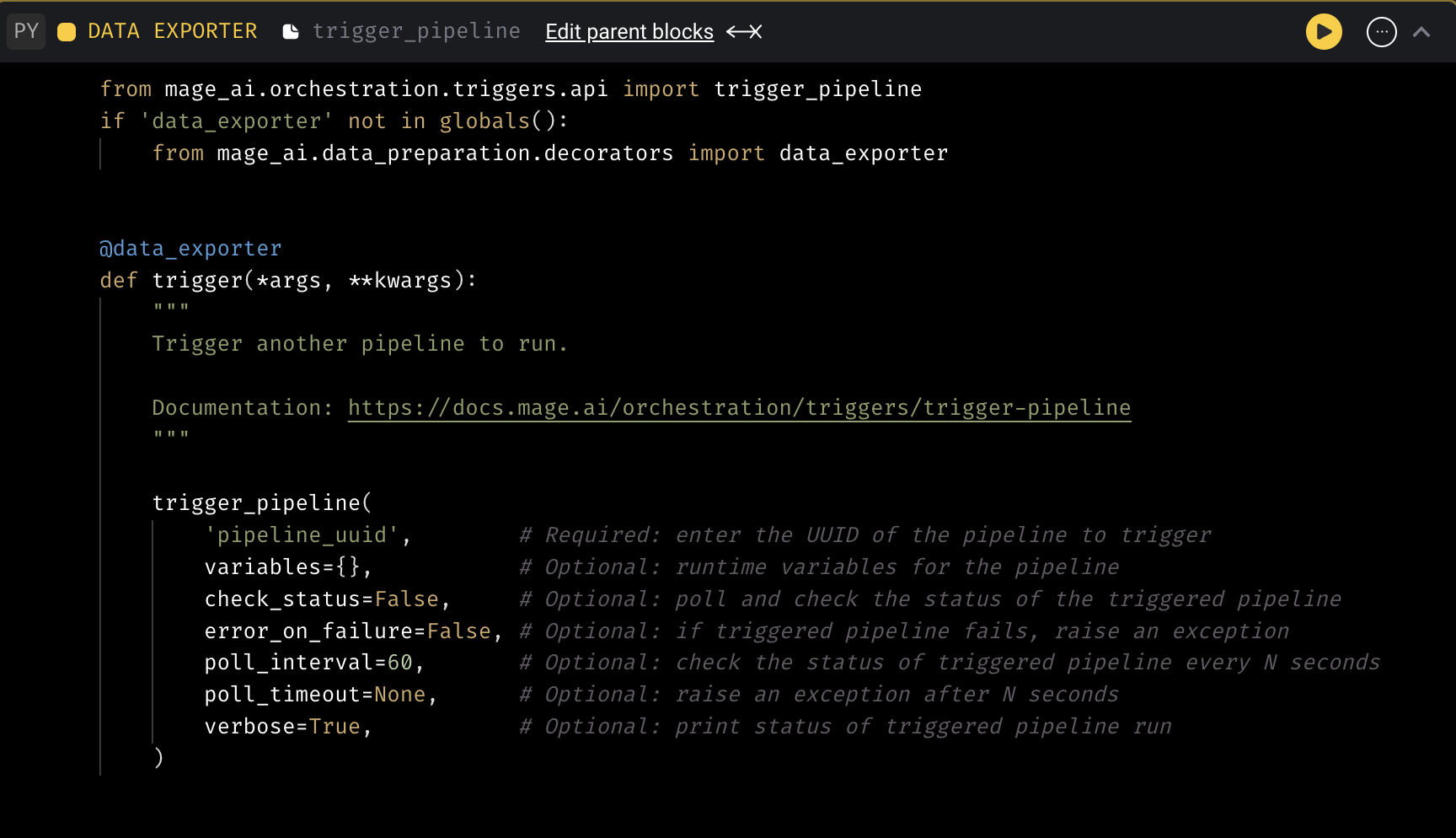

Trigger pipeline from a block

Provide code template to trigger another pipeline from a block within a different pipeline.

Doc: https://docs.mage.ai/orchestration/triggers/trigger-pipeline

Data integration pipeline

New source: Twitter Ads

Streaming pipeline

New sink: MongoDB

Doc: https://docs.mage.ai/guides/streaming/destinations/mongodb

Allow deleting SQS message manually

Mage supports two ways to delete messages:

- Delete the message in the data loader automatically after deserializing the message body.

- Manually delete the message in transformer after processing the message.

Doc: https://docs.mage.ai/guides/streaming/sources/amazon-sqs#message-deletion-method

Allow running multiple executors for streaming pipeline

Set executor_count variable in the pipeline’s metadata.yaml file to run multiple executors at the same time to scale the streaming pipeline execution

Doc: https://docs.mage.ai/guides/streaming/overview#run-pipeline-in-production

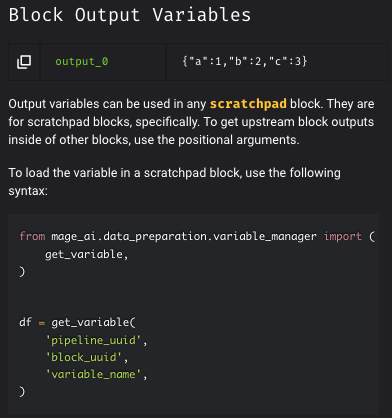

Improve instructions in the sidebar for getting block output variables

- Update generic block templates for custom, transformer, and data exporter blocks so it's easier for users to pass the output from upstream blocks.

- Clarified language for block output variables in Sidekick.

Paginate block runs in Pipeline Detail page and schedules on Trigger page

Added pagination to Triggers and Block Run pages

Automatically install requirements.txt file after git pulls

After pulling the code from git repository to local, automatically install the libraries in requirements.txt so that the pipelines can run successfully without manual installation of the packages.

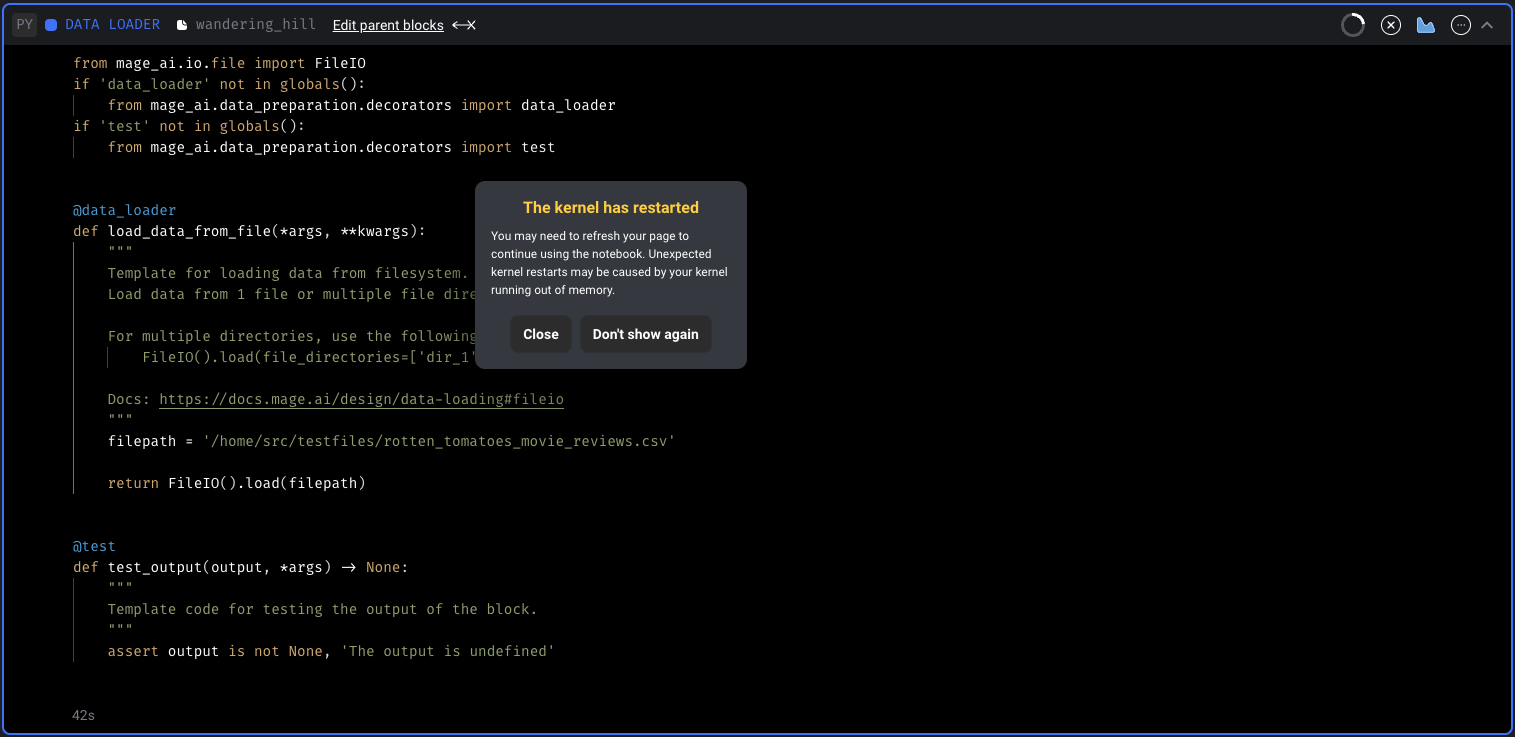

Add warning for kernel restarts and show kernel metrics

- Add warning for kernel if it unexpectedly restarts.

- Add memory and cpu metrics for the kernel.

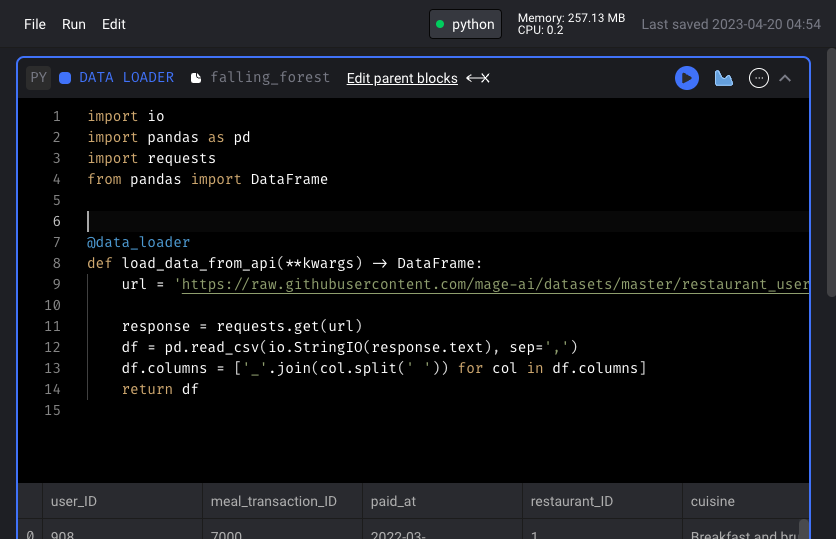

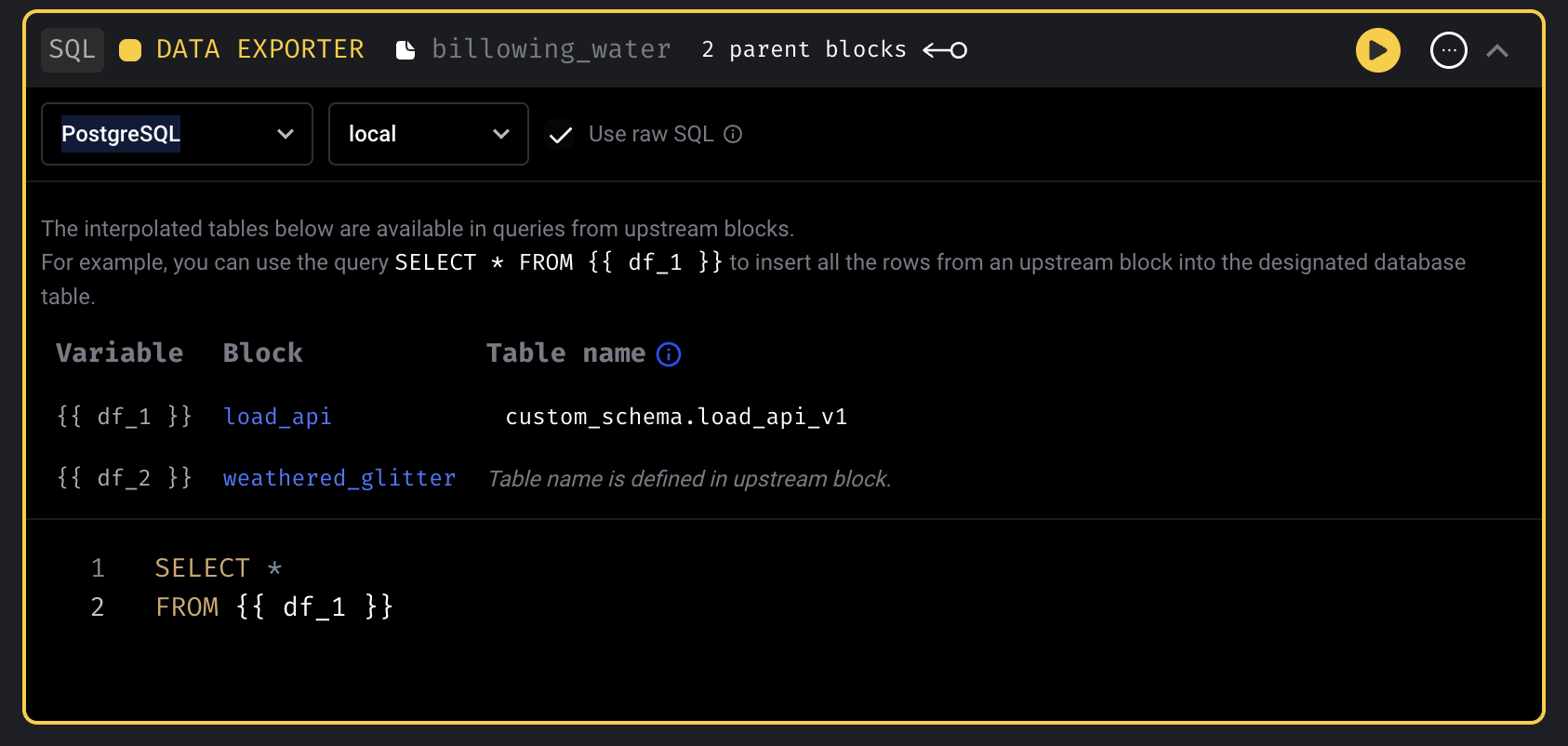

SQL block: Customize upstream table names

Allow setting the table names for upstream blocks when using SQL blocks.

Other bug fixes & polish

- Fix “Too many open files” error by providing the option to increase the “maximum number of open files” value: https://docs.mage.ai/production/configuring-production-settings/overview#ulimit

- Add connect_timeout to PostgreSQL IO

- Add location to BigQuery IO

- Mitigate race condition in Trino IO

- When clicking the sidekick navigation, don’t clear the URL params

- UI: support dynamic child if all dynamic ancestors eventually reduce before dynamic child block

- Fix PySpark pipeline deletion issue. Allow pipeline to be deleted without switching kernel.

- DBT block improvement and bug fixes

  - Fix the bug of running all models of DBT

  - Fix DBT test not reading profile

  - Disable notebook shortcuts when adding new DBT model.

  - Remove .sql extension in DBT model name if user includes it (the .sql extension should not be included).

  - Dynamically size input as user types DBT model name with .sql suffix trailing to emphasize that the .sql extension should not be included.

  - Raise exception and display in UI when user tries to add a new DBT model to the same file location/path.

- Fix onSuccess callback logging issue

- Fixed mage run command. Set repo_path before initializing the DB so that we can get correct db_connection_url.

- Fix bug: Code block running spinner keeps spinning when restarting kernel.

- Fix bug: Terminal doesn’t work in mage demo

- Automatically redirect users to the sign in page if they are signed in but can’t load anything.

- Add folder lines in file browser.

- Fix ModuleNotFoundError: No module named 'aws_secretsmanager_caching' when running pipeline from command line


0.8.52

1 year ago

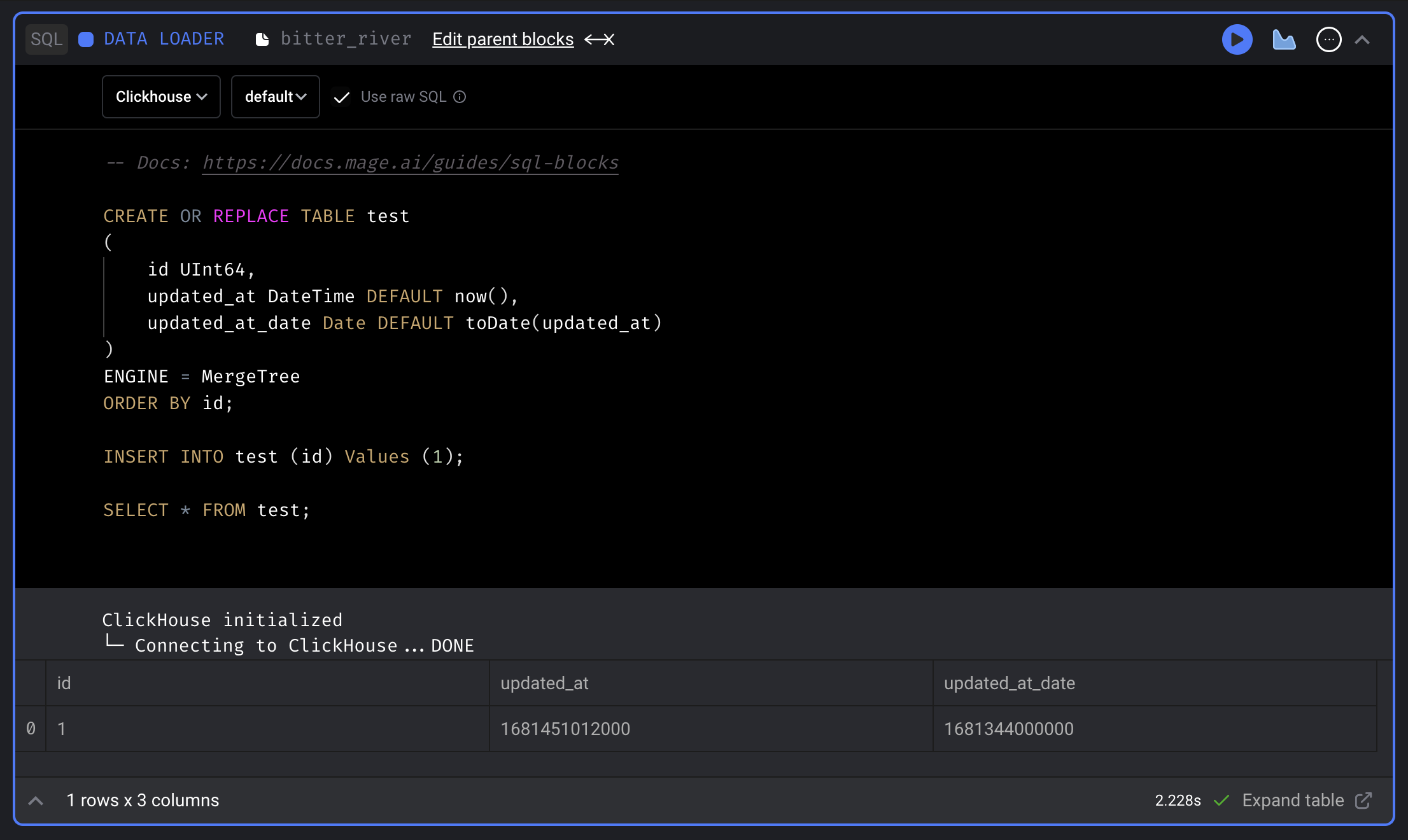

ClickHouse SQL block

Support using SQL block to fetch data from, transform data in and export data to ClickHouse.

Doc: https://docs.mage.ai/integrations/databases/ClickHouse

Trino SQL block

Support using SQL block to fetch data from, transform data in and export data to Trino.

Doc: https://docs.mage.ai/development/blocks/sql/trino

Sentry integration

Enable Sentry integration to track and monitor exceptions in Sentry dashboard. Doc: https://docs.mage.ai/production/observability/sentry

Drag and drop to re-order blocks in pipeline

Mage now supports dragging and dropping blocks to re-order blocks in pipelines.

Streaming pipeline

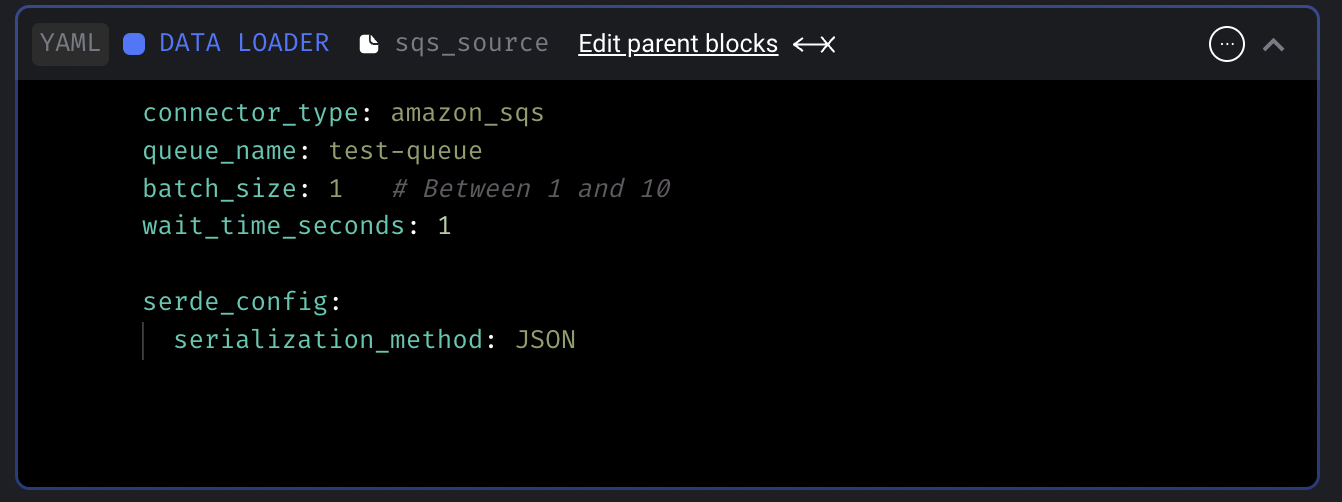

Add AWS SQS streaming source

Support consuming messages from SQS queues in streaming pipelines.

Doc: https://docs.mage.ai/guides/streaming/sources/amazon-sqs

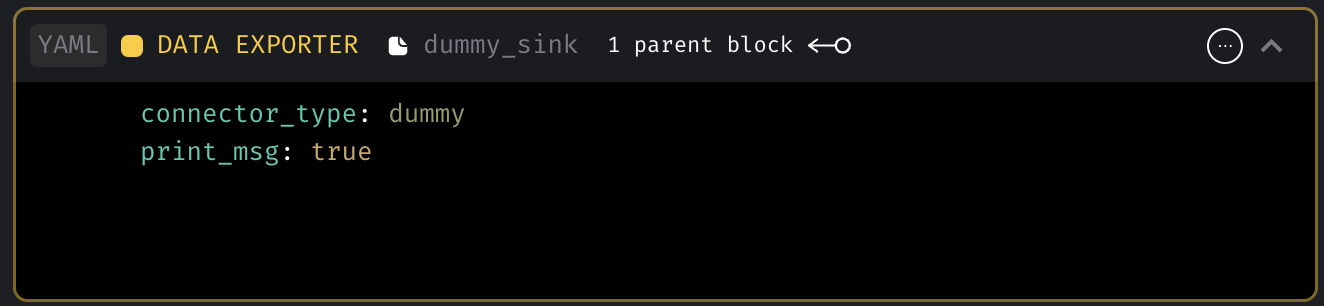

Add dummy streaming sink

Dummy sink will print the message optionally and discard the message. This dummy sink will be useful when users want to trigger other pipelines or 3rd party services using the ingested data in transformer.

Doc: https://docs.mage.ai/guides/streaming/destinations/dummy

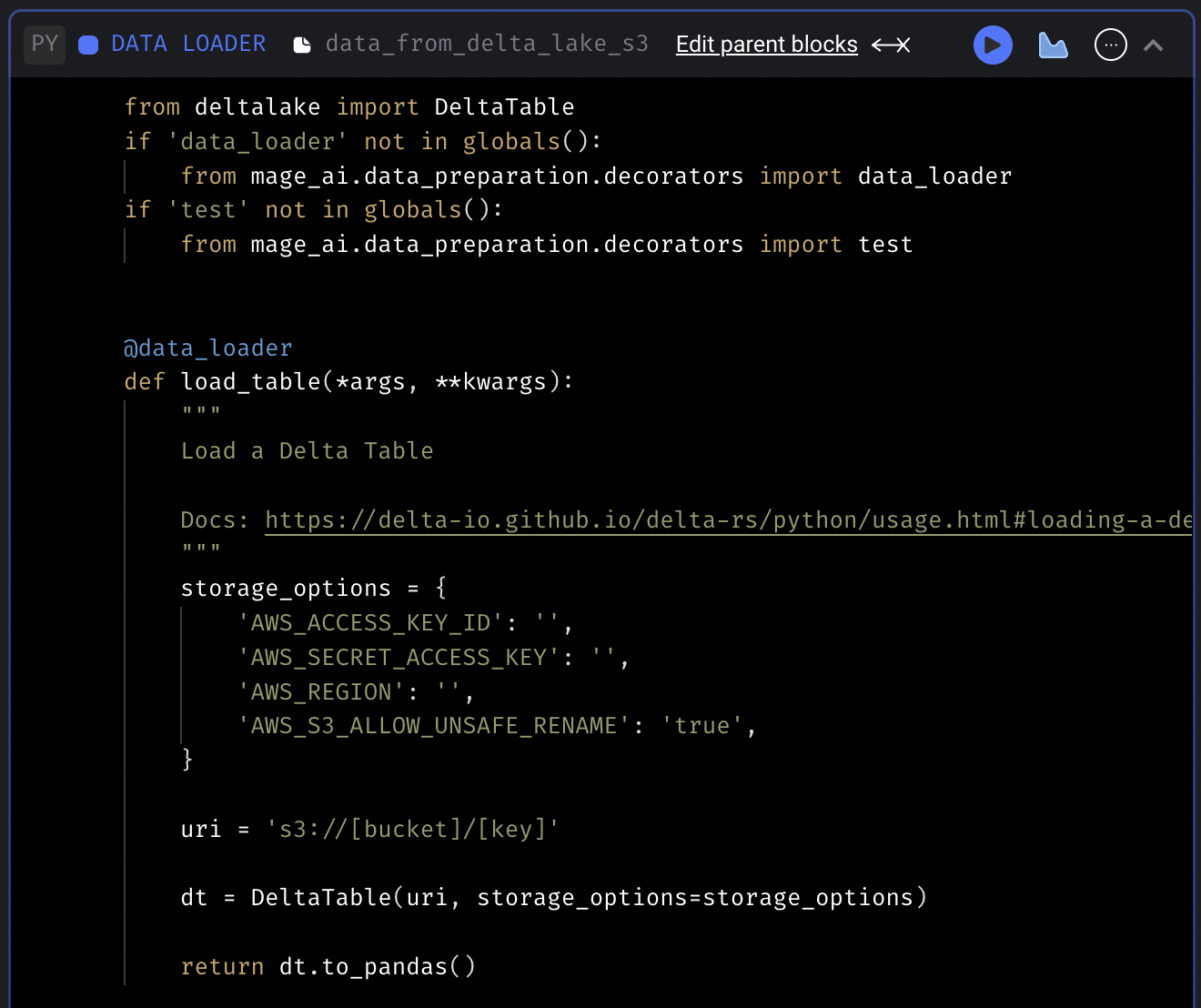

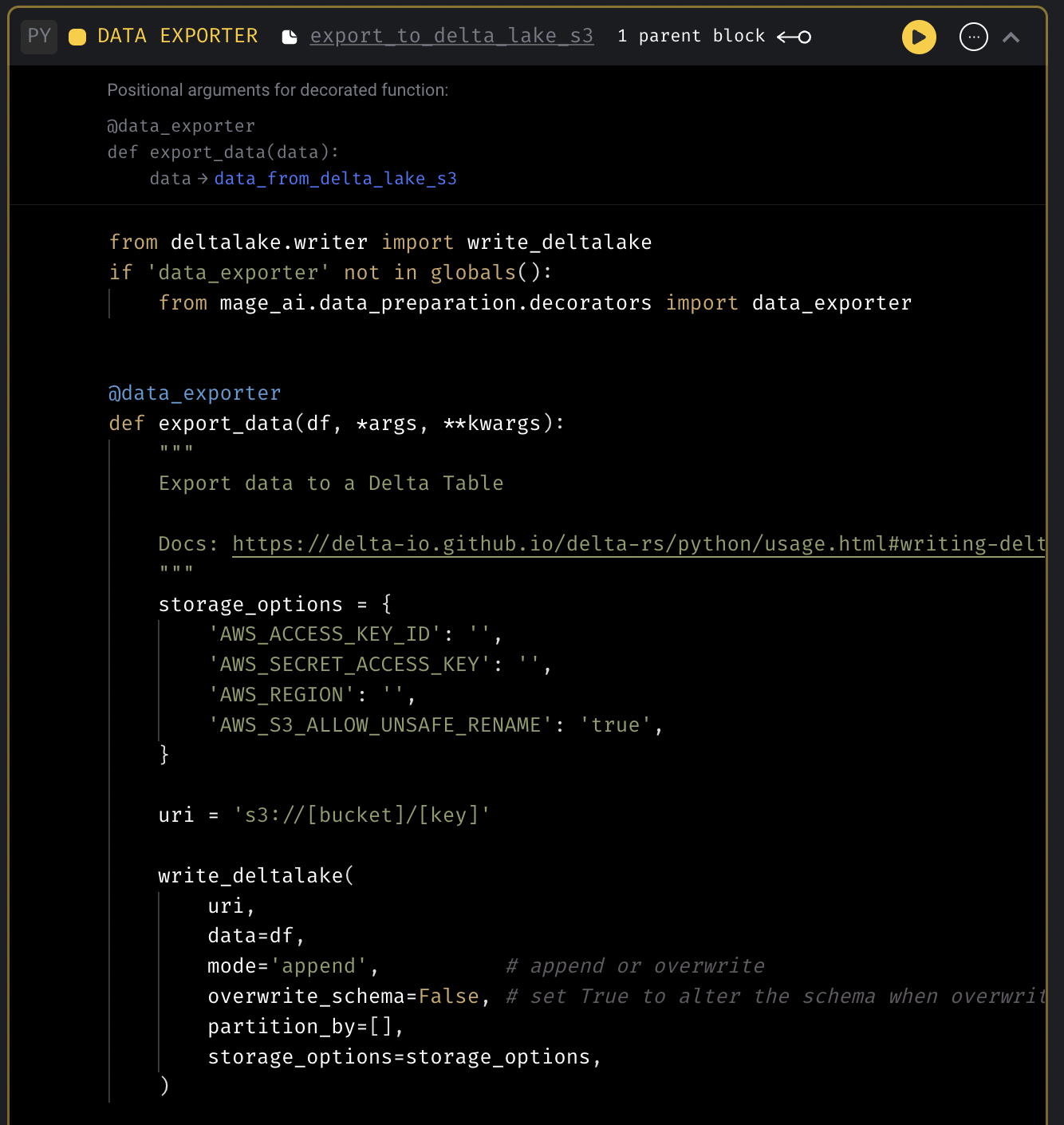

Delta Lake code templates

Add code templates to fetch data from and export data to Delta Lake.

Delta Lake data loader template

Delta Lake data exporter template

Unit tests for Mage pipelines

Support writing unit tests for Mage pipelines that run in the CI/CD pipeline using mock data.

Doc: https://docs.mage.ai/development/testing/unit-tests

Data integration pipeline

- Chargebee source: Fix load sample data issue

- Redshift destination: Handle unique constraints in destination tables.

DBT improvements

- If there are two DBT model files in the same directory with the same name but one has an extra .sql extension, the wrong file may get deleted if you try to delete the file with the double .sql extension.

- Support using Python block to transform data between DBT model blocks

- Support +schema in DBT profile

Other bug fixes & polish

- SQL block

  - Automatically limit SQL block data fetching while using the notebook, but also provide a manual override to adjust the limit while using the notebook. Remove these limits when running the pipeline end-to-end outside the notebook.

  - Only export upstream blocks if the current block is using raw SQL and it's using the variable

  - Update SQL block to use io_config.yaml database and schema by default

- Fix timezone in pipeline run execution date.

- Show backfill preview dates in UTC time

- Raise exception when loading empty pipeline config.

- Fix dynamic block creation when reduced block has another dynamic block as downstream block

- Write Spark DataFrame in parquet format instead of csv format

- Disable user authentication when REQUIRE_USER_AUTHENTICATION=0

- Fix logging for Callback blocks

- Git

  - Import git only when the Git feature is used.

  - Update git actions error message

- Notebook

  - Fix Notebook page freezing issue

  - Make Notebook right vertical navigation sticky

- More documentation

  - Add architecture overview diagram and doc: https://docs.mage.ai/production/deploying-to-cloud/architecture

  - Add doc for setting up event trigger lambda function: https://docs.mage.ai/guides/triggers/events/aws#set-up-lambda-function


0.8.44

1 year ago

Configure trigger in code

In addition to configuring triggers in the UI, Mage now also supports configuring triggers in code. Create a triggers.yaml file under your pipeline folder and enter the triggers config. The triggers will automatically be synced to the DB and the trigger UI.

Doc: https://docs.mage.ai/guides/triggers/configure-triggers-in-code

Centralize server logger and add verbosity control

Shout out to Dhia Eddine Gharsallaoui for his contribution of centralizing the server logging and adding verbosity control. Users can control the verbosity level of the server logging by setting the SERVER_VERBOSITY environment variable. For example, you can set the SERVER_VERBOSITY environment variable to ERROR to only print out errors.

Doc: https://docs.mage.ai/production/observability/logging#server-logging
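
The idea of an environment variable driving log verbosity can be sketched with Python's standard `logging` module. This is not Mage's implementation: the function names, the `"server"` logger name, and the fallback to INFO for missing or unrecognized values are all assumptions made for illustration. Only the SERVER_VERBOSITY variable name comes from the release note.

```python
import logging
import os
from typing import Mapping, Optional

# Level names accepted by this sketch (assumption: standard logging levels).
_LEVELS = {
    "DEBUG": logging.DEBUG,
    "INFO": logging.INFO,
    "WARNING": logging.WARNING,
    "ERROR": logging.ERROR,
    "CRITICAL": logging.CRITICAL,
}


def resolve_server_log_level(
    env: Optional[Mapping[str, str]] = None,
    default: str = "INFO",
) -> int:
    """Translate SERVER_VERBOSITY into a numeric logging level."""
    env = os.environ if env is None else env
    name = env.get("SERVER_VERBOSITY", default).strip().upper()
    # Unknown values fall back to the default instead of raising.
    return _LEVELS.get(name, _LEVELS[default])


def configure_server_logger(
    env: Optional[Mapping[str, str]] = None,
) -> logging.Logger:
    """Apply the resolved level to a hypothetical 'server' logger."""
    logger = logging.getLogger("server")
    logger.setLevel(resolve_server_log_level(env))
    return logger


logger = configure_server_logger({"SERVER_VERBOSITY": "ERROR"})
logger.warning("suppressed at ERROR verbosity")
logger.error("still emitted at ERROR verbosity")
```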

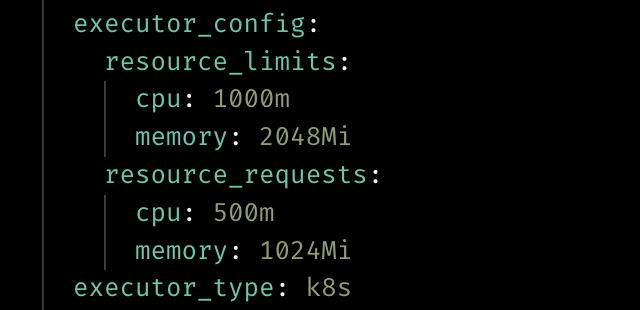

Customize resource for Kubernetes executor

Users can now customize resources when using the Kubernetes executor by adding the executor_config to the block config in the pipeline’s metadata.yaml.

Doc: https://docs.mage.ai/production/configuring-production-settings/compute-resource#kubernetes-executor

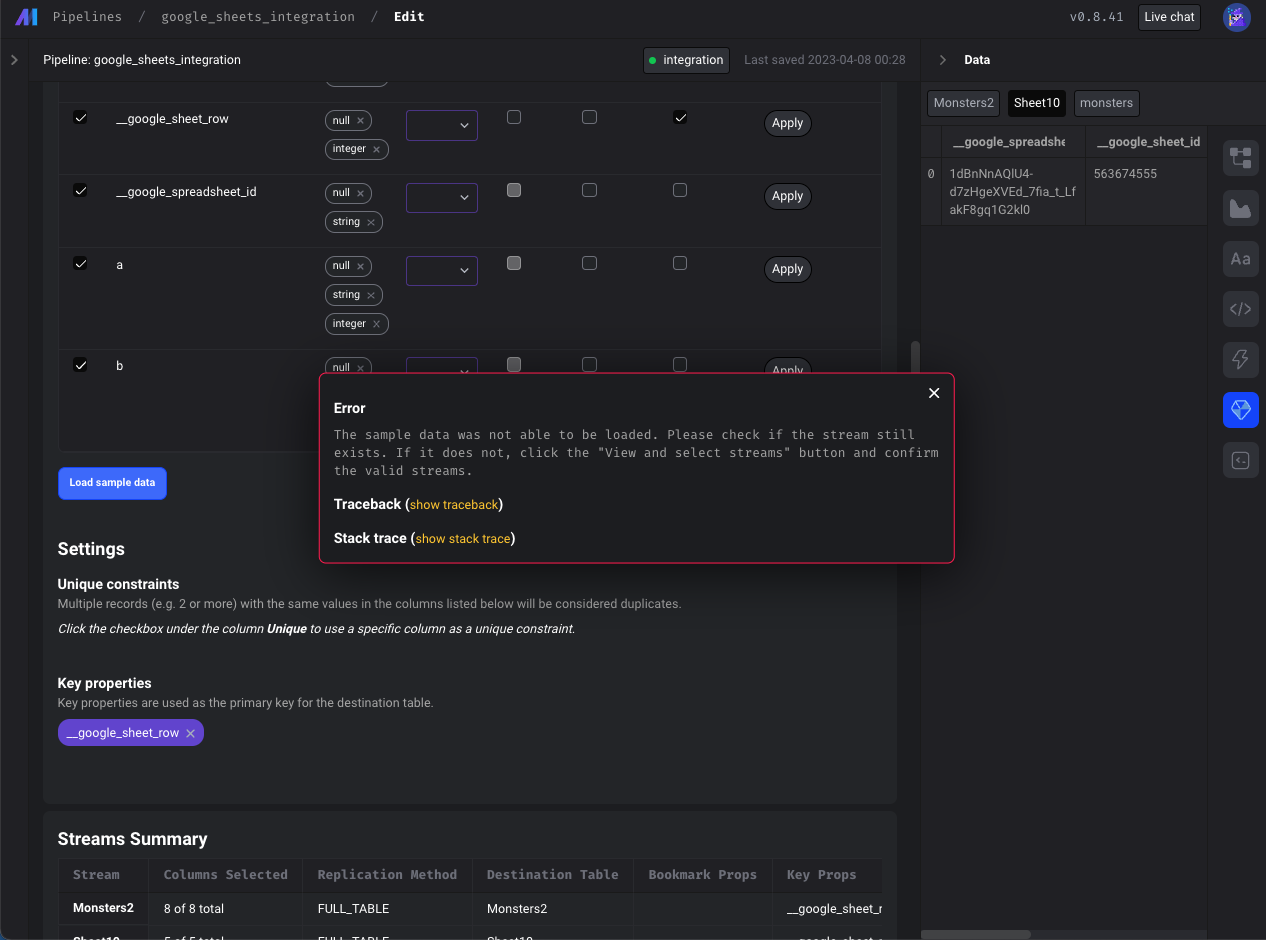

Data integration pipelines

- Google sheets source: Fix loading sample data from Google Sheets

- Postgres source: Allow customizing the publication name for logical replication

- Google search console source: Support email field in google_search_console config

- BigQuery destination: Limit the number of subqueries in BigQuery query

- Show a more descriptive error (instead of {}) when a stream that was previously selected may have been deleted or renamed. If a previously selected stream was deleted or renamed, it will still appear in the SelectStreams modal but will automatically be deselected and marked in red font as no longer available. The user needs to click "Confirm" to remove the deleted stream from the schema.

Terminal improvements

- Use named terminals instead of creating a unique terminal every time Mage connects to the terminal websocket.

- Update terminal for Windows. Use the cmd shell command for Windows instead of bash. Allow users to overwrite the shell command with the SHELL_COMMAND environment variable.

- Support copying and pasting multiple commands in the terminal at once.

- When changing the path in the terminal, don’t permanently change the path globally for all other processes.

- Show correct logs in terminal when installing requirements.txt.

DBT improvements

- Interpolate environment variables and secrets in DBT profile

Git improvements

- Update git to support multiple users

Postgres exporter improvements

- Support reordering columns when exporting a dataframe to Postgres

- Support specifying unique constraints when exporting the dataframe

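    from mage_ai.io.config import ConfigFileLoader
    from mage_ai.io.postgres import Postgres

    # df, schema_name, table_name, config_path and config_profile are defined elsewhere in the block
    with Postgres.with_config(ConfigFileLoader(config_path, config_profile)) as loader:
        loader.export(
            df,
            schema_name,
            table_name,
            index=False,
            if_exists='append',
            allow_reserved_words=True,
            unique_conflict_method='UPDATE',  # update existing rows on conflict
            unique_constraints=['col'],  # columns that must be unique
        )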
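
For readers unfamiliar with how a unique constraint and an UPDATE conflict method typically translate to SQL, the sketch below builds a PostgreSQL `INSERT ... ON CONFLICT` statement. `build_upsert_sql` is a hypothetical helper written for this example; whether Mage emits exactly this statement is an assumption, and the fallback to `DO NOTHING` for any method other than UPDATE is this sketch's choice.

```python
from typing import List


def build_upsert_sql(
    schema_name: str,
    table_name: str,
    columns: List[str],
    unique_constraints: List[str],
    unique_conflict_method: str = "UPDATE",
) -> str:
    """Build a parameterized INSERT with an optional ON CONFLICT clause."""
    col_list = ", ".join(f'"{c}"' for c in columns)
    placeholders = ", ".join(["%s"] * len(columns))
    sql = (
        f'INSERT INTO "{schema_name}"."{table_name}" '
        f"({col_list}) VALUES ({placeholders})"
    )
    if not unique_constraints:
        # No constraint given: plain insert.
        return sql

    conflict_cols = ", ".join(f'"{c}"' for c in unique_constraints)
    if unique_conflict_method.upper() == "UPDATE":
        # Overwrite every non-key column with the incoming value.
        updates = ", ".join(
            f'"{c}" = EXCLUDED."{c}"'
            for c in columns
            if c not in unique_constraints
        )
        if updates:
            return f"{sql} ON CONFLICT ({conflict_cols}) DO UPDATE SET {updates}"
    # Assumption: any other method, or no updatable columns, skips the row.
    return f"{sql} ON CONFLICT ({conflict_cols}) DO NOTHING"


print(build_upsert_sql("public", "users", ["col", "name"], ["col"]))
```

With an UPDATE-style conflict method, rows whose unique columns already exist are overwritten rather than duplicated or rejected.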

Other bug fixes & polish

- Fix chart loading errors.

- Allow pipeline runs to be canceled from UI.

- Fix raw SQL block trying to export upstream python block.

- Don’t require metadata for dynamic blocks.

- When editing a file in the file editor, disable keyboard shortcuts for notebook pipeline blocks.

- Increase autosave interval from 5 to 10 seconds.

- Improve vertical navigation fixed scrolling.

- Allow users to force delete block files. When attempting to delete a block file with downstream dependencies, users can now override the safeguards in place and choose to delete the block regardless.


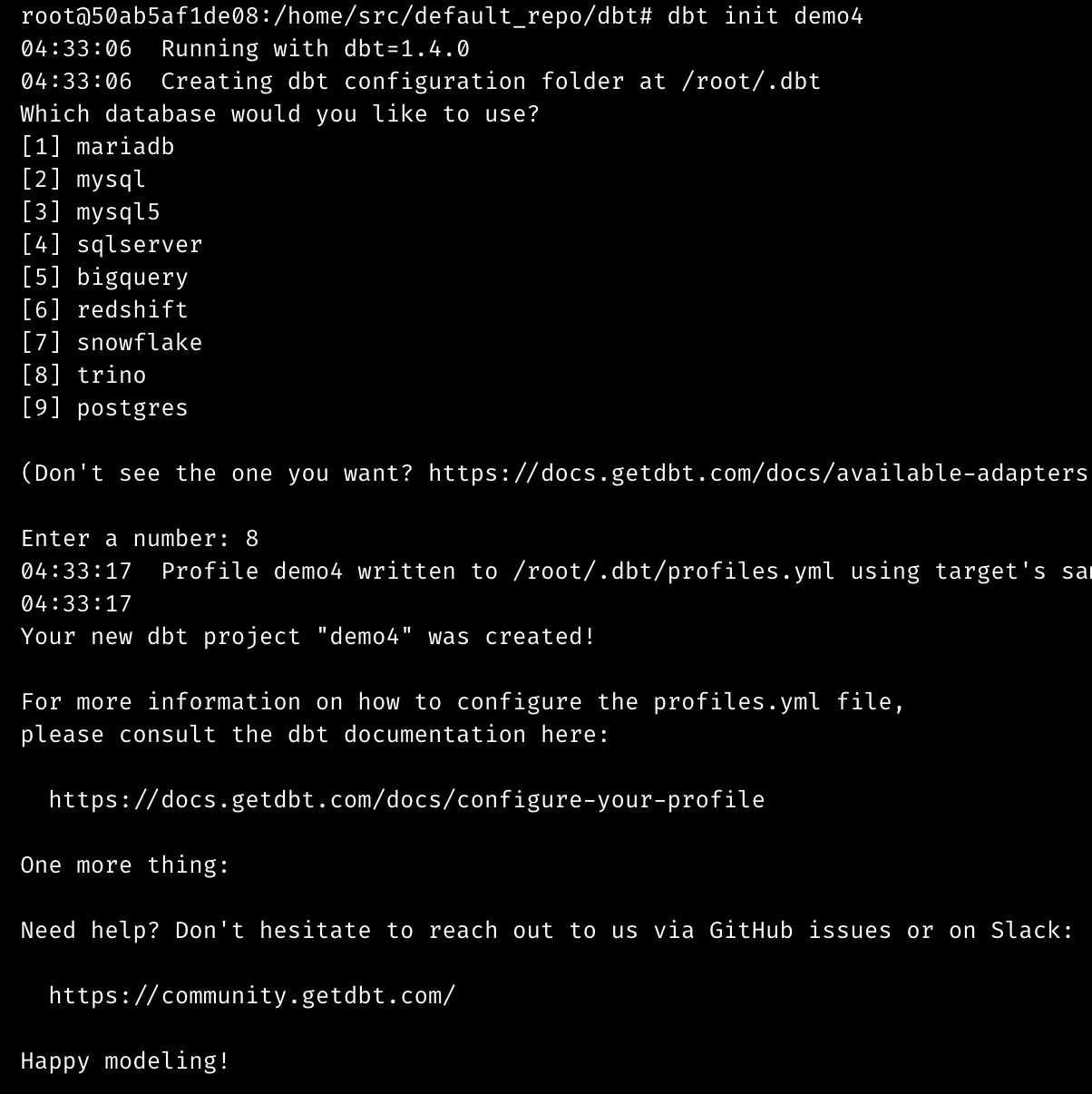

0.8.37

1 year ago

Interactive terminal

The terminal experience is improved in this release, which adds new interactive features and boosts performance. Now, you can use the following interactive commands and more:

- git add -p

- dbt init demo

- great_expectations init

Data integration pipeline

New source: Google Ads

Shout out to Luis Salomão for adding the Google Ads source.

New source: Snowflake

New destination: Amazon S3

Bug Fixes

- In the MySQL source, map the Decimal type to Number.

- In the MySQL destination, use DOUBLE PRECISION instead of DECIMAL as the column type for float/double numbers.

Streaming pipeline

New sink: Amazon S3

Doc: https://docs.mage.ai/guides/streaming/destinations/amazon-s3

Other improvements

- Enable the logging of custom exceptions in the transformer of a streaming pipeline. Here is an example code snippet:

    from typing import Dict, List

    @transformer
    def transform(messages: List[Dict], *args, **kwargs):
        try:
            raise Exception('test')
        except Exception as err:
            # The logger is passed to the block through kwargs
            kwargs['logger'].error('Test exception', error=err)
        return messages

- Support cancelling a running streaming pipeline (when the pipeline is executed in PipelineEditor) after the page is refreshed.

Alerting option for Google Chat

Shout out to Tim Ebben for adding the option to send alerts to Google Chat in the same way as Teams/Slack using a webhook.

Example config in project’s metadata.yaml

    notification_config:
      alert_on:
        - trigger_failure
        - trigger_passed_sla
      slack_config:
        webhook_url: ...

How to create webhook url: https://developers.google.com/chat/how-tos/webhooks#create_a_webhook

Other bug fixes & polish

- Prevent a user from editing a pipeline if it’s stale. A pipeline can go stale if there are multiple tabs open trying to edit the same pipeline or multiple people editing the pipeline at different times.

- Fix bug: Code block scrolls out of view when focusing on the code block editor area and collapsing/expanding blocks within the code editor.

- Fix bug: Sync UI is not updating the "rows processed" value.

- Fix the path issue of running dynamic blocks on a Windows server.

- Fix index out of range error in data integration transformer when filtering data in the transformer.

- Fix issues of loading sample data in Google Sheets.

- Fix chart blocks loading data.

- Fix Git integration bugs:

  - The confirm modal shown after clicking “synchronize data” was sometimes not actually running the sync, so it was removed.

  - Fix various git related user permission issues.

  - Create local repo git path if it doesn’t exist already.

- Add preventive measures for saving a pipeline:

  - If the content that is about to be saved to a YAML file is invalid YAML, raise an exception.

  - If the block UUIDs from the current pipeline and the content that is about to be saved differ, raise an exception.

-

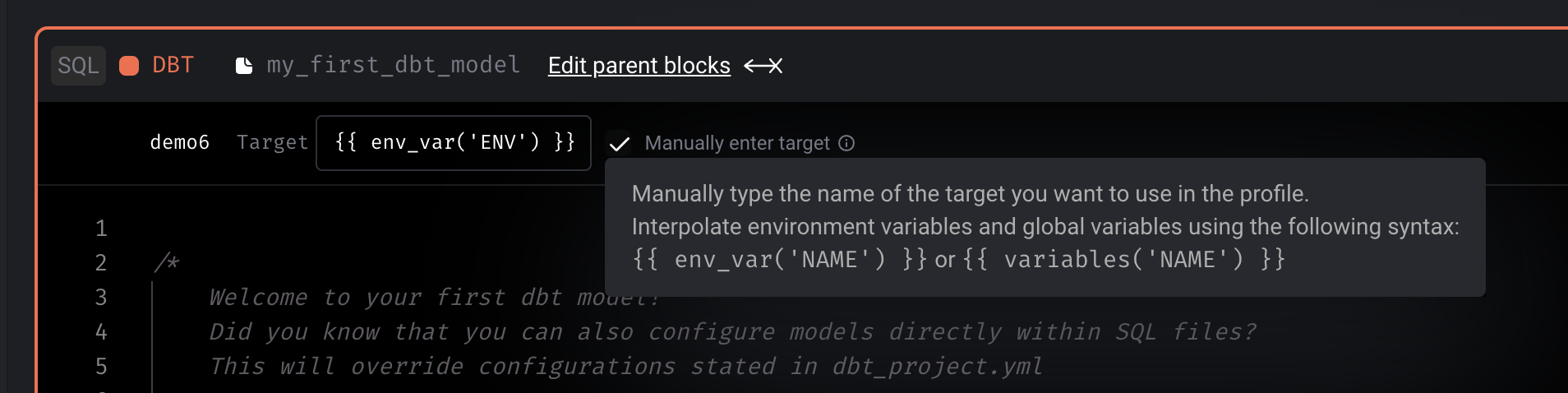

DBT block

- Support DBT staging. When a DBT model runs and if it’s configured to use a schema with a suffix, Mage will now take that into account when fetching a sample of the model at the end of the block run.

- Fix

Circular reference detectedissue with DBT variables. - Manually input DBT block profile to allow variable interpolation.

- Show DBT logs when running compile and preview.

-

SQL block

- Don’t limit raw SQL query; allow all rows to be retrieved.

- Support SQL blocks passing data to downstream SQL blocks with different data providers.

- Raise an exception if a raw SQL block is trying to interpolate an upstream raw SQL block from a different data provider.

- Fix date serialization from 1 block to another.

-

Add helper for using CRON syntax in trigger setting.

- Document internal API endpoints for development and contributions: https://docs.mage.ai/contributing/backend/api/overview
