Logparser

A machine learning toolkit for log parsing [ICSE'19, DSN'16]

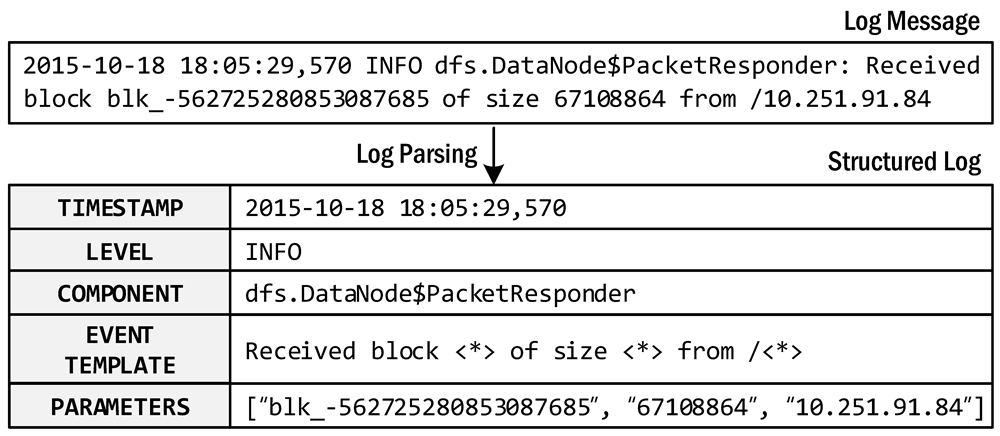

Logparser provides a machine learning toolkit and benchmarks for automated log parsing, which is a crucial step for structured log analytics. By applying logparser, users can automatically extract event templates from unstructured logs and convert raw log messages into a sequence of structured events. The process of log parsing is also known as message template extraction, log key extraction, or log message clustering in the literature.

An example of log parsing
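To make the idea concrete, here is a minimal sketch of the last step of log parsing: given event templates that are already known, match a raw message against them and pull out its parameters. It assumes the `<*>` wildcard notation used in the parsing results; the helper names `template_to_regex` and `match_event` are hypothetical and are not part of the logparser API. A real parser learns the templates from the logs rather than receiving them as input.

```python
import re

def template_to_regex(template):
    """Turn a template such as 'Received block <*> of size <*>' into a regex."""
    # Escape the literal parts and let each <*> wildcard capture one parameter.
    parts = [re.escape(p) for p in template.split("<*>")]
    return re.compile("^" + "(.*?)".join(parts) + "$")

def match_event(message, templates):
    """Return (template, parameters) for the first matching template, else None."""
    for template in templates:
        m = template_to_regex(template).match(message)
        if m:
            return template, list(m.groups())
    return None

# Templates are given here for illustration only.
templates = [
    "PacketResponder <*> for block <*> terminating",
    "Received block <*> of size <*> from <*>",
]
message = "Received block blk_3587508140051953248 of size 67108864 from /10.251.42.84"
print(match_event(message, templates))
```

The hard part that the parsers in this toolkit address is discovering the templates automatically; the sketch only shows what a template and its parameter list mean.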

🌈 New updates

- Since the first release of logparser, many PRs and issues have been submitted due to incompatibility with Python 3. Finally, we update logparser v1.0.0 with support for Python 3. Thanks for all the contributions (#PR86, #PR85, #PR83, #PR80, #PR65, #PR57, #PR53, #PR52, #PR51, #PR49, #PR18, #PR22)!

- We build the package wheel logparser3 and release it on PyPI. Please install it via pip install logparser3.

- We refactor the code structure and beautify the code with the Python code formatter black.

Log parsers available:

💡 You are welcome to submit a PR to add your parser code to logparser and your paper to the table.

Installation

We recommend installing the logparser package and requirements via pip install.

pip install logparser3

In particular, the package depends on the following requirements. Note that regex matching in Python is brittle, so we recommend pinning the regex library to version 2022.3.2.

- python 3.6+

- regex 2022.3.2

- numpy

- pandas

- scipy

- scikit-learn

Conditional requirements:

- If using MoLFI: deap

- If using SHISO: nltk

- If using SLCT: gcc

- If using LogCluster: perl

- If using NuLog: torch, torchvision, keras_preprocessing

- If using DivLog: openai, tiktoken (requires Python 3.8+)
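Since the regex library is expected at a specific version, it can help to check the installed version before running a parser. The following is a minimal sketch, not part of logparser; the helper name `pin_status` is hypothetical, and it assumes Python 3.8+ because it relies on `importlib.metadata` from the standard library.

```python
from importlib import metadata  # in the standard library since Python 3.8

def pin_status(package, pinned_version):
    """Return 'ok', 'mismatch', or 'missing' for an installed package."""
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return "missing"
    return "ok" if installed == pinned_version else "mismatch"

# 2022.3.2 is the regex version recommended above.
print("regex:", pin_status("regex", "2022.3.2"))
```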

Get started

- Run the demo:

  For each log parser, we provide a demo to help you get started. Each demo shows the basic usage of a target log parser and the hyper-parameters to configure. For example, the following commands run the demo for Drain.

      cd logparser/Drain
      python demo.py

- Run the benchmark:

  For each log parser, we provide a benchmark script that runs log parsing on the loghub_2k datasets to evaluate parsing accuracy. You can also use other benchmark datasets for log parsing.

      cd logparser/Drain
      python benchmark.py

  The benchmarking results can be found in the readme file of each parser, e.g., https://github.com/logpai/logparser/tree/main/logparser/Drain#benchmark.

- Parse your own logs:

  It is easy to apply logparser to your own log data. To do so, install the logparser3 package first. Then you can develop your own script following the code snippet below to start log parsing. See the full example code at example/parse_your_own_logs.py.

      from logparser.Drain import LogParser

      input_dir = 'PATH_TO_LOGS/'  # The input directory of log file
      output_dir = 'result/'  # The output directory of parsing results
      log_file = 'unknow.log'  # The input log file name
      log_format = '<Date> <Time> <Level>:<Content>'  # Define log format to split message fields
      # Regular expression list for optional preprocessing (default: [])
      regex = [
          r'(/|)([0-9]+\.){3}[0-9]+(:[0-9]+|)(:|)'  # IP
      ]
      st = 0.5  # Similarity threshold
      depth = 4  # Depth of all leaf nodes

      parser = LogParser(log_format, indir=input_dir, outdir=output_dir, depth=depth, st=st, rex=regex)
      parser.parse(log_file)

  After running logparser, you can obtain the extracted event templates and the parsed structured logs in the output folder.

  - *_templates.csv (See example HDFS_2k.log_templates.csv)

    | EventId | EventTemplate | Occurrences |
    | --- | --- | --- |
    | dc2c74b7 | PacketResponder <*> for block <*> terminating | 311 |
    | e3df2680 | Received block <*> of size <*> from <*> | 292 |
    | 09a53393 | Receiving block <*> src: <*> dest: <*> | 292 |

  - *_structured.csv (See example HDFS_2k.log_structured.csv)

    | ... | Level | Content | EventId | EventTemplate | ParameterList |
    | --- | --- | --- | --- | --- | --- |
    | ... | INFO | PacketResponder 1 for block blk_38865049064139660 terminating | dc2c74b7 | PacketResponder <*> for block <*> terminating | ['1', 'blk_38865049064139660'] |
    | ... | INFO | Received block blk_3587508140051953248 of size 67108864 from /10.251.42.84 | e3df2680 | Received block <*> of size <*> from <*> | ['blk_3587508140051953248', '67108864', '/10.251.42.84'] |
    | ... | INFO | Verification succeeded for blk_-4980916519894289629 | 32777b38 | Verification succeeded for <*> | ['blk_-4980916519894289629'] |
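The roles of log_format and the preprocessing regex list in the snippet above can be illustrated with a small standalone sketch. It assumes that each `<Field>` in the format becomes a named capture group and that every preprocessing match is replaced with the `<*>` wildcard; logparser's own implementation may differ in detail. The helper names `format_to_regex`, `split_fields`, and `mask` are hypothetical, and the sample log line is made up to fit the format.

```python
import re

def format_to_regex(log_format):
    """Compile a regex with one named group per <Field> in log_format."""
    pattern = ""
    # The capturing group makes re.split keep the <Field> tokens at odd indexes.
    for i, piece in enumerate(re.split(r"(<[^<>]+>)", log_format)):
        if i % 2 == 1:
            pattern += "(?P<%s>.*?)" % piece[1:-1]
        else:
            # Literal separator text: escape it and accept any run of whitespace for spaces.
            pattern += r"\s+".join(re.escape(tok) for tok in re.split(r" +", piece))
    return re.compile("^" + pattern + "$")

def split_fields(line, log_format):
    """Return a dict of field name -> value, or None if the line does not fit."""
    m = format_to_regex(log_format).match(line.strip())
    return m.groupdict() if m else None

def mask(content, regex_list):
    """Replace every match of the preprocessing regexes with the <*> wildcard."""
    for rex in regex_list:
        content = re.sub(rex, "<*>", content)
    return content

log_format = "<Date> <Time> <Level>:<Content>"
regex = [r"(/|)([0-9]+\.){3}[0-9]+(:[0-9]+|)(:|)"]  # IP, as in the snippet above

# Hypothetical log line, shaped to fit log_format.
line = "081109 203615 INFO:Received block blk_3587508140051953248 of size 67108864 from /10.251.42.84"
fields = split_fields(line, log_format)
print(fields["Level"])
print(mask(fields["Content"], regex))
```

Masking obvious variables such as IP addresses before parsing gives the parser fewer distinct tokens to reconcile, which is why the regex list is offered as optional preprocessing.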

Production use

Logparser is mainly intended for research and benchmarking. Researchers can use logparser as a code base to develop new log parsers, while practitioners can assess the performance and scalability of current log parsing methods through our benchmarks. We strongly recommend that practitioners try logparser in their production environments, but be aware that the current implementation is far from ready for production use. Although we currently have no plan to make it production-ready, we do have a few suggestions for developers who want to build an intelligent production-level log parser.

- Please be aware of the licenses of third-party libraries used in logparser. We suggest keeping only the parser you need, deleting the others, and then rebuilding the package wheel. This does not break the use of logparser.

- Please improve the efficiency and scalability of logparser with multi-processing, and add failure recovery and persistence to disk or to a message queue such as Kafka.

- Drain3 is a good reference example: it is built with practical enhancements for production scenarios.

🔥 Citation

If you use our logparser tools or benchmarking results in your publication, please cite the following papers.

- [ICSE'19] Jieming Zhu, Shilin He, Jinyang Liu, Pinjia He, Qi Xie, Zibin Zheng, Michael R. Lyu. Tools and Benchmarks for Automated Log Parsing. International Conference on Software Engineering (ICSE), 2019.

- [DSN'16] Pinjia He, Jieming Zhu, Shilin He, Jian Li, Michael R. Lyu. An Evaluation Study on Log Parsing and Its Use in Log Mining. IEEE/IFIP International Conference on Dependable Systems and Networks (DSN), 2016.

🤗 Contributors

- Pinjia He

- Zhujiem

- LIU, Jinyang

- Junjielong Xu

- Shilin HE

- Joseph Hit Hard

- Rustam Temirov

- Siyu Yu (Youth Yu)

- Thomas Ryckeboer

- Isuru Boyagane

Discussion

You are welcome to join our WeChat group for questions and discussion. Alternatively, you can open an issue here.