Kafka Streams Machine Learning Examples

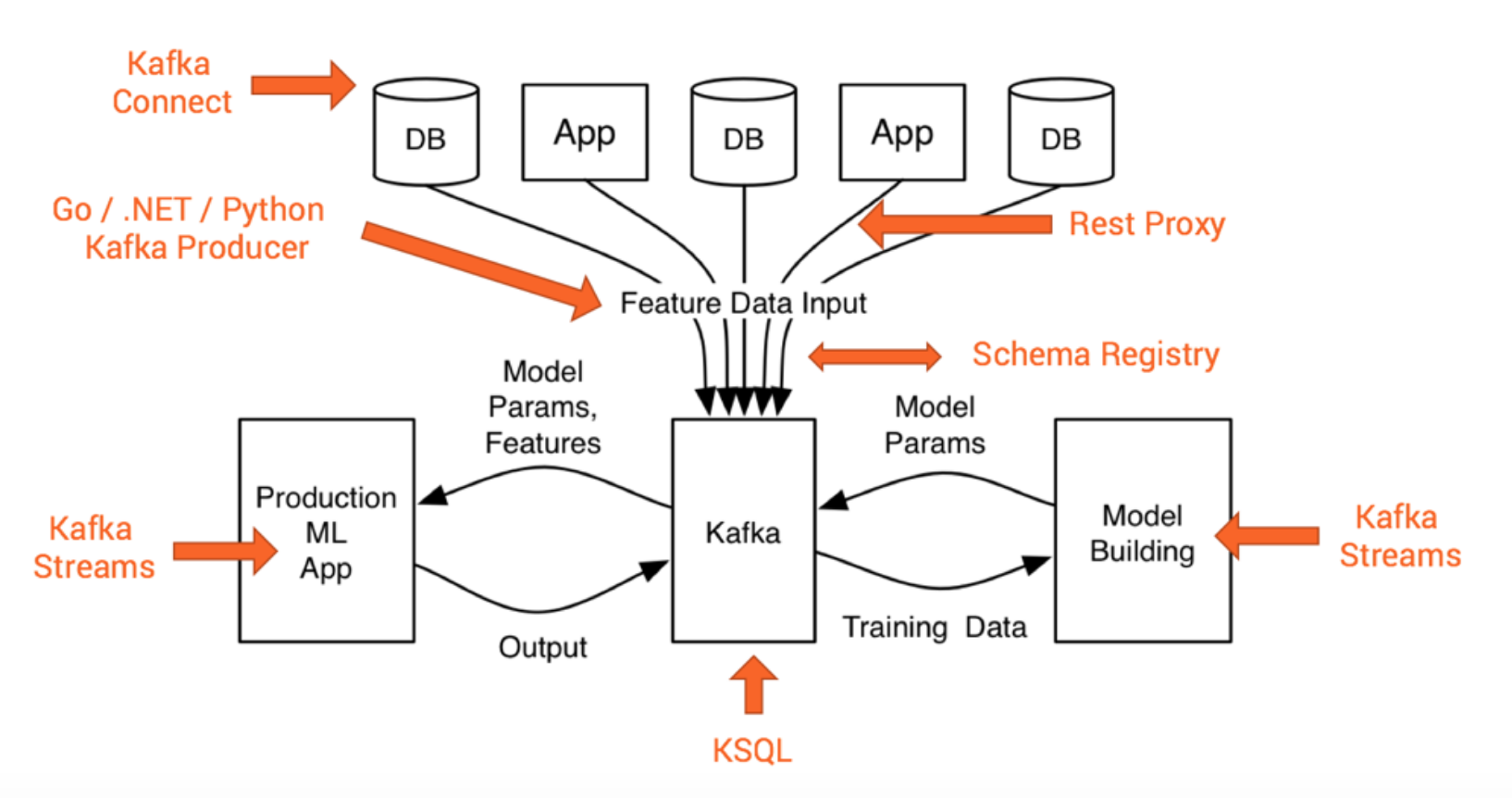

This project contains examples that demonstrate how to deploy analytic models to mission-critical, scalable production environments leveraging Apache Kafka and its Streams API. Models are built with Python, H2O, TensorFlow, Keras, DeepLearning4J and other technologies.

Machine Learning + Kafka Streams Examples

This project contains examples that demonstrate how to deploy analytic models to mission-critical, scalable production environments leveraging Apache Kafka and its Streams API. The examples include analytic models built with TensorFlow, Keras, H2O, Python, DeepLearning4J and other technologies.

Material (Blog Posts, Slides, Videos)

Here is some material on this topic if you want to read about or listen to the theory instead of going straight to the hands-on examples:

- Blog Post: How to Build and Deploy Scalable Machine Learning in Production with Apache Kafka

- Slide Deck: Apache Kafka + Machine Learning => Intelligent Real Time Applications

- Slide Deck: Deep Learning at Extreme Scale (in the Cloud) with the Apache Kafka Open Source Ecosystem

- Video Recording: Deep Learning in Mission Critical and Scalable Real Time Applications with Open Source Frameworks

- Blog Post: Using Apache Kafka to Drive Cutting-Edge Machine Learning - Hybrid ML Architectures, AutoML, and more...

- Blog Post: Machine Learning with Python, Jupyter, KSQL and TensorFlow

- Blog Post: Streaming Machine Learning with Tiered Storage and Without a Data Lake

Use Cases and Technologies

The following examples are already available, including unit tests:

- Deployment of an H2O GBM model to a Kafka Streams application for prediction of flight delays

- Deployment of an H2O Deep Learning model to a Kafka Streams application for prediction of flight delays

- Deployment of a pre-built TensorFlow CNN model for image recognition

- Deployment of a DL4J model to predict the species of Iris flowers

- Deployment of a Keras model (trained with TensorFlow backend) using the Import Model API from DeepLearning4J
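The examples above share one deployment pattern: the model is loaded once when the application starts and is then applied to every record that flows through the stream. The following dependency-free sketch illustrates that pattern with plain Java only. `ThresholdModel`, its 0.5 cutoff, and the comma-separated input format are hypothetical stand-ins, not the H2O, TensorFlow, or DL4J models shipped with this project.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.function.Function;

// Hypothetical stand-in for a trained model; NOT one of the project's real models.
final class ThresholdModel {
    private final double cutoff;

    ThresholdModel(double cutoff) {
        this.cutoff = cutoff;
    }

    // Returns "YES" when the mean of the features exceeds the cutoff, else "NO".
    String predict(double[] features) {
        double sum = 0.0;
        for (double f : features) {
            sum += f;
        }
        double mean = features.length == 0 ? 0.0 : sum / features.length;
        return mean > cutoff ? "YES" : "NO";
    }
}

final class ModelScoringSketch {
    // Load the model once, outside the per-record path.
    private static final ThresholdModel MODEL = new ThresholdModel(0.5);

    // Parses one comma-separated record value and returns the prediction.
    static String scoreRecord(String value) {
        String[] parts = value.split(",");
        double[] features = new double[parts.length];
        for (int i = 0; i < parts.length; i++) {
            features[i] = Double.parseDouble(parts[i].trim());
        }
        return "Prediction: " + MODEL.predict(features);
    }

    // Applies the scoring function to each value, as a stream processor would per record.
    static List<String> scoreAll(List<String> values) {
        Function<String, String> scorer = ModelScoringSketch::scoreRecord;
        List<String> out = new ArrayList<>();
        for (String v : values) {
            out.add(scorer.apply(v));
        }
        return out;
    }

    public static void main(String[] args) {
        for (String result : scoreAll(Arrays.asList("0.9, 0.8", "0.1, 0.2"))) {
            System.out.println(result);
        }
    }
}
```

In a Kafka Streams application, a function like `scoreRecord` would typically be passed to `KStream#mapValues`, so the topology stays a thin wrapper around the model call.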

More sophisticated use cases around Kafka Streams and other technologies will be added over time in this or related GitHub projects. Some ideas:

- Image Recognition with H2O and TensorFlow (to show the difference between using H2O and using only the low-level TensorFlow APIs)

- Anomaly Detection with Autoencoders leveraging DeepLearning4J.

- Cross Selling and Customer Churn Detection using classical Machine Learning algorithms as well as Deep Learning

- Stateful Stream Processing to combine different model execution steps into a more powerful workflow instead of "just" running inference on single events (a good example might be a streaming process with sliding or session windows).

- Keras to build different models with Python, TensorFlow, Theano and other Deep Learning frameworks under the hood + Kafka Streams as generic Machine Learning infrastructure to deploy, execute and monitor these different models.
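To make the session-window idea from the list above more concrete, here is a minimal plain-Java sketch of how events can be grouped into sessions by an inactivity gap. It is an illustration under assumptions, not the Kafka Streams implementation: the class name, the millisecond timestamps, and the rule that a gap exactly equal to `maxGapMs` still belongs to the same session are all choices made for this example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

final class SessionWindowSketch {
    // Groups ascending event timestamps into sessions: a new session starts
    // whenever the gap to the previous event exceeds maxGapMs.
    static List<List<Long>> sessionize(List<Long> sortedTimestamps, long maxGapMs) {
        List<List<Long>> sessions = new ArrayList<>();
        List<Long> current = new ArrayList<>();
        for (Long ts : sortedTimestamps) {
            if (!current.isEmpty() && ts - current.get(current.size() - 1) > maxGapMs) {
                sessions.add(current);
                current = new ArrayList<>();
            }
            current.add(ts);
        }
        if (!current.isEmpty()) {
            sessions.add(current);
        }
        return sessions;
    }

    public static void main(String[] args) {
        // Three events close together, then two more after a long pause.
        List<Long> timestamps = Arrays.asList(0L, 1000L, 2500L, 60000L, 60500L);
        System.out.println(sessionize(timestamps, 5000L));
    }
}
```

With sessions like these, a model could score aggregated features of a whole session rather than one event at a time, which is the kind of workflow the idea describes.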

Some other GitHub projects with more ML + Kafka content already exist:

The most exciting and powerful example first: Streaming Machine Learning at Scale from 100,000 IoT Devices with HiveMQ, Apache Kafka and TensorFlow

Here are some more demos:

- Deep Learning UDF for KSQL: Streaming Anomaly Detection of MQTT IoT Sensor Data using an Autoencoder

- End-to-End ML Integration Demo: Continuous Health Checks with Anomaly Detection using KSQL, Kafka Connect, Deep Learning and Elasticsearch

- TensorFlow Serving + gRPC + Kafka Streams on GitHub => Stream Processing and RPC / Request-Response concepts combined: Model inference with Apache Kafka, Kafka Streams and a TensorFlow model deployed on a TensorFlow Serving model server

- Solving the impedance mismatch between Data Scientist and Production Engineer: Python, Jupyter, TensorFlow, Keras, Apache Kafka, KSQL

Requirements, Installation and Usage

The code is developed and tested on Mac and Linux operating systems. Because Kafka is not well supported on Windows, the examples have not been tested there at all.

Java 8 and Maven 3 are required. Maven will download all required dependencies.

Just download the project and run

mvn clean package

You can do this in the main directory or in each module separately.

Apache Kafka 2.5 is currently used. The code is also compatible with Kafka and Kafka Streams 1.1 and 2.x.

Please make sure to run the Maven build without any changes first. If it works without errors, you can change library versions, Java version, etc. and see if it still works or if you need to adjust code.

Every example includes an implementation and a unit test. The examples are very simple and lightweight, and no further configuration is needed to build and run them. For this reason, the generated models are also included, which increases the download size of the project.

The unit tests use Kafka helper classes such as EmbeddedSingleNodeKafkaCluster in package com.github.megachucky.kafka.streams.machinelearning.test.utils, so you can run them without any other configuration or Kafka setup. If you want to run the main class of an implementation in package com.github.megachucky.kafka.streams.machinelearning, you need to start a Kafka cluster (with at least one ZooKeeper node and one Kafka broker running) and create the required topics yourself. For that reason, check out the unit tests first.
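When a main class runs against your own cluster, the application has to be told where the brokers are. The following is a minimal sketch of such a configuration using only `java.util.Properties`. The keys are standard Kafka Streams configuration names, while the application id `ml-example-app` and the broker address `localhost:9092` are assumptions for a local single-broker setup, not values taken from this project.

```java
import java.util.Properties;

final class StreamsConfigSketch {
    // Builds a configuration for a Kafka Streams application.
    // applicationId and bootstrapServers are supplied by the caller
    // (the values used in main are hypothetical).
    static Properties buildConfig(String applicationId, String bootstrapServers) {
        Properties props = new Properties();
        props.put("application.id", applicationId);
        props.put("bootstrap.servers", bootstrapServers);
        // Treat record keys and values as plain strings by default.
        props.put("default.key.serde", "org.apache.kafka.common.serialization.Serdes$StringSerde");
        props.put("default.value.serde", "org.apache.kafka.common.serialization.Serdes$StringSerde");
        return props;
    }

    public static void main(String[] args) {
        Properties props = buildConfig("ml-example-app", "localhost:9092");
        props.list(System.out);
    }
}
```

A `Properties` object like this can be passed to the `KafkaStreams` constructor together with the topology. The input and output topics still have to exist on the cluster before the application starts.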

Example 1 - Gradient Boosting with H2O.ai for Prediction of Flight Delays

Detailed info in h2o-gbm

Example 2 - Convolutional Neural Network (CNN) with TensorFlow for Image Recognition

Detailed info in tensorflow-image-recognition

Example 3 - Iris Prediction using a Neural Network with DeepLearning4J (DL4J)

Detailed info in dl4j-deeplearning-iris

Example 4 - Python + Keras + TensorFlow + DeepLearning4J

Detailed info in tensorflow-keras