Appleseed Versions

A modern open source rendering engine for animation and visual effects

2.1.0-beta

These are the release notes for appleseed 2.1.0-beta.

These notes are part of a larger release; check out the main announcement for details.

This release of appleseed has a DOI: https://zenodo.org/record/3456967

Contributors

This release is the result of more than ten months of work by the incredibly talented and dedicated appleseed development team.

Many thanks to our code contributors for this release, in alphabetical order:

- Stephen Agyemang

- Luis Barrancos

- Sagnik Basu

- François Beaune

- Mandeep Bhutani

- Lovro Bosnar

- Rafael Brune

- Matt Chan

- João Marcos Mororo Costa

- Herbert Crepaz

- Junchen Deng

- Jonathan Dent

- Mayank Dhiman

- Dorian Fevrier

- Karthik Ramesh Iyer

- Dibyadwati Lahiri

- Kevin Masson

- Gray Olson

- Achal Pandey

- Jino Park

- Sergo Pogosyan

- Bassem Samir

- Oleg Smolin

- Esteban Tovagliari

- Thibault Vergne

- Luke Wilimitis

- Lars Zawallich

Many thanks as well to our internal testers, feature specialists and artists, in particular:

- Richard Allen

- François Gilliot

- Juan Carlos Gutiérrez

Interested in joining the appleseed development team, or want to get in touch with the developers? Join us on Discord. Simply interested in following appleseed's development and staying informed about upcoming appleseed releases? Follow us on Twitter.

Changelog

⭐️ Cryptomatte AOVs

appleseed now has native support for Cryptomatte via a pair of new Cryptomatte AOVs. Cryptomatte is a system to generate ID maps that work even in the presence of transparency, depth of field and motion blur.

(IKEA Home Office scene by Chau Tran. Feel free to download the original OpenEXR file to inspect embedded metadata.)

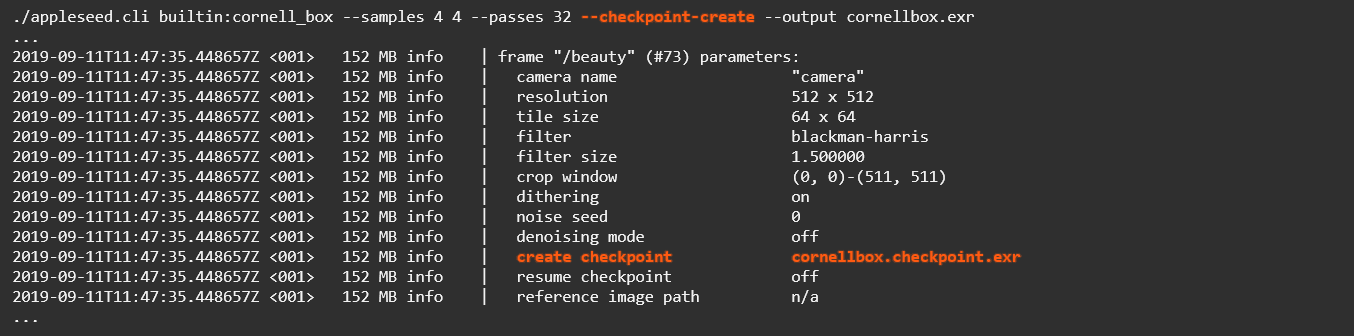

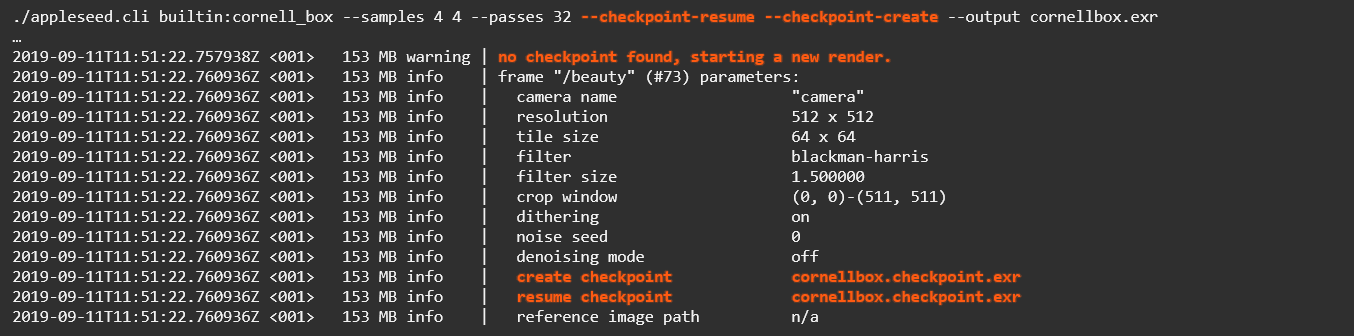
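For readers curious about how Cryptomatte IDs are formed, here is a minimal Python sketch of the ID hashing described in the public Cryptomatte specification: each object or material name is hashed with 32-bit MurmurHash3 (seed 0), and the hash bits are reinterpreted as a 32-bit float, with the lowest exponent bit flipped when needed so the ID is never a denormal, an infinity or a NaN. It illustrates the published scheme, not appleseed's own code, and the names murmur3_32 and name_to_id are hypothetical.

```python
import struct

MASK32 = 0xFFFFFFFF


def _rotl32(x: int, r: int) -> int:
    return ((x << r) | (x >> (32 - r))) & MASK32


def _mix_k(k: int) -> int:
    # Per-block scrambling step of MurmurHash3_x86_32.
    k = (k * 0xCC9E2D51) & MASK32
    k = _rotl32(k, 15)
    return (k * 0x1B873593) & MASK32


def murmur3_32(data: bytes, seed: int = 0) -> int:
    """MurmurHash3_x86_32 of a byte string, as an unsigned 32-bit integer."""
    h = seed & MASK32
    nblocks = len(data) // 4
    for i in range(nblocks):
        k = int.from_bytes(data[4 * i:4 * i + 4], "little")
        h ^= _mix_k(k)
        h = _rotl32(h, 13)
        h = (h * 5 + 0xE6546B64) & MASK32
    tail = data[4 * nblocks:]
    if tail:
        # The remaining 1 to 3 bytes are assembled little-endian, then mixed once.
        h ^= _mix_k(int.from_bytes(tail, "little"))
    # Finalization: fold in the length, then avalanche the bits.
    h ^= len(data) & MASK32
    h ^= h >> 16
    h = (h * 0x85EBCA6B) & MASK32
    h ^= h >> 13
    h = (h * 0xC2B2AE35) & MASK32
    h ^= h >> 16
    return h


def name_to_id(name: str) -> float:
    """Map a name to a Cryptomatte-style float32 ID (hypothetical helper)."""
    h = murmur3_32(name.encode("utf-8"))
    exponent = (h >> 23) & 0xFF
    if exponent in (0, 255):
        # Avoid denormals, infinities and NaNs by flipping the lowest exponent bit.
        h ^= 1 << 23
    return struct.unpack("<f", struct.pack("<I", h))[0]


if __name__ == "__main__":
    for name in ("chair", "desk", "lamp"):
        print(name, name_to_id(name))
```

Because the ID is a pure function of the name, a compositing tool can rebuild a matte for any object from the name alone, which is what makes the embedded metadata useful.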

⭐️ Render Checkpointing

We've added render checkpointing, a mechanism to resume multi-pass renders after they were interrupted (voluntarily or not), and to add rendering passes to a finished render.

At the moment render checkpointing is only exposed in appleseed.cli. Eventually it should become available in appleseed.studio as well.

Here's an example workflow: when starting your multi-pass render (notice the --passes option), you add the --checkpoint-create option to create/update the checkpoint file after each render pass:

After you've interrupted the render with CTRL+C, or, Heaven forbid, after appleseed crashed, you can simply resume the render from the last complete render pass by adding the --checkpoint-resume option:

You can also pass both --checkpoint-create and --checkpoint-resume at the same time to resume rendering from a checkpoint while continuing to update it as new passes are rendered:

Finally, you can pass both options even if no checkpoint exists yet, in which case rendering will simply start from the first pass:
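The four cases above boil down to a small decision table. The Python sketch below models it under stated assumptions: a checkpoint is represented only by the number of completed passes (or None when no checkpoint file exists), and plan_render and cli_args are hypothetical helpers that mirror the behavior described here, not appleseed.cli's implementation. cli_args uses only the options named above and assumes that --passes takes a count and that the project file is passed as a positional argument.

```python
from typing import List, Optional


def plan_render(total_passes: int, checkpoint_passes: Optional[int],
                create: bool, resume: bool) -> dict:
    """Model which passes get rendered and whether the checkpoint is updated.

    checkpoint_passes: passes completed in an existing checkpoint file, or
    None when no checkpoint file exists yet (hypothetical model).
    """
    first_pass = 0
    if resume and checkpoint_passes is not None:
        # Resume right after the last complete pass stored in the checkpoint.
        first_pass = min(checkpoint_passes, total_passes)
    return {
        "passes_to_render": list(range(first_pass, total_passes)),
        # With --checkpoint-create, the checkpoint is rewritten after every pass.
        "updates_checkpoint": create,
    }


def cli_args(project: str, passes: int, create: bool, resume: bool) -> List[str]:
    """Assemble an appleseed.cli argument list (argument layout is an assumption)."""
    args = ["appleseed.cli", project, "--passes", str(passes)]
    if create:
        args.append("--checkpoint-create")
    if resume:
        args.append("--checkpoint-resume")
    return args
```

For example, resuming a 32-pass render from a checkpoint that holds 16 completed passes only renders passes 16 to 31, which is also how passes get added to an already finished render.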

⭐️ OSL Source Shaders Support

appleseed can now compile OSL source shaders on the fly. This feature is currently exposed in our Blender plugin. In the following screenshot, the user has written a small OSL shader that remaps its input to a color using a custom color map, then connected it to the V texture coordinate of the object:

Future versions of the 3ds Max and Maya plugins will expose this feature in a similar manner.

⭐️ Fisheye Lens Camera

We've added a new fisheye lens camera model with support for equisolid angle, equidistance, stereographic and Thoby projections.

Here is a render of the Japanese Classroom scene with the fisheye lens using a 120° horizontal field of view and a stereographic projection:

(Japanese Classroom scene by Blend Swap user NovaZeeke, converted to Mitsuba format by Benedikt Bitterli, then to appleseed format via the mitsuba2appleseed.py script that ships with appleseed.)
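The four projections differ in how the angle θ between an incoming ray and the optical axis maps to a radial distance r on the image plane. The Python sketch below uses the textbook mapping functions for a focal length f: equidistance r = fθ, equisolid angle r = 2f sin(θ/2), stereographic r = 2f tan(θ/2), and Thoby's empirical r = 1.47 f sin(0.713θ). appleseed's internal parameterization may differ, and the function names and the 36 mm sensor width are assumptions for illustration.

```python
import math

# Textbook fisheye mapping functions r(theta, f).
PROJECTIONS = {
    "equidistance": lambda theta, f: f * theta,
    "equisolid_angle": lambda theta, f: 2.0 * f * math.sin(theta / 2.0),
    "stereographic": lambda theta, f: 2.0 * f * math.tan(theta / 2.0),
    "thoby": lambda theta, f: 1.47 * f * math.sin(0.713 * theta),
}


def image_radius(projection: str, theta: float, focal_length: float) -> float:
    """Radial image-plane distance for a ray at angle theta (radians) off-axis."""
    return PROJECTIONS[projection](theta, focal_length)


def focal_length_for_fov(projection: str, horizontal_fov_deg: float,
                         half_width: float) -> float:
    """Focal length that maps half the horizontal FOV to the image half width."""
    theta_max = math.radians(horizontal_fov_deg) / 2.0
    # Every mapping above is linear in f, so solve r(theta_max, 1) * f = half_width.
    return half_width / image_radius(projection, theta_max, 1.0)


if __name__ == "__main__":
    # Mirrors the 120-degree stereographic setup described above;
    # the 36 mm sensor (18 mm half width) is an arbitrary assumption.
    f = focal_length_for_fov("stereographic", 120.0, 18.0)
    print(f"focal length: {f:.3f} mm")
```

Comparing the functions at the same θ shows why the projections look different: stereographic grows fastest toward the edge (less compression of the periphery), while equisolid angle grows slowest.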

⭐️ Texture-Controlled Pixel Renderer

We've added a way to control how many samples each pixel will receive based on a user-provided black-and-white mask. This makes it possible to eliminate sampling noise in specific parts of a render without adding samples to areas that are already smooth. This is yet another tool in the toolbox, complementing the new adaptive tile sampler introduced in appleseed 2.0.0-beta and the per-object shading quality control that has been present in appleseed since its early days.

In the following mosaic, the top-left image (1) is the base render using 128 samples for each pixel; the top-right image (2) is a user-painted mask where black corresponds to 128 samples/pixel, white corresponds to 2048 samples/pixel and gray levels correspond to intermediate values; the bottom-left image (3) is the render produced with the new texture-controlled pixel renderer using the mask; the bottom-right image (4) is the Pixel Time AOV where the color of each pixel reflects the relative amount of time spent rendering it (the brighter the pixel, the longer it took to render it):
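The description above does not spell out how intermediate gray levels map to sample counts. The Python sketch below assumes a linear interpolation between the black and white sample counts used in this example (128 and 2048), rounded to a whole number of samples; both the linear mapping and the helper names are assumptions for illustration.

```python
from typing import Sequence


def samples_for_pixel(mask_value: float, min_samples: int = 128,
                      max_samples: int = 2048) -> int:
    """Map a mask value in [0, 1] (0 = black, 1 = white) to a sample count.

    Assumption: linear interpolation between min_samples and max_samples.
    """
    t = min(max(mask_value, 0.0), 1.0)  # clamp out-of-range mask values
    return round(min_samples + t * (max_samples - min_samples))


def sample_budget(mask: Sequence[Sequence[float]]) -> int:
    """Total samples needed for a mask given as rows of values in [0, 1]."""
    return sum(samples_for_pixel(v) for row in mask for v in row)
```

With an all-black mask the budget equals that of the uniform 128-sample render, so the extra cost is concentrated in the areas painted brighter.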

⭐️ Filter Importance Sampling

We switched appleseed to use Filter Importance Sampling instead of filtered sample splatting. This new technique has three advantages over the previous one: lower noise for a given number of samples per pixel, less waste (tile borders are no longer required), and statistically independent pixels, meaning in practice that modern denoisers should work a lot better when applied to images produced by appleseed.

To illustrate this last point, here is an appleseed render of the Modern Hall scene denoised with Intel® Open Image Denoise, a set of open source, high quality, machine learning-based denoising filters:

(Modern Hall scene by Blend Swap user NewSee2l035, converted to Mitsuba format by Benedikt Bitterli, then to appleseed format via the mitsuba2appleseed.py script that ships with appleseed.)
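In filter importance sampling, sample positions are drawn from the pixel filter's own distribution, so each sample contributes to exactly one pixel with equal weight instead of being splatted with filter weights into several neighboring pixels. Here is a minimal Python sketch for one pixel, assuming a separable tent filter (chosen because its inverse CDF has a closed form; appleseed's filters may differ) and a hypothetical radiance(x, y) callback standing in for the renderer.

```python
import math
import random
from typing import Callable


def sample_tent(u: float, radius: float) -> float:
    """Invert the CDF of a 1D tent filter (pdf proportional to 1 - |x| / radius)."""
    if u < 0.5:
        return radius * (math.sqrt(2.0 * u) - 1.0)
    return radius * (1.0 - math.sqrt(2.0 - 2.0 * u))


def render_pixel_fis(px: int, py: int, radiance: Callable[[float, float], float],
                     spp: int, rng: random.Random, radius: float = 1.0) -> float:
    """Estimate pixel (px, py) by sampling offsets from a separable tent filter.

    Positions already follow the filter's distribution, so the estimate is a
    plain average: no per-sample filter weights, no splatting into neighbors.
    """
    total = 0.0
    for _ in range(spp):
        dx = sample_tent(rng.random(), radius)
        dy = sample_tent(rng.random(), radius)
        total += radiance(px + 0.5 + dx, py + 0.5 + dy)
    return total / spp
```

Since no sample is shared between pixels, the noise in neighboring pixels is uncorrelated, which is the property that denoisers rely on.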

Other New Features and Improvements

appleseed

New Features and Improvements

- Added controls to fix or vary sampling patterns per frame.

- Implemented optional dithering of the frame (AOVs are not affected).

- Implemented new energy conservation algorithm.

- Allow stopping progressive rendering after a given amount of time.

- Print estimated remaining render time.

- Record light paths due to image-based lighting.

- Keep texture files open during a rendering session (in progressive mode or multi-pass final mode).

- Added as_matte() closure and updated OSL shaders to use it.

- Allow scaling render stamps.

- Allow flipping environment maps.

AOVs

- Added Screen-Space Velocity AOV.

- Added Pixel Error AOV.

- Use Inferno color map in diagnostic AOVs (Pixel Sample Count, Pixel Time, Pixel Variation and Pixel Error AOVs).

- Added an alpha channel to the Depth AOV, allowing background pixels to use depth = 0 instead of some extremely large value that causes problems with some applications.

- Normalize pixel variation with respect to the noise threshold set by the user in the Pixel Variation AOV.

Shading Overrides

- Added Objects, Assemblies and World-Space Velocity shading overrides.

- Prevent accidental shading override changes during final rendering.

SPPM

- Added Max Ray Intensity setting to SPPM lighting engine.

- Disallow progressive rendering with SPPM lighting engine.

Logging

- Display enabled library features as part of appleseed's version information.

- Print texture store settings when rendering starts.

- Improved tile statistics and log messages in Adaptive Tile Renderer.

- Properly handle and report I/O errors when loading a reference image.

- Increased average noise level precision in Adaptive Tile Renderer log message.

- Increased precision of floating point values in *.obj and *.appleseed files.

- Print project writing and packing times.

- Emit log message when closing texture file.

- Emit log messages when writing AOVs to disk.

- Changed procedural assemblies expansion summary from debug to info messages.

OpenImageIO

- Allow saving frames in all formats supported by OpenImageIO.

- Look for OpenImageIO plugins in the project search paths.

- Added sample procedural texture OpenImageIO plugin.

- Bundled OpenImageIO's idiff tool.

Miscellaneous

- If a color entity has multiple alpha values, keep the first one.

- Improved rendering error analysis.

- Made Cornell Box's background solid black.

- Replaced Max Samples by Max Average Samples Per Pixel in interactive mode.

- Introduced disk, rectangle and sphere procedural objects.

- Entity paths now start with a /.

- Binary Curve file format specifications are now in the wiki.

- Binary Mesh file format specifications are now in the wiki.

Bug Fixes

- Fixed Oren-Nayar BRDF's diffuse model.

- Fixed Albedo AOV for Oren-Nayar BRDF when roughness is zero.

- Fixed Albedo AOV for Diffuse BTDF.

- Fixed Position AOV for background pixels.

- Handle multipass rendering in Pixel Sample Count AOV.

- Handle multipass rendering in Pixel Variation AOV.

- Don't set pixels outside the crop window in AOVs.

- Don't apply Max Ray Intensity after specular bounces.

- Load plugins from paths set in the APPLESEED_SEARCHPATH environment variable even if a project does not define any explicit search path.

- Fixed rendering of Disney built-in materials that have an EDF set.

- Fixed Sun orientation when bound to environment EDF with a transform.

- Fixed banding with low resolution environment maps.

- Fixed time displayed in render stamp when rendering has been paused.

- Fixed occasional crash with procedural objects.

- Fixed intersection of procedural objects.

- Honor visibility flags on instances of procedural objects.

- Fixed occasional NaN values in the latitude-longitude environment EDF.

- Fixed invalid samples warnings in diagnostic surface shader.

- Use case-insensitive comparison when checking file extensions.

- Fixed infinite loop when OSL shading system's initialization fails.

- Fixed Sampler combobox when loading project that uses adaptive sampling.

- Removed redundant color and alpha parameters from color entities.

- Switch built-in color maps to linear RGB.

- Linearize custom color map image file if necessary.

- Fixed anisotropy when shading samples is greater than 1.

- Fixed required parameter "volume_parameterization" not found error message whenever an OSL shader uses the as_glass() closure.

- Fixed crash in presence of light-emitting curves.

- Fixed World-Space Position diagnostic mode to show normalized coordinates relative to the scene's bounding box instead of absolute coordinates.

- Fixed World-Space Position diagnostic mode for flat (2D) or otherwise degenerate scenes.

- Include all pixels when computing average noise threshold in Adaptive Tile Renderer.

- Fixed Adaptive Tile Renderer metadata.

- Fixed small robustness issues in Physical Sun light.

Removed Features

- The Glossy, Plastic, Metal and Glass BSDFs now exclusively use the GGX microfacet model.

- Removed deprecated Adaptive Pixel Renderer: it has been replaced by a new Adaptive Tile Renderer in appleseed 2.0.0-beta.

appleseed.studio

New Features and Improvements

- Switch to Qt 5.12. This may fix various issues on modern systems, such as those related to high DPI displays.

- Allow setting the default number of rendering threads in the global Settings dialog.

- Made Advanced sections in Rendering Settings dialog collapsible.

- Expose light importance sampling option in Rendering Settings dialog.

- Switch light paths visualization to OpenGL 3.3 (Core Profile).

- Use Reinhard tone mapping in light paths visualization widget.

- CTRL+Enter now closes the Search Paths dialog.

- Made View → Fullscreen menu item checkable.

- Allow drag and drop of materials to render widget.

- Disallow dropping text into the main window.

- Use OpenImageIO files filter instead of Qt one when saving renders.

- Print paths to Python's site-packages and appleseed Python module's directories on startup.

- Print warnings if Python's site-packages or appleseed Python module's directories cannot be found.

- Manually saving settings now also saves the state of the user interface.

- Print detailed diagnostic messages about the value of the PYTHONHOME environment variable and the likely consequences.

- Adjust width of Noise Threshold widget.

Bug Fixes

- Fixed rare crash when quickly stopping then restarting a render.

- Fixed crash if a numeric input has more than one value in the Entity Editor.

- Search paths from the APPLESEED_SEARCHPATH environment variable were saved as explicit search paths in projects.

- The project's root path was not updated when the project was saved to another location.

- Fixed locale issues with OpenColorIO.

- Fixed push button style on macOS.

appleseed.cli

New Features and Improvements

- Added --disable-abort-dialogs option to disable abort dialogs (Windows only).

- appleseed.cli now prints its exit code.

Bug Fixes

- Fixed convergence statistics when using appleseed.cli.

- Preserve post-processing stages when recreating frame in appleseed.cli.

Removed Features

- Removed --to-mplay and --to-hrmanpipe command line options.

Python Bindings

New Features and Improvements

- Added the following class to Python bindings:

  - ShaderCompiler

- Added the following entry points to Python bindings:

  - AOV.get_cryptomatte_image()
  - Project.get_post_processing_stage_factory_registrar()
  - Project.get_volume_factory_registrar()
  - ShaderGroup.add_source_shader()
  - ShaderQuery.open_bytecode()
  - get_lib_compilation_date()
  - get_lib_compilation_time()
  - get_lib_configuration()
  - get_lib_cpu_features()
  - get_lib_name()
  - get_lib_version()
  - get_synthetic_version_string()
  - get_third_parties_versions()
  - oiio_make_texture()

Removed Features

- Removed the following entry point from Python bindings (breaking change):

  - Project.reinitialize_factory_registrars()

OSL Shaders

New Features and Improvements

- Added color inversion shader (as_invert_color).

- Added Color Decision List shader (as_asc_cdl).

- Increased default number of bounces in shaders from 4 or 8 to 100.

- Increased default number of bounces in as_subsurface shader from 2 to 8.

- Updated OSL language specification document (docs/osl/osl-languagespec.pdf) to version 1.10.

- Removed blenderseed/as_closure2surface.osl shader as it is redundant with appleseed/as_closure2surface.osl.

- Made Energy Compensation parameter of as_metal shader non-texturable.

Bug Fixes

- Fixed handling of Oren-Nayar BRDF's diffuse albedo in appleseed Standard Surface shader.

- Fixed Fresnel at grazing angles when normal mapping is used with the appleseed Standard Surface shader.

- Fixed normal blending in as_triplanar shader.

Other Tools

- The animatecamera tool now varies the sampling pattern per frame.

- Added --motion-blur option to animatecamera tool.

- Added --disable-abort-dialogs option to all command line tools to disable abort dialogs (Windows only).

- Allow input file of mitsuba2appleseed.py to be anywhere.

2.0.0-beta

These are the release notes for appleseed 2.0.0-beta.

These notes are part of a larger release; check out the main announcement for details.

This release of appleseed has a DOI: https://zenodo.org/record/3384658

Contributors

This release is the result of six months of work by the incredibly talented and dedicated appleseed development team.

Many thanks to our code contributors for this release:

- Luis Barrancos

- François Beaune

- Artem Bishev

- David Coeurjolly

- Herbert Crepaz

- Jonathan Dent

- Alex Fuller

- Thomas Manceau

- Kevin Masson

- Fedor Matantsev

- Jino Park

- Sergo Pogosyan

- Girish Ramesh

- Esteban Tovagliari

Many thanks as well to our internal testers, feature specialists and artists, in particular:

- Richard Allen

- François Gilliot

- Juan Carlos Gutiérrez

Interested in joining the appleseed development team, or want to get in touch with the developers? Join us on Discord. Simply interested in following appleseed's development and staying informed about upcoming appleseed releases? Follow us on Twitter.

Changelog

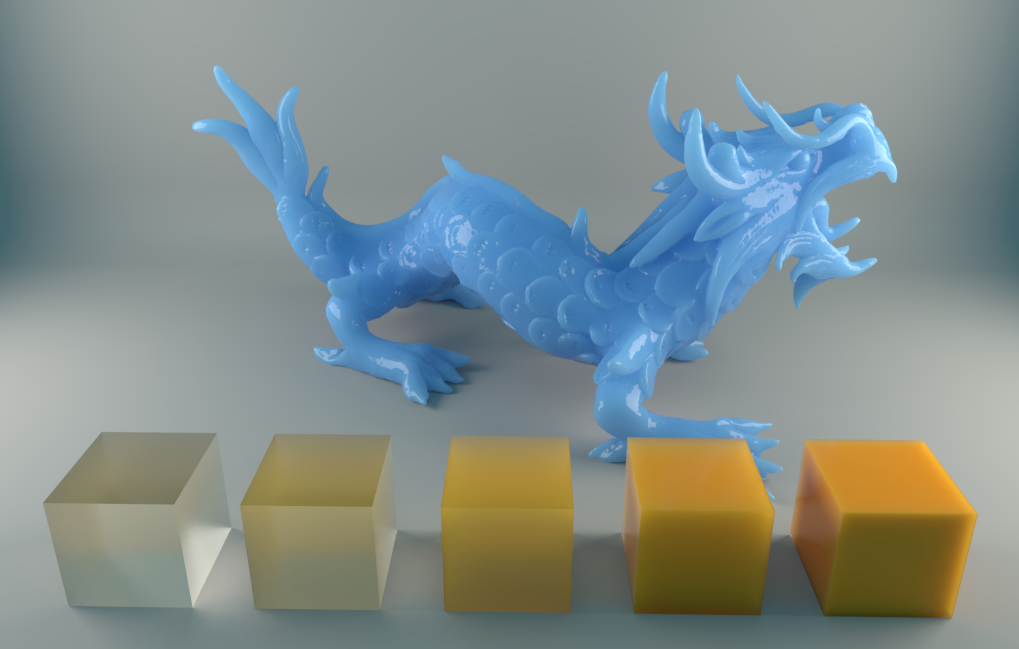

⛏ Improved: Random-Walk Subsurface Scattering

We made a number of improvements to our random-walk subsurface scattering implementation:

- Fixed white edge artifacts.

- Added volume anisotropy support.

- The Random-Walk BSSRDF now supports two surface models: Lambertian BTDF (perfectly diffuse transparency) and Glass BSDF.

- Fixed Fresnel term when using the Lambertian BTDF surface model.

- Exposed Random-Walk BSSRDF to OSL:

  - For the Lambertian BTDF surface model: via a new randomwalk SSS profile for the as_subsurface() closure;
  - For the Glass BSDF surface model: via a new as_randomwalk_glass() closure.

(Geometry, textures and environment map by 3D Scan Store, scene reconstruction and skin shader by Juan Carlos Gutiérrez.)

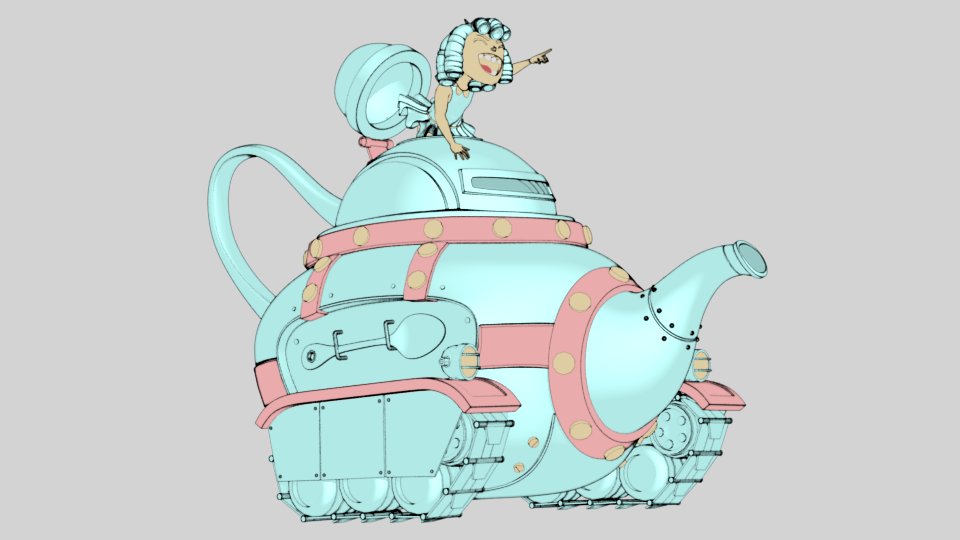

⭐️ New: Non-Photorealistic Rendering

We did some initial work on non-photorealistic rendering support in appleseed. We added two new OSL closures, as_npr_shading() and as_npr_contour(), as well as a new OSL shader, as_toon.

(Model by Brice Laville, concept by Tom Robinson, render by Esteban Tovagliari - RenderMan "Rolling Teapot" Art Challenge.)

(Original model by Blend Swap user Ricardo28roi, rig by Blend Swap user daren, render by Luis Barrancos.)

Finally, we added two new AOVs: NPR Shading (npr_shading_aov) and NPR Contour (npr_contour_aov).

⭐️ New: Post-Processing Pipeline

This release introduces a new post-processing pipeline that makes it possible to apply treatments to a render without leaving appleseed.

The key (but still experimental) component of this new post-processing pipeline is the Color Map stage: it makes it possible to visualize the Rec. 709 relative luminance of a render through a number of predefined color maps, or through a custom color map defined by an image file.

Five predefined color maps are available: the venerable Jet color map popularized by MATLAB, and four modern, "perceptually uniform sequential" color maps from Matplotlib: Inferno, Magma, Plasma and Viridis.

A color legend can also be included in the render.

A future version of the Color Map stage may make it possible to visualize other quantities such as photometric luminance (in cd/m²), radiance (in W/sr/m²) or irradiance (in W/m²).
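As a rough illustration of what the Color Map stage computes, the Python sketch below takes linear RGB pixels, computes Rec. 709 relative luminance (Y = 0.2126 R + 0.7152 G + 0.0722 B), normalizes it by the image's maximum luminance (an assumption; the stage's actual range handling is not described here), and looks the result up in a color map by piecewise-linear interpolation. The three-stop map is a hypothetical stand-in, not Inferno, Jet or any of the predefined maps.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[float, float, float]

# Hypothetical 3-stop color map: dark blue -> green -> yellow.
EXAMPLE_MAP: List[RGB] = [(0.0, 0.0, 0.5), (0.0, 0.8, 0.2), (1.0, 1.0, 0.0)]


def rec709_luminance(rgb: RGB) -> float:
    """Relative luminance of a linear Rec. 709 RGB triple."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def lookup(color_map: Sequence[RGB], t: float) -> RGB:
    """Piecewise-linear lookup of t in [0, 1] in an evenly spaced color map."""
    t = min(max(t, 0.0), 1.0)
    x = t * (len(color_map) - 1)
    i = min(int(x), len(color_map) - 2)  # index of the left stop
    f = x - i
    c0, c1 = color_map[i], color_map[i + 1]
    return tuple(a + (b - a) * f for a, b in zip(c0, c1))


def false_color(pixels: List[RGB], color_map: Sequence[RGB] = EXAMPLE_MAP) -> List[RGB]:
    """Replace each pixel by the color-mapped value of its relative luminance."""
    lum = [rec709_luminance(p) for p in pixels]
    max_lum = max(lum) or 1.0  # assumption: normalize by the brightest pixel
    return [lookup(color_map, y / max_lum) for y in lum]
```

A custom color map defined by an image file would simply provide more stops to the same lookup.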

Beauty render:

(Country Kitchen scene by Blend Swap user Jay-Artist, converted to Mitsuba format by Benedikt Bitterli, then to appleseed format via the mitsuba2appleseed.py script that ships with appleseed.)

False colors, Inferno color map:

False colors, Jet color map:

False colors, Magma color map:

False colors, Plasma color map:

False colors, Viridis color map:

In addition, the Color Map stage can render relative luminance isolines, that is, lines of equal relative luminance in the render:

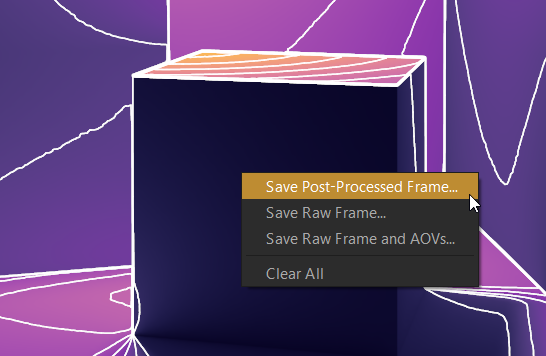
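One simple way to draw luminance isolines, sketched below in Python, is to quantize the normalized luminance into a fixed number of bands and mark every pixel whose band differs from that of its right or bottom neighbor. The band count, the normalization by the maximum luminance and the helper name are assumptions for illustration; the Color Map stage may compute isolines differently.

```python
from typing import List


def isoline_mask(luminance: List[List[float]], levels: int = 10) -> List[List[bool]]:
    """Mark pixels where normalized luminance crosses one of `levels` band edges."""
    max_lum = max(max(row) for row in luminance) or 1.0
    # Band index of each pixel after normalizing luminance to [0, 1].
    bands = [[min(int(v / max_lum * levels), levels - 1) for v in row]
             for row in luminance]
    height, width = len(bands), len(bands[0])
    mask = [[False] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            if x + 1 < width and bands[y][x + 1] != bands[y][x]:
                mask[y][x] = True  # crossing between this pixel and its right neighbor
            if y + 1 < height and bands[y + 1][x] != bands[y][x]:
                mask[y][x] = True  # crossing between this pixel and its bottom neighbor
    return mask
```

Overlaying the mask on the beauty render, or on a false-color image, yields lines of equal relative luminance.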

Color mapping and isolines can also be applied right from appleseed.studio without having to add them as post-processing stages and re-rendering the scene:

Post-processed renders can be saved to disk:

Finally, the render stamp feature introduced in appleseed 1.9.0-beta has been converted to a post-processing stage (projects are automatically updated).

⭐️ New: Adaptive Tile Sampling

During his Google Summer of Code 2018 participation, Kevin Masson implemented a new adaptive tile sampler that provides superior performance over the former adaptive pixel sampler (which is now deprecated). The new adaptive tile sampler is based on a number of recent papers. Please check out Kevin's GSoC 2018 report for details and references to relevant papers.

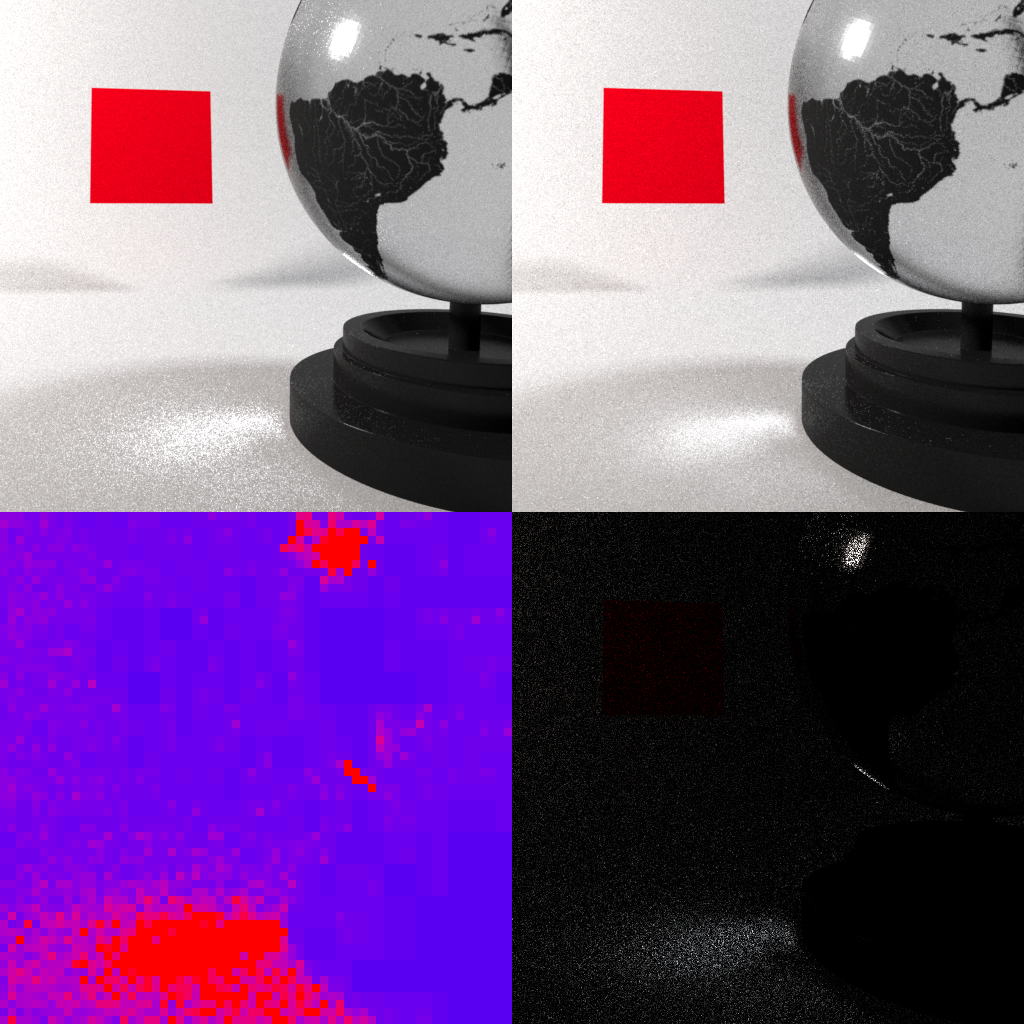

Here is an equal-time comparison between the uniform tile sampler and the new adaptive one:

(Earth texture from Shaded Relief, scene and renders by Kevin Masson. Top-left: uniform sampling; top-right: adaptive sampling; bottom-left: Pixel Sample Count AOV (see below); bottom-right: difference between uniform and adaptive sampling.)

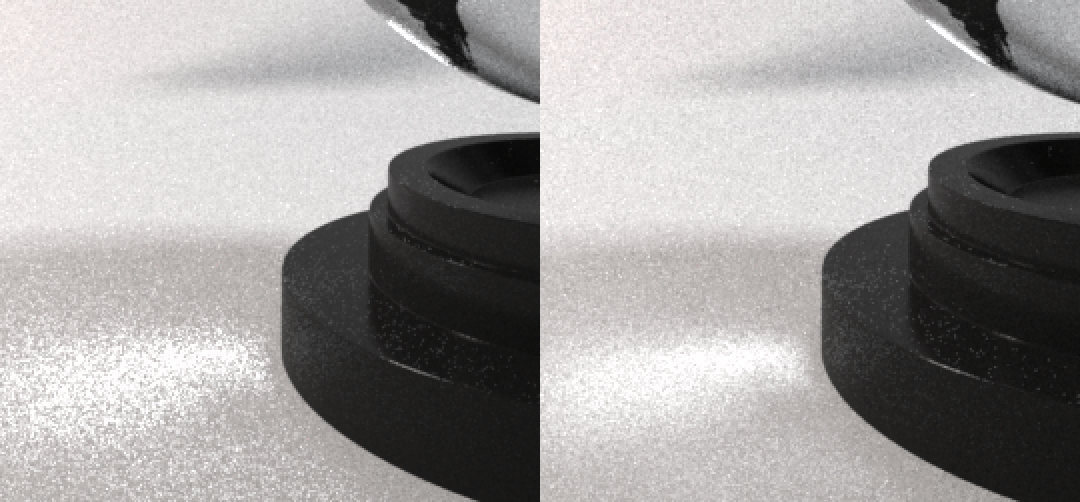

Close-up:

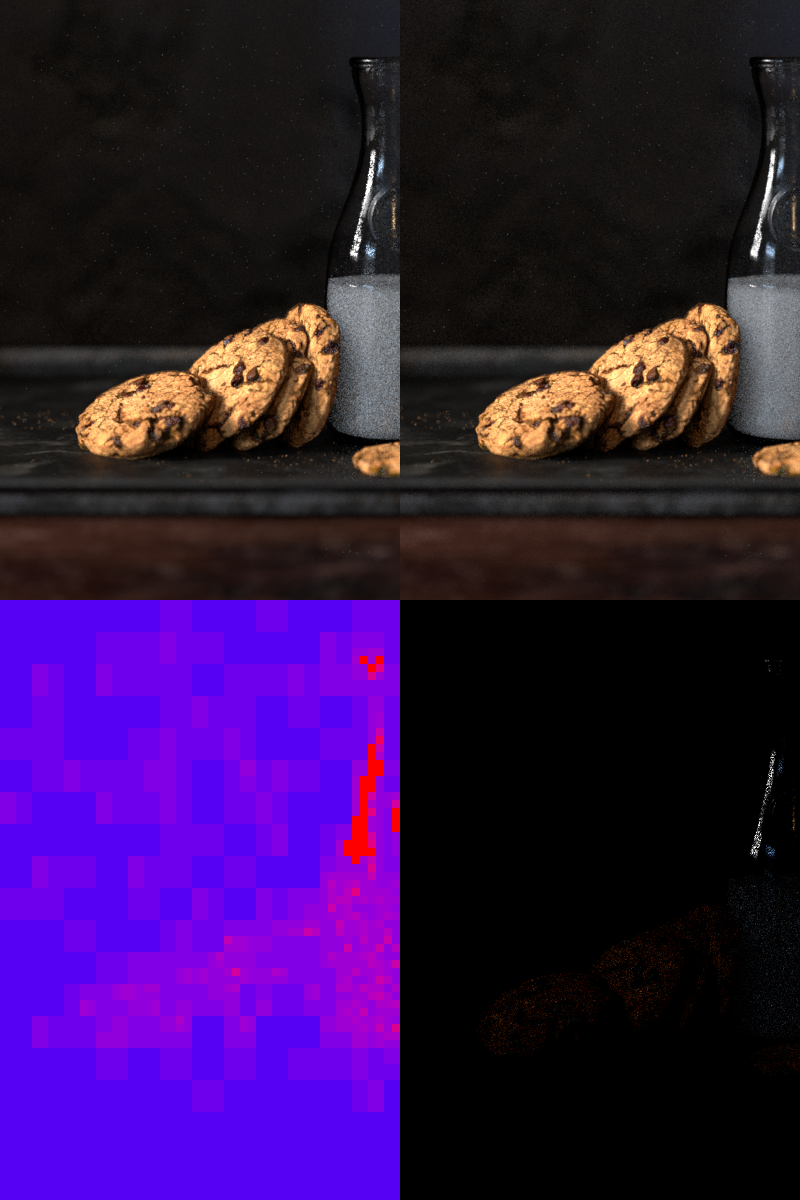

Here is another equal-time comparison:

(Cookies & Milk scene by Harsh Agrawal, render by Kevin Masson.)

Close-up:

Two AOVs have also been added:

- In the Pixel Sample Count AOV (pixel_sample_count_aov), pixels in blue are those that received the fewest samples while pixels in red are those that received the most.

- In the Pixel Variation AOV (pixel_variation_aov), pixels in blue are those that contain the lowest noise while pixels in red are those that contain the highest.
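The general idea behind adaptive tile sampling can be sketched as follows: keep adding batches of samples to a tile until a noise estimate drops below a threshold or a sample cap is reached. The Python sketch below uses the standard error of each pixel's mean as the noise estimate and stops the whole tile once its noisiest pixel is below the threshold. It is a simplification for illustration, not the algorithm described in Kevin's report; sample_fn and all parameter names are hypothetical.

```python
import math
from typing import Callable, List, Tuple


def adaptive_tile(sample_fn: Callable[[int], float], pixels: int, batch: int = 16,
                  min_spp: int = 16, max_spp: int = 256,
                  threshold: float = 0.01) -> Tuple[List[float], int]:
    """Sample one tile in batches until its noisiest pixel is below `threshold`.

    sample_fn(p) returns one radiance sample for pixel p (hypothetical callback).
    Returns the per-pixel means and the number of samples each pixel received.
    """
    n = 0
    sums = [0.0] * pixels
    sq_sums = [0.0] * pixels
    while n < max_spp:
        for p in range(pixels):
            for _ in range(batch):
                v = sample_fn(p)
                sums[p] += v
                sq_sums[p] += v * v
        n += batch
        if n < min_spp:
            continue
        # Noise estimate: standard error of the mean of the worst pixel in the tile.
        worst = 0.0
        for p in range(pixels):
            mean = sums[p] / n
            variance = max(sq_sums[p] / n - mean * mean, 0.0)
            worst = max(worst, math.sqrt(variance / n))
        if worst < threshold:
            break
    return [s / n for s in sums], n
```

Smooth tiles stop after min_spp samples while noisy tiles keep sampling up to max_spp, which is where the gain in equal-time comparisons comes from.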

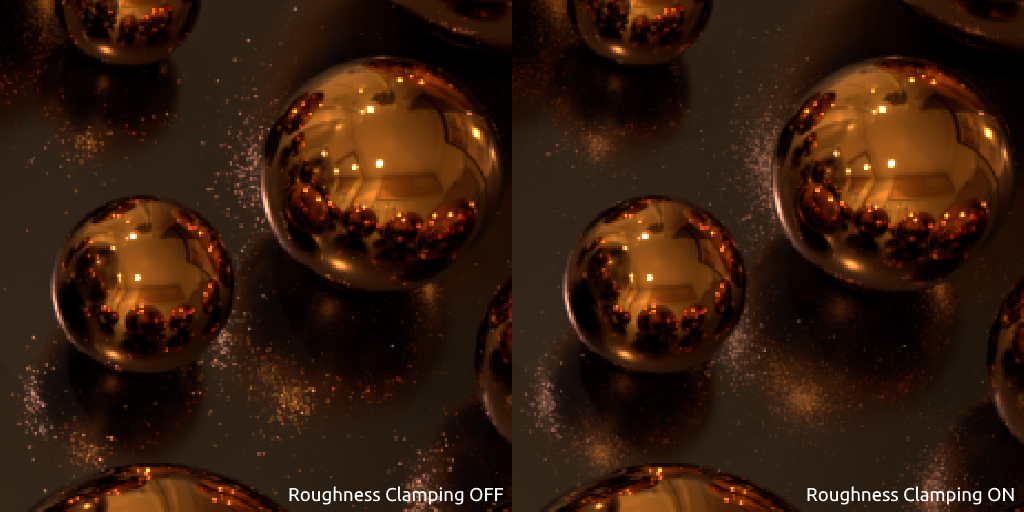

⭐️ New: Roughness Clamping

appleseed now supports Roughness Clamping, a trick popularized by Arnold that reduces fireflies in scenes with lots of glossy and specular surfaces:

Roughness Clamping enabled:

Roughness Clamping disabled:

Close-ups:
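A common formulation of roughness clamping works along each light path: once a ray has bounced off a rough surface, the roughness used at every later bounce is raised to at least the largest roughness seen so far, so later near-specular bounces cannot produce tiny, extremely bright highlights (fireflies). The Python sketch below applies that rule to the roughness values met along one path; it illustrates the idea only and makes no claim about appleseed's exact rule.

```python
from typing import List


def clamp_path_roughness(roughness_along_path: List[float]) -> List[float]:
    """Return the effective roughness used at each bounce of a single path.

    Each bounce uses max(its own roughness, largest roughness at earlier bounces).
    """
    effective = []
    max_so_far = 0.0
    for roughness in roughness_along_path:
        max_so_far = max(max_so_far, roughness)
        effective.append(max_so_far)
    return effective
```

A path whose first hit is a perfect mirror keeps a roughness of 0 at that bounce, so directly visible reflections stay sharp; only bounces that follow a rougher one are blurred.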

⭐️ New: Albedo AOV

We added an Albedo AOV (albedo_aov) to capture the "base color" of surfaces. AOVs are not yet exposed in appleseed.studio; however, the Albedo AOV is accessible via the new Albedo diagnostic mode, which replaced the old Color one:

Here is the result of rendering the Wooden Staircase scene using the Albedo diagnostic mode:

(Wooden Staircase scene by Blend Swap user Wig42, converted to Mitsuba format by Benedikt Bitterli, then to appleseed format via the mitsuba2appleseed.py script that ships with appleseed.)

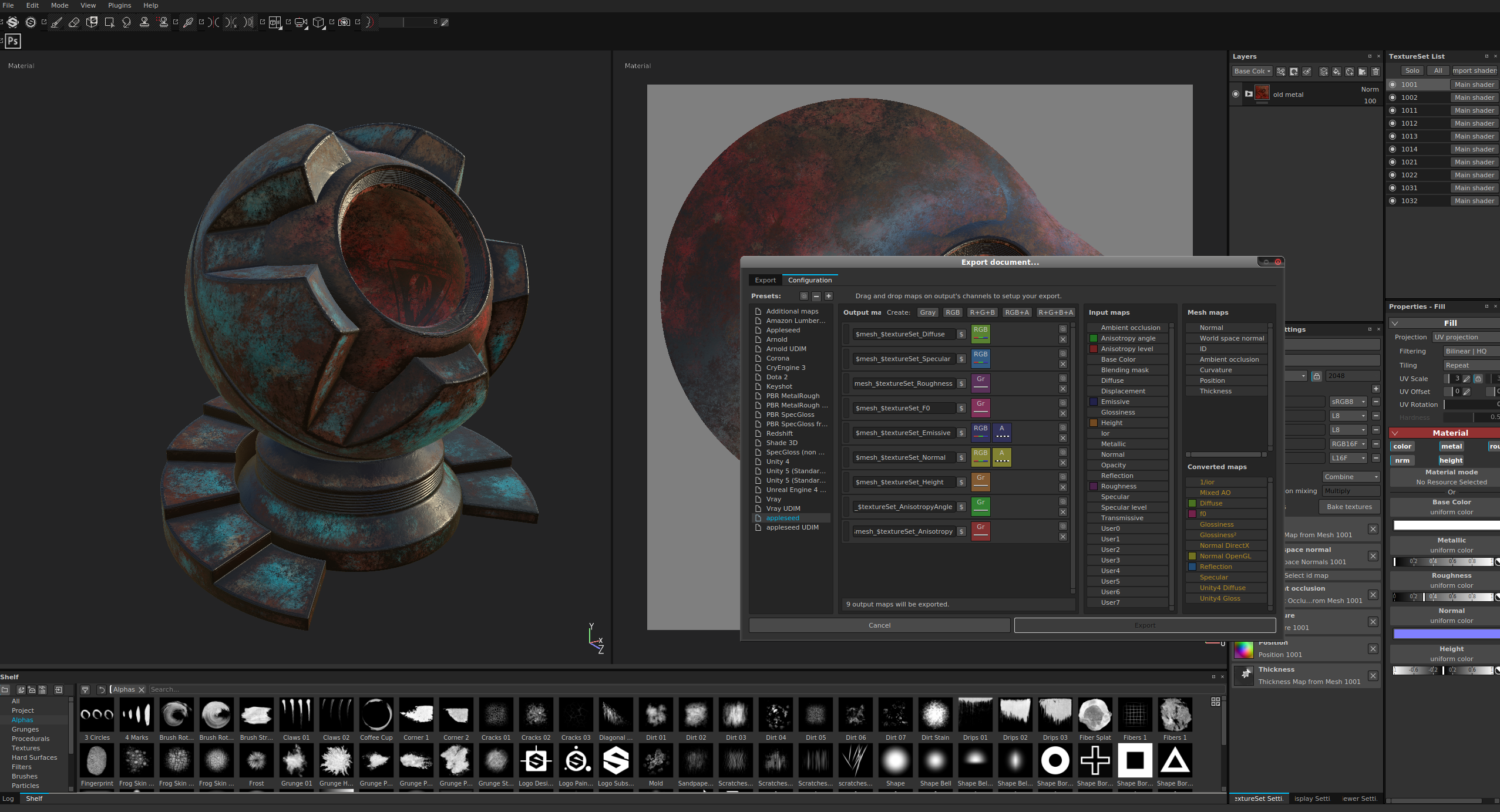

⭐️ New: Substance Painter-Compatible OSL Shader

This release includes as_sbs_pbrmaterial, a new OSL shader that matches Allegorithmic's Substance Painter shading model in the metallic/roughness workflow.

You can find out all about this new shader in the documentation.

Here are a few renders using this shader:

(Renders by Luis Barrancos.)

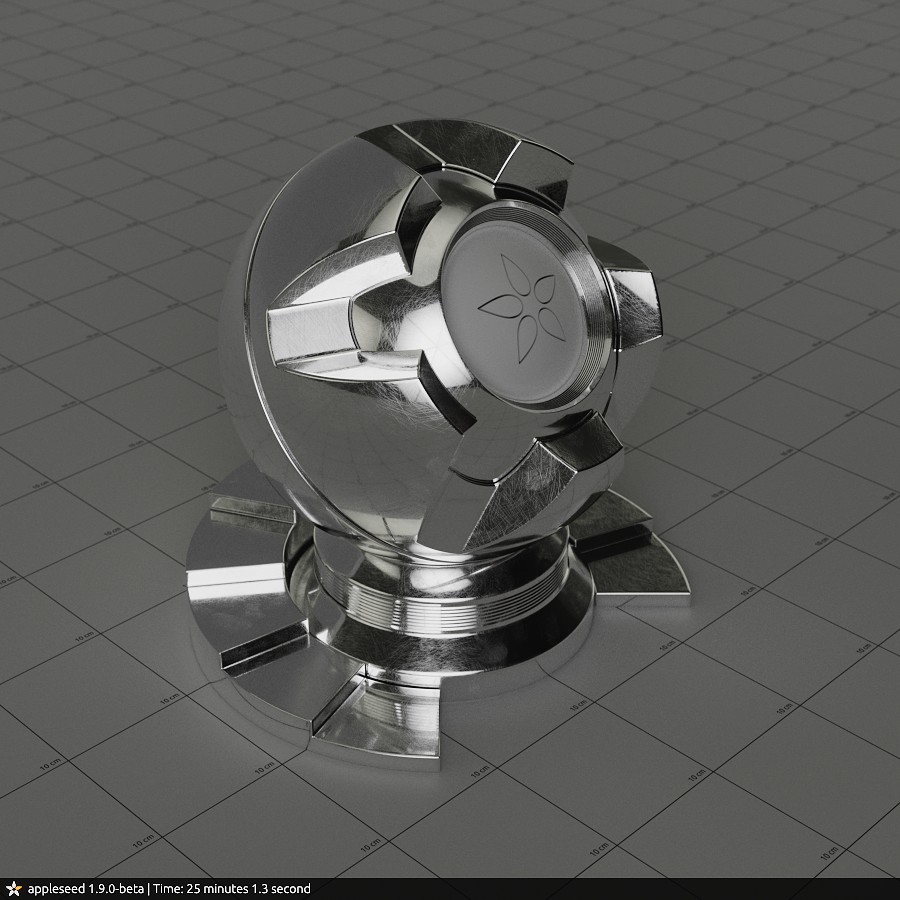

⭐️ New: Shaderball Version 5

We significantly redesigned and cleaned up our shaderball, now in version 5:

(Render by Juan Carlos Gutiérrez.)

The shaderball scene is available in the following formats:

- Native appleseed project

- Autodesk® 3ds Max® 2016+ project

- Autodesk® Maya® 2017+ project

- OBJ file

- Alembic file

In addition, the shaderball is now also available as a low-poly model (15,140 triangles) in all formats.

⭐️ New: Search Paths Editor

We added a search paths editor to appleseed.studio. To open it, right-click on the top-level item (Project) of the Project Explorer and choose Edit Search Paths...:

In the search paths editor window, paths are ordered by ascending priority (paths lower in the list override those higher up). Dark gray paths are those set via the APPLESEED_SEARCHPATH environment variable and cannot be edited, while light gray ones are explicit paths that can be edited:
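The priority rule above means a file is looked up from the bottom of the list upward, and the first directory that contains it wins. The Python sketch below models that lookup; it assumes that APPLESEED_SEARCHPATH entries are separated by the platform's path separator and rank below the project's explicit paths, and build_search_paths and resolve are hypothetical helpers, not appleseed functions.

```python
import os
from typing import List, Mapping, Optional, Sequence


def build_search_paths(explicit_paths: Sequence[str],
                       environ: Mapping[str, str] = os.environ) -> List[str]:
    """Combine environment and explicit search paths, lowest priority first.

    Assumptions: APPLESEED_SEARCHPATH entries are separated by os.pathsep and
    rank below the project's explicit paths.
    """
    env_value = environ.get("APPLESEED_SEARCHPATH", "")
    env_paths = [p for p in env_value.split(os.pathsep) if p]
    return env_paths + list(explicit_paths)


def resolve(filename: str, search_paths: Sequence[str]) -> Optional[str]:
    """Find `filename`, letting paths lower in the list override those above them."""
    for directory in reversed(search_paths):
        candidate = os.path.join(directory, filename)
        if os.path.isfile(candidate):
            return candidate
    return None
```

If two directories both contain the same texture, moving one of them down the list is enough to make its copy win.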

Other New Features and Improvements

appleseed

New Features and Improvements

- Experimental Intel® Embree-based intersection kernel for faster ray tracing.

- Added support for camera shift.

- Added appleseed Python 3 bindings.

- Added fresnel_weight parameter to as_glossy() OSL closure (default is 1).

- Added World Space Position AOV (position_aov).

- Improved Energy Compensation handling in Glossy and Metal BRDFs.

- appleseed's proprietary BinaryMesh geometry file format now supports single-precision geometry. All newly written BinaryMesh files will now use single-precision.

- Updated to the latest version of the BCD Denoiser which features several bug fixes.

- The color mode has been replaced by the albedo mode in the diagnostic_surface_shader surface shader.

- If the PYTHONHOME environment variable is defined, appleseed.studio will use the Python release (which must be of the Python 2.7 variety) it points to instead of the Python release bundled with appleseed. This was already the case on Windows; it is now also the case on Linux and macOS.

- Enabled alpha premultiplication for environment hits.

- Report environment map build time.

Bug Fixes

- Fixed view vector derivatives (dIdx and dIdy) in OSL.

- Fixed NaN warnings when using OSL transparency.

- Fixed Sun light intensity when using a custom Sun-scene distance.

- Fixed writing of non-opaque pixels in PNG files.

- Fixed minor alpha and color space issues in render stamp rendering code.

- Don't warn about reaching hard iteration limit when it is intended.

- Don't show AOV-related warning messages if the scene has no AOV.

appleseed.studio

New Features and Improvements

- Persist geometry of all windows across runs.

- Renders can now be paused and resumed.

- Rectangular selection of light paths is now supported.

- Changing the viewpoint in light paths visualization mode no longer affects the rendering camera. A toolbar button has been added to manually synchronize them.

- Allow panning and zooming light paths OpenGL widget.

- Allow capturing the contents of the light paths OpenGL widget to the clipboard with CTRL+C.

- Made geometry darker in light paths visualization mode.

- Allow manually creating and instantiating textures.

- Added third party libraries versions to About dialog.

- Add extension based on selected filter in file save dialogs.

- Adjusted font size in About dialog on macOS.

Bug Fixes

- Fixed some keyboard shortcuts in tooltips on macOS.

appleseed.cli

New Features and Improvements

- Light paths can now be saved to disk with the new --save-light-paths command line option.

- Replaced the --select-object-instances command line option by --show-object-instances and --hide-object-instances.

- Added a --libraries command line option to print third party libraries versions.

Python Bindings

New Features and Improvements

- Added the following classes to Python bindings:

  - BlenderProgressiveTileCallback
  - IPostProcessingStageFactory
  - IVolumeFactory
  - MurmurHash
  - PostProcessingStage
  - PostProcessingStageContainer
  - PostProcessingStageFactoryRegistrar
  - ProjectPoints
  - ShaderCompiler
  - ShaderGroupContainer
  - Vector2u
  - Vector3u
  - Vector4u
  - Volume
  - VolumeContainer
  - VolumeFactoryRegistrar

- Added the following methods to Python bindings:

  - AOV.get_channel_count()
  - AOV.get_channel_names()
  - AOV.get_image()
  - AOV.has_color_data()
  - Assembly.bssrdfs()
  - Assembly.clear()
  - Assembly.volumes()
  - AssemblyInstance.set_transform_sequence()
  - BaseGroup.clear()
  - Camera.set_transform_sequence()
  - EnvironmentEDF.set_transform_sequence()
  - Frame.get_input_metadata()
  - Frame.post_processing_stages()
  - MeshObject.reserve_vertex_tangents()
  - MeshObject.push_vertex_tangent()
  - MeshObject.get_vertex_tangent_count()
  - MeshObject.get_vertex_tangent()
  - ShaderQueryWrapper.open_bytecode()
  - Tile.get_storage()
  - TransformSequence.optimize()

Bug Fixes

- Don't clear search paths set via the APPLESEED_SEARCHPATH environment variable when setting search paths from Python.

Removed Features

- Removed the following methods from Python bindings (breaking changes):

  - Frame.aov_images()
  - Tile.blender_tile_data()
  - Tile.copy_data_to()

OSL Shaders

New Features and Improvements

- Added shaders:

  - as_closure2surface
  - as_manifold2D
  - as_sbs_pbrmaterial
  - as_texture2surface

- Added Blender metadata to all appleseed shaders.

- Tweaked and improved help strings.

Bug Fixes

- Fixed texture wrap modes in as_texture shader.

Removed Features

- Removed shaders (breaking changes):

  - as_maya_closure2Surface
  - as_maya_texture2Surface

- Removed all Gaffer-specific shaders.

Miscellaneous

- Added THIRDPARTIES.txt file with the full license text of all third party libraries used in appleseed.

- Added aspaths2json.py, a Python script that can convert Light Paths files (*.aspaths) to JSON.

Known Issues

Linux

With some Linux distributions (Fedora 29, Arch Linux), appleseed.studio may fail to start with the following error:

./bin/appleseed.studio: symbol lookup error: /usr/lib/libfontconfig.so.1: undefined symbol: FT_Done_MM_Var

The reason appears to be that the system's libfontconfig requires newer libfreetype and libz libraries than those that ship with appleseed.

One solution is to force the use of the system's libraries:

env LD_PRELOAD="/usr/lib64/libfreetype.so /usr/lib64/libz.so" ./appleseed.studio

Another solution is simply to delete libfreetype.so.6 and libz.so.1 from appleseed's lib/ directory.

1.9.0-beta

These are the release notes for appleseed 1.9.0-beta.

These notes are part of a larger release; check out the main announcement for details.

Contributors

This release is the result of four intense months of work by the incredibly talented and dedicated appleseed development team.

Many thanks to our code contributors for this release:

- Muhamed Ali

- Aytek Aman

- Luis Barrancos

- François Beaune

- Artem Bishev

- Junchen Deng

- Jonathan Dent

- Sayali Deshpande

- Dorian Fevrier

- Eyal Landau

- Thomas Manceau

- Kevin Masson

- Fedor Matantsev

- Jino Park

- Sergo Pogosyan

- Girish Ramesh

- Jamorn Sriwasansak

- Phillip Thomas

- Esteban Tovagliari

- Neil You

And to our internal testers, feature specialists and artists, in particular:

- Richard Allen

- François Gilliot

- Juan Carlos Gutiérrez

- Luke Kliber

- Giuseppe Lucido

Interested in joining the appleseed development team, or want to get in touch with the developers? Join us on Discord. Simply interested in following appleseed's development and staying informed about upcoming appleseed releases? Follow us on Twitter.

Changes

Random-Walk Subsurface Scattering

Artem Bishev was one of our Google Summer of Code participants last year. He took up the rather intimidating project of implementing volumetric rendering in appleseed, and did an admirable job. Make sure to check out his final report for a detailed account of the features he implemented during the summer and the results he obtained.

Artem did not stop contributing once the summer was over. Instead, he kept pushing forward and implemented the much anticipated "Random Walk Subsurface Scattering", a more accurate method of computing subsurface scattering that actually simulates the travel of light under the surface of objects.
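To give an idea of what simulating the travel of light under the surface means, here is a deliberately simplified Python sketch of a random walk in a homogeneous, semi-infinite medium: light enters at normal incidence, travels exponentially distributed distances between interactions, is absorbed with probability 1 - albedo at each interaction and otherwise scatters isotropically, until it either leaves through the surface or is absorbed. Refraction and Fresnel effects at the boundary, colored coefficients and real geometry are all ignored, so it is far simpler than a production implementation; the function and parameter names are hypothetical.

```python
import math
import random


def random_walk_reflectance(albedo: float, sigma_t: float = 1.0, walks: int = 10000,
                            max_steps: int = 1000, seed: int = 0) -> float:
    """Fraction of light entering a semi-infinite medium (z < 0) that exits again.

    Only the depth z matters for escaping a flat surface, so each walk tracks z
    and the direction cosine along z.
    """
    rng = random.Random(seed)
    exited = 0
    for _ in range(walks):
        z = 0.0
        cos_z = -1.0  # enter at normal incidence, heading into the medium
        for _ in range(max_steps):
            # Free-flight distance, exponentially distributed with mean 1 / sigma_t.
            distance = -math.log(1.0 - rng.random()) / sigma_t
            z += cos_z * distance
            if z > 0.0:
                exited += 1  # crossed the surface: the light leaves the object
                break
            if rng.random() >= albedo:
                break  # absorbed at this interaction
            # Isotropic scattering: the new direction cosine is uniform in [-1, 1].
            cos_z = 2.0 * rng.random() - 1.0
    return exited / walks
```

In this idealized setting sigma_t only rescales how deep the walks go; the fraction of light that comes back out depends on the albedo alone.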

The results are nothing short of amazing, especially since Random Walk SSS appears to be as fast as, or sometimes faster than, diffusion-based SSS (the traditional, less accurate method). Here are a couple of renders showing Random Walk SSS in action:

(Stanford Dragon, render by Artem Bishev.)

(Statue of Lu-Yu by Artec 3D, render by Giuseppe Lucido.)

Denoiser

Esteban integrated the BCD denoiser into appleseed. BCD stands for Bayesian Collaborative Denoiser: it's a denoising algorithm designed to remove the last bits of noise from high-quality images rendered with many samples per pixel (unlike other denoising techniques that essentially reconstruct an image from a very small number of samples per pixel).

Here is the denoiser in action:

Light Paths Recording and Visualization

Light paths can now be efficiently recorded during rendering, without significantly impacting render times. This is part of a larger project where appleseed is being used in an industrial context to analyze how light scatters on or inside machines and instruments.

When light path recording is enabled in the rendering settings, it becomes possible (once rendering has stopped) to explore and visualize all light paths that contributed to any given pixel of the image. Paths can be recorded for the whole image, or only for a given region when a render window is defined. They can then be visualized interactively via an OpenGL render of the scene, or exported to disk in an open and efficient binary file format.
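The following sketch illustrates the general shape of such a recorder. Paths are grouped by pixel so they can be queried once rendering stops, and they are serialized to a compact little-endian binary layout. The class, the method names and the byte layout are hypothetical; this is not appleseed's actual file format.

```python
import struct
from collections import defaultdict

PATH_HEADER = struct.Struct("<3I3f")  # pixel x, pixel y, vertex count, RGB radiance
VERTEX = struct.Struct("<3f")         # vertex position


class LightPathRecorder:
    """Hypothetical recorder grouping light paths by the pixel they contributed to."""

    def __init__(self):
        self._paths = defaultdict(list)

    def record(self, pixel, vertices, radiance):
        self._paths[pixel].append((list(vertices), tuple(radiance)))

    def paths_for_pixel(self, pixel):
        # .get() avoids inserting empty entries for pixels without paths.
        return self._paths.get(pixel, [])

    def to_bytes(self):
        """Serialize as: u32 path count, then per path a header and its vertices."""
        count = sum(len(paths) for paths in self._paths.values())
        chunks = [struct.pack("<I", count)]
        for (px, py), paths in sorted(self._paths.items()):
            for vertices, radiance in paths:
                chunks.append(PATH_HEADER.pack(px, py, len(vertices), *radiance))
                chunks.extend(VERTEX.pack(*v) for v in vertices)
        return b"".join(chunks)


def parse_paths(data):
    """Inverse of LightPathRecorder.to_bytes(): returns (pixel, vertices, radiance) tuples."""
    (count,) = struct.unpack_from("<I", data, 0)
    offset, paths = 4, []
    for _ in range(count):
        px, py, n, r, g, b = PATH_HEADER.unpack_from(data, offset)
        offset += PATH_HEADER.size
        vertices = [VERTEX.unpack_from(data, offset + i * VERTEX.size) for i in range(n)]
        offset += n * VERTEX.size
        paths.append(((px, py), vertices, (r, g, b)))
    return paths
```

Storing positions and radiance as 32-bit floats keeps files small. Values that are not exactly representable in single precision are rounded on export.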

Here is a screenshot of appleseed.studio showing one of the many light paths that contributed to a pixel that was chosen in the render of a 3D model of the Hubble Space Telescope made freely available by NASA:

Here is a short video showing the process of enabling light path capture and visualizing light paths in appleseed.studio:

Shutter Opening/Closing Curves

Jino Park and Fedor Matantsev implemented support for non-instantaneous opening and closing of camera shutters: it is now possible to specify how long a camera's shutter takes to open or close, as well as the rate at which it opens and closes.

Here is an illustration showing three cases:

- "Instantaneous" means that the camera's shutter opens and closes instantaneously.

- "Linear" means that the camera's shutter opens and closes linearly (in other words, the camera's shutter opens and closes at a constant rate).

- "Bézier" means that the camera's shutter opening/closing position is defined by an arbitrary Bézier curve.
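The three cases can be expressed as a function returning how open the shutter is at a given time. This sketch rests on several assumptions: shutter time is normalized to [0, 1], four hypothetical parameters delimit the opening and closing ramps, and the Bézier case uses a one-dimensional cubic easing curve whose parameter serves directly as the ramp position. appleseed's actual parameterization may differ.

```python
def cubic_bezier_ease(u, p1, p2):
    """1D cubic Bezier from 0 to 1 with inner control values p1 and p2."""
    v = 1.0 - u
    return 3.0 * v * v * u * p1 + 3.0 * v * u * u * p2 + u * u * u


def shutter_openness(t, open_start, open_end, close_start, close_end,
                     mode="linear", p1=0.0, p2=1.0):
    """Fraction of the aperture that is open at normalized shutter time t.

    The shutter ramps open over [open_start, open_end], stays fully open,
    then ramps closed over [close_start, close_end].
    """
    if t < open_start or t > close_end:
        return 0.0
    if mode == "instantaneous":
        return 1.0
    if t < open_end:
        u = (t - open_start) / (open_end - open_start)    # opening ramp
    elif t > close_start:
        u = (close_end - t) / (close_end - close_start)  # closing ramp, mirrored
    else:
        return 1.0
    if mode == "linear":
        return u
    if mode == "bezier":
        return cubic_bezier_ease(u, p1, p2)
    raise ValueError(f"unknown shutter mode: {mode}")
```

With `p1 = 1/3` and `p2 = 2/3`, the Bézier curve reduces exactly to the linear ramp, which makes a convenient sanity check.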

Render Stamp

It is now possible to have appleseed add a "render stamp" to renders. The render stamp is fully configurable and can contain a mixture of arbitrary text and predefined variables such as appleseed version, render time, etc. Here is what the default render stamp looks like:
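Conceptually, a render stamp is a template whose placeholders are replaced by values known at the end of the render. The sketch below assumes a `{name}` placeholder syntax. The variable names used with it are hypothetical and may not match appleseed's.

```python
import re

PLACEHOLDER = re.compile(r"\{([a-z0-9_-]+)\}")


def expand_stamp(template, variables):
    """Replace {name} placeholders with values; unknown names are left as-is."""
    return PLACEHOLDER.sub(lambda m: str(variables.get(m.group(1), m.group(0))), template)
```

For example, `expand_stamp("appleseed {version} | Time: {render-time}", {"version": "X.Y.Z", "render-time": "12.3 s"})` yields `"appleseed X.Y.Z | Time: 12.3 s"`. Leaving unknown placeholders untouched makes typos visible in the stamp instead of silently dropping them.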

Pixel Time AOV

Esteban also added a new Pixel Time AOV (or "render layer") that holds per-pixel render times (technically, the median time it took to render one sample inside each pixel). This new AOV can help figure out which areas of the image are most expensive to render, and thus help optimize renders.
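The measurement described above works like this: time every sample of a pixel and keep the median. The median is less sensitive than the mean to a handful of samples slowed down by external events. The `render_sample` callback is a hypothetical stand-in for the renderer's per-sample work.

```python
import statistics
import time


def render_pixel_with_timing(render_sample, x, y, spp):
    """Render spp samples of pixel (x, y).

    Returns (mean sample value, median seconds per sample); the second value
    is what a pixel time AOV would store for this pixel.
    """
    values, durations = [], []
    for s in range(spp):
        start = time.perf_counter()
        values.append(render_sample(x, y, s))
        durations.append(time.perf_counter() - start)
    return sum(values) / spp, statistics.median(durations)
```

If the operating system interrupts more than half of a pixel's samples, even the median is affected. This would be consistent with the isolated bright pixels mentioned below.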

Here is an example of the pixel time AOV (with exposure adjusted) that shows that grooves and other concave areas of the shaderball are more expensive to render than convex ones (the scattered white dots are likely due to the operating system interrupting the rendering process once in a while):

Other New Features and Improvements

appleseed

- Considerably sped up OSL texturing.

- Considerably reduced noise when using diffusion-based BSSRDFs.

- Improved the Directional Dipole BSSRDF.

- Fixed occasional "infinite samples" warnings with diffusion-based BSSRDFs.

- Added OSL vector transforms.

- Fixed OSL NDC transforms.

- Fixed the behavior of OSL's `printf()` function.

- Fixed crash with Light Tree and light-emitting OSL shaders.

- Added support for writing grayscale + alpha PNG files.

- Improved logging of rendering settings.

- Allow saving extra (internal) AOVs to disk.

- Added support for energy compensation to a number of BSDFs.

- Improved sampling of OSL's `as_glossy()` closure.

- Added exposure multiplier inputs to lat-long environment EDF, mirrorball environment EDF and spot light.

- Fixed and improved detection of alpha mapping.

- Fixed self-intersections when rendering curves.

- AOVs are now always saved as OpenEXR files, regardless of the file format chosen for the main image.

- Added an Invalid Samples AOV.

- Use the Blackman-Harris filter instead of the Gaussian filter as a fallback when an invalid pixel filter is specified.

- Fixed default OIIO texture wrap modes in Disney material.
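For reference, here is how the 4-term Blackman-Harris window can be evaluated as a pixel filter. Mapping the window onto [-radius, radius] and using a separable 2D form are common choices, but they are assumptions here and not necessarily appleseed's exact filter.

```python
import math

# Coefficients of the 4-term Blackman-Harris window.
A0, A1, A2, A3 = 0.35875, 0.48829, 0.14128, 0.01168


def blackman_harris(x, radius):
    """Filter weight at offset x from the pixel center, for a filter of the given radius."""
    if abs(x) > radius:
        return 0.0
    # Map [-radius, radius] onto the window's [0, 1] domain; the peak lands at x = 0.
    u = (x + radius) / (2.0 * radius)
    return (A0
            - A1 * math.cos(2.0 * math.pi * u)
            + A2 * math.cos(4.0 * math.pi * u)
            - A3 * math.cos(6.0 * math.pi * u))


def blackman_harris_2d(dx, dy, radius):
    """Separable 2D weight for a sample at offset (dx, dy) from the pixel center."""
    return blackman_harris(dx, radius) * blackman_harris(dy, radius)
```

The weight is exactly 1 at the center (the four coefficients sum to 1) and falls to 0.00006 at the edge. This smooth falloff is one reason the window is a reasonable fallback.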

appleseed.studio

- Added Settings dialog.

- Fixed crash when plugins directory does not exist.

- Fixed crash when mouse is dragged out of canvas while pixel inspection is toggled on.

- Preserve environment EDF transforms across edits.

- Update the color preview when typing values in the color field of a color entity.

- Small user interface improvements and tweaks.

- Tweaked default bounce limits.

- Fixed missing project file extensions in File → Open Recent menu.

- Added a Clear Missing Files entry in File → Open Recent menu.

- Fixed navigation during interactive rendering after having edited the frame entity.

- Added "Bitmap Files" filter to image file dialogs.

appleseed.cli

- AOVs are now sent to stdout when using `--to-stdout`.

- Fixed AOVs disappearing when command line arguments alter the frame.

Others

- `projecttool` can now remove unused entities and print dependencies between entities.

OSL Shaders

- New shaders: `as_blackbody`, `as_blend_color`, `as_bump`, `as_composite_color`, `as_fresnel`, `as_subsurface`, `as_switch_surface`, `as_switch_texture`, `as_texture`, `as_texture_info`, `as_texture3d`, `as_triplanar`.

- Renamed shader `as_layer_shader` to `as_blend_shader`.

- Fixed anisotropy rotation in `as_disney_material`.

- Added `Pref`, `Nref` and UV coordinates to `as_globals`.

- Increased max ray depth in OSL shaders from 32 to 100.

- Enabled coating over transmission in `as_standard_surface`.

- Allow choosing the SSS profile in `as_standard_surface`.

- Don't limit incandescence amount to 1 in `as_standard_surface`.

- The source code of the OSL shaders is now included with appleseed.

1.8.1-beta

6 years ago

These are the release notes for appleseed 1.8.1-beta.

appleseed 1.8.1-beta is a minor release fixing issues discovered in appleseed 1.8.0-beta.

Changes

- Added energy compensation to glossy and metal BRDFs.

- Fixed Max Ray Intensity which was partially broken in the previous release.

- Fixed occasional warnings during rendering (NaN, negative or infinite pixel samples).

- Improved handling of errors during loading of geometry assets.

- Exposed Plastic BRDF to OSL via the `as_plastic()` closure.

- Updated and improved `dumpmetadata` command line tool.

- Light importance multiplier is now a numeric input.

- Make appleseed.studio's Windows icon multi-resolution.

- Emit log message once packing project is complete.

- Cleaned up appleseed OSL extensions (`as_osl_extensions.h`) file.

1.8.0-beta

6 years ago

These are the release notes for appleseed 1.8.0-beta.

These notes are part of a larger release, check out the main announcement for details.

Contributors

This release is the fruit of the formidable work of the appleseed development team over the last six months.

Many thanks to our code contributors:

- Aytek Aman

- Luis Barrancos

- François Beaune

- Artem Bishev

- Petra Gospodnetic

- Roger Leigh

- Gleb Mishchenko

- Jino Park

- Sergo Pogosyan

- Esteban Tovagliari

And to our internal testers, feature specialists and artists, in particular:

- Richard Allen

- Dorian Fevrier

- François Gilliot

- JC Gutiérrez

- Luke Kliber

- Nathan Vegdahl

Interested in joining the appleseed development team, or want to get in touch with the developers? Join us on Slack. Simply interested in following appleseed's development and staying informed about upcoming appleseed releases? Follow us on Twitter.

Changes

Support for Procedural Objects

We introduced support for procedurally-defined objects, that is, objects whose surface is defined by a function implemented in C++. This opens up a vast array of possibilities, from rendering fractal surfaces such as the Mandelbulb shown below, to rendering raw CAD models based on Constructive Solid Geometry without tessellating them into triangle meshes. One could even use this mechanism to prototype new acceleration structures with appleseed.

appleseed ships with three sample procedural objects in samples/cpp/: an infinite plane object, a sphere object and the Mandelbulb fractal. This last sample is particularly interesting: it's actually a generic Signed Distance Field raymarcher and a great toy to tinker with if you like procedural graphics!
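The core of such a raymarcher is sphere tracing. A signed distance function gives, at any point, a distance that is safe to step along the ray without crossing a surface. Below is a minimal sketch with a sphere SDF and a union operator. Any other distance estimator, for instance one for the Mandelbulb, could be plugged in as `sdf`. Names and tolerances are illustrative and unrelated to appleseed's C++ samples.

```python
import math


def sphere_sdf(p, center=(0.0, 0.0, 0.0), radius=1.0):
    """Signed distance from point p to a sphere (negative inside)."""
    return math.dist(p, center) - radius


def union(*sdfs):
    """The union of shapes is the minimum of their distance functions."""
    return lambda p: min(f(p) for f in sdfs)


def sphere_trace(sdf, origin, direction, max_steps=128, epsilon=1e-4, max_distance=100.0):
    """March along origin + t * direction (unit length); return hit distance t or None."""
    t = 0.0
    for _ in range(max_steps):
        p = tuple(o + t * k for o, k in zip(origin, direction))
        distance = sdf(p)
        if distance < epsilon:
            return t
        t += distance  # no surface is closer than `distance`, so this step is safe
        if t > max_distance:
            break
    return None
```

For example, `sphere_trace(union(sphere_sdf, lambda p: p[1] + 2.0), (0.0, 0.0, -5.0), (0.0, -1.0, 0.0))` hits the plane y = -2 at distance 2.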

Some good resources about Signed Distance Fields:

- Ray Marching and Signed Distance Functions by Jamie Wong

- Modeling with Distance Functions by Íñigo Quílez

Support for Procedural Assemblies

We also introduced long-awaited support for procedural assemblies. In appleseed's terminology, an assembly is a "package" that represents a part of the scene. A procedural assembly is one that gets populated at render time instead of being described in the scene file. Somewhat schematically, a procedural assembly is defined by a C++ plugin which is invoked by the renderer when it needs to know the content of the assembly.

Procedural assemblies make it possible to populate a scene procedurally, for instance to generate thousands of instances of a single object based on rules, without having to store all those instances on disk.
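Here is a sketch of rule-based population that recursively generates the spheres of a sphereflake-like assembly. Every sphere spawns nine children one third its size, oriented away from their parent. The scale factor and child layout (six around the equator, three tilted 60 degrees toward the pole) are simplifications. Nothing here uses appleseed's plugin API.

```python
import math


def _normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def child_directions(axis):
    """Nine unit vectors relative to `axis` (unit length): six perpendicular to it,
    three tilted 60 degrees toward it. None of them points back along -axis."""
    helper = (1.0, 0.0, 0.0) if abs(axis[0]) < 0.9 else (0.0, 1.0, 0.0)
    u = _normalize(_cross(axis, helper))
    v = _cross(axis, u)
    directions = []
    for i in range(9):
        if i < 6:
            phi, elevation = i * math.pi / 3.0, 0.0
        else:
            phi, elevation = (i - 6) * 2.0 * math.pi / 3.0 + math.pi / 6.0, math.pi / 3.0
        c, s = math.cos(elevation), math.sin(elevation)
        directions.append(tuple(c * math.cos(phi) * u[k] + c * math.sin(phi) * v[k] + s * axis[k]
                                for k in range(3)))
    return directions


def sphereflake(center, radius, depth, axis=(0.0, 0.0, 1.0)):
    """Yield (center, radius) for every sphere instance, depth-first."""
    yield center, radius
    if depth == 0:
        return
    child_radius = radius / 3.0
    for d in child_directions(axis):
        # Place each child so that it just touches its parent.
        offset = radius + child_radius
        child_center = tuple(c + offset * k for c, k in zip(center, d))
        yield from sphereflake(child_center, child_radius, depth - 1, d)
```

At depth 4, these few rules already produce 1 + 9 + 81 + 729 + 6561 = 7381 instances. Nothing needs to be stored on disk.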

Below is an example of a procedural assembly: it is a modern recreation of the sphereflake object designed by Eric Haines et al. and described in the 1987 article A Proposal for Standard Graphics Environments, rendered using subsurface scattering (using the Normalized Diffusion BSSRDF), a Disney BRDF and image-based lighting:

For reference (and for fun; who doesn't love retro CG!) here is one early rendering of the Sphereflake by Eric Haines:

(Source: https://www.flickr.com/photos/101332032@N06/10865071225)

A particular type of procedural assembly supported by appleseed is an archive assembly. An archive assembly is defined by a bounding box and a reference to another appleseed scene file (extension .appleseed or .appleseedz). The referenced scene file is loaded by the renderer at the appropriate time. This is a common and powerful mechanism to assemble large and complex scenes out of smaller parts.
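One possible policy for "the appropriate time" is lazy loading: the referenced file is loaded the first time a ray actually hits the bounding box. The sketch below illustrates that idea with a standard ray/box slab test. The class, the loader callback and the file path are hypothetical. appleseed may load archives at a different moment, for instance during scene setup.

```python
import math


class ArchiveAssembly:
    """Hypothetical stand-in: a bounding box plus a scene file loaded on demand."""

    def __init__(self, bbox_min, bbox_max, path, loader):
        self.bbox_min, self.bbox_max = bbox_min, bbox_max
        self.path = path
        self.loader = loader      # callable(path) -> loaded contents
        self.contents = None      # nothing is loaded until needed

    def ray_hits_bbox(self, origin, direction):
        """Slab test between a ray (t >= 0) and the axis-aligned bounding box."""
        t_near, t_far = 0.0, math.inf
        for o, d, lo, hi in zip(origin, direction, self.bbox_min, self.bbox_max):
            if d == 0.0:
                if o < lo or o > hi:
                    return False  # parallel to this slab and outside it
                continue
            t0, t1 = (lo - o) / d, (hi - o) / d
            if t0 > t1:
                t0, t1 = t1, t0
            t_near, t_far = max(t_near, t0), min(t_far, t1)
            if t_near > t_far:
                return False
        return True

    def intersect(self, origin, direction):
        if not self.ray_hits_bbox(origin, direction):
            return None           # rays missing the box never trigger a load
        if self.contents is None:
            self.contents = self.loader(self.path)
        return self.contents
```

The bounding box is what makes this cheap. The renderer can skip the archive entirely for rays that miss it, without knowing anything about its contents.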

One important use of procedural assemblies in production is reading parts of scenes from Alembic archives, Pixar's USD scenes and other custom file formats. We recently started working on adding support for loading geometry directly from Alembic archives.

AOV Subsystem Rewrite

This release saw the previous AOV mechanism completely ripped out and reimplemented from scratch. While flexible, the previous AOV mechanism made it hard, or in some cases impossible, to composite AOVs back together. Moreover, it lacked fundamental features such as splitting direct lighting from indirect lighting contributions or splitting diffuse from glossy scattering modes.

The new AOV subsystem implements what you expect from a modern renderer. appleseed currently supports the following AOVs:

- Direct Diffuse, Indirect Diffuse, Combined Diffuse

- Direct Glossy, Indirect Glossy, Combined Glossy

- Emission

- Shading Normals

- UVs

- Depth
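Splitting lighting this way is what makes AOVs composable. This sketch relies on two assumptions. First, direct and indirect parts partition each scattering mode. Second, diffuse, glossy and emission together make up the whole beauty pass. Materials with other contributions would need more AOVs. Under these assumptions, the beauty image can be rebuilt by summation. The AOV keys are hypothetical, and images are stored as nested lists of RGB tuples.

```python
def add_images(*images):
    """Pixel-wise sum of equally sized images (lists of rows of RGB tuples)."""
    height, width = len(images[0]), len(images[0][0])
    return [[tuple(sum(img[y][x][c] for img in images) for c in range(3))
             for x in range(width)]
            for y in range(height)]


def rebuild_beauty(aovs):
    """Recombine lighting AOVs, assuming they partition the beauty pass."""
    diffuse = add_images(aovs["direct_diffuse"], aovs["indirect_diffuse"])
    glossy = add_images(aovs["direct_glossy"], aovs["indirect_glossy"])
    return add_images(diffuse, glossy, aovs["emission"])
```

Because the sum is linear, a compositor can grade any single AOV, for example brightening indirect diffuse, and still rebuild a consistent beauty image.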

Here is an example of AOVs rendered from our shaderball scene:

And the beauty render:

AOVs must currently be declared manually in the scene file:

```xml
<output>
    <frame name="beauty">
        <parameter name="camera" value="/group/camera" />
        <parameter name="resolution" value="640 480" />
        <parameter name="crop_window" value="0 0 639 479" />
        <aovs>
            <aov model="diffuse_aov" />
            <aov model="glossy_aov" />
        </aovs>
    </frame>
</output>
```

The next release of appleseed will allow adding/removing AOVs directly from appleseed.studio. It will also add AOVs for our render denoiser, and if time allows, it will introduce native Cryptomatte support. We are also considering adding a motion vectors AOV in a future release.

Color Pipeline Overhaul

The color pipeline is another area that received a ton of attention in this release.

First of all, remember that appleseed is fully capable of spectral rendering, currently using 31 equidistant wavelengths in the 400 nm to 700 nm visible light range.
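The spacing follows directly: 31 equidistant samples between 400 nm and 700 nm are (700 - 400) / 30 = 10 nm apart. The sketch below builds such a grid and linearly interpolates a sampled spectrum between grid points. Linear interpolation is an illustrative choice, not necessarily how appleseed reconstructs spectra.

```python
import bisect


def wavelength_grid(low_nm, high_nm, count):
    """`count` equidistant wavelengths from low_nm to high_nm inclusive."""
    step = (high_nm - low_nm) / (count - 1)
    return [low_nm + i * step for i in range(count)]


def sample_spectrum(wavelengths, values, wl):
    """Linearly interpolate a sampled spectrum at wavelength wl (clamped at the ends)."""
    if wl <= wavelengths[0]:
        return values[0]
    if wl >= wavelengths[-1]:
        return values[-1]
    i = bisect.bisect_right(wavelengths, wl) - 1
    t = (wl - wavelengths[i]) / (wavelengths[i + 1] - wavelengths[i])
    return (1.0 - t) * values[i] + t * values[i + 1]
```

With the same function, keeping a 10 nm spacing over a 380-780 nm range would require `wavelength_grid(380.0, 780.0, 41)`.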

In the name of speed and accuracy, we no longer switch on-the-fly between RGB and spectral representations during rendering. We had a neat mechanism that supported this feature for many years but it had three main problems: it prevented us from being completely rigorous about color management, it incurred tremendous complexity in the very core of the renderer and it had nasty edge cases causing severe performance issues. Instead, we now let the user decide which color pipeline to use: RGB or spectral:

The RGB pipeline is the default and for most purposes there is no point in changing it. However, appleseed is one of the rare renderers with spectral capabilities and we will continue pushing and extending this mechanism over the releases to come. In particular, the next release will extend the wavelength range to 380-780 nm and should feature a much improved RGB-to-spectral conversion algorithm.

We also removed a lot of settings from Frame entities that didn't make much sense anymore. appleseed's internal framebuffer is now always linear, unclamped, premultiplied and uses 32-bit floating point precision.
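A premultiplied framebuffer reduces compositing to a single multiply-add per channel. The sketch below shows the Porter-Duff "over" operator on premultiplied, unclamped RGBA tuples. The helper names are illustrative.

```python
def premultiply(r, g, b, a):
    """Straight (unassociated) RGBA to premultiplied RGBA; values are not clamped."""
    return (r * a, g * a, b * a, a)


def unpremultiply(r, g, b, a):
    """Premultiplied RGBA back to straight RGBA (fully transparent pixels stay black)."""
    if a == 0.0:
        return (0.0, 0.0, 0.0, 0.0)
    return (r / a, g / a, b / a, a)


def over(front, back):
    """Porter-Duff 'over' on premultiplied RGBA tuples: front + (1 - front alpha) * back."""
    keep = 1.0 - front[3]
    return tuple(f + keep * b for f, b in zip(front, back))
```

Because nothing is clamped, a bright highlight such as `premultiply(4.0, 2.0, 0.0, 0.5)` survives a round trip through `unpremultiply` unchanged.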

Finally, appleseed.studio is now using OpenColorIO for color management. There is now a dropdown menu in appleseed.studio to choose a display transform (an Output Device Transform in ACES terminology) at any point during or after rendering:

appleseed.studio will populate this dropdown menu with transforms listed in OpenColorIO's configuration on the user's machine.

Support for Plugins (work-in-progress)

In addition to the procedural objects and procedural assemblies described above, this release also makes it possible to extend all "scene modeling components" of appleseed via external plugins written in C++. This includes cameras, BSDFs, BSSRDFs, EDFs, lights, materials, textures, environments... There are technicalities that make this work incomplete in this release; we will gradually fix them as the need arises.

Substance Painter Export Presets

We now include Substance Painter export presets in the share/ folder inside appleseed's package.

Maps exported using these presets can be used in appleseed-maya and Gaffer with appleseed's new standard material:

Python Console in appleseed.studio (work-in-progress)

Our Google Summer of Code 2017 student Gleb Mishchenko implemented a Python console inside appleseed.studio, our scene tweaking, rendering, inspecting and debugging application:

Here is a closeup of the Python console loaded with a simple script that prints the name of all objects in the scene:

appleseed.studio also supports Python plugins: any directory with a __init__.py file inside studio/plugins/ will be imported and registered at startup. There are example plugins in samples/python/studio/plugins/.
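A loader following the rule described above, which imports every subdirectory containing an `__init__.py` file, can be written with Python's standard `importlib` machinery. The `register()` hook is a hypothetical convention for this example; appleseed.studio's actual plugin entry point may differ.

```python
import importlib.util
import os
import sys


def load_plugins(plugins_dir):
    """Import every subdirectory of plugins_dir that contains an __init__.py file.

    Returns {package name: module}. A missing directory simply yields no plugins.
    """
    loaded = {}
    if not os.path.isdir(plugins_dir):
        return loaded
    for name in sorted(os.listdir(plugins_dir)):
        package_dir = os.path.join(plugins_dir, name)
        init_file = os.path.join(package_dir, "__init__.py")
        if not os.path.isfile(init_file):
            continue  # not a Python package: ignore it
        spec = importlib.util.spec_from_file_location(
            name, init_file, submodule_search_locations=[package_dir])
        module = importlib.util.module_from_spec(spec)
        sys.modules[name] = module  # lets the package import its own submodules
        spec.loader.exec_module(module)
        # Hypothetical convention for this sketch: call register() if present.
        register = getattr(module, "register", None)
        if callable(register):
            register()
        loaded[name] = module
    return loaded
```

Sorting directory entries makes the load order deterministic across platforms. This matters when one plugin builds on another.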

Our motivation behind the Python console is threefold: simplify and speed up the development of appleseed.studio; let users extend appleseed.studio using Python plugins; and let users automate tedious tasks using one-off Python scripts directly inside appleseed.studio, with immediate feedback on their scene.

This work is still very much in progress: our Python API is quite low-level and not as comfortable to use as it could be, and we have yet to bundle PySide to let scripts extend the user interface and add menus, panels and dialogs (however we did play with this and it already works on Linux).

Details of Gleb's GSoC project can be found in his report.

Support for Participating Media (work-in-progress)

Artem Bishev, another of our GSoC 2017 students, took upon himself the rather intricate task of implementing physically-based volumetric rendering in appleseed.

In this release, appleseed supports fully raytraced single-scattering and multiple-scattering inside homogeneous participating media. Two phase functions are currently supported: a fully isotropic phase function and the Henyey-Greenstein phase function.
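For reference, the Henyey-Greenstein phase function with asymmetry parameter g is p(cos θ) = (1 - g²) / (4π (1 + g² - 2g cos θ)^(3/2)). Positive g favors forward scattering and g = 0 is isotropic. The function can be importance-sampled by inverting its CDF in closed form. The function names in the sketch below are illustrative.

```python
import math
import random


def hg_phase(cos_theta, g):
    """Henyey-Greenstein phase function value (per steradian); cos_theta is measured
    between the incident propagation direction and the scattered direction."""
    denom = 1.0 + g * g - 2.0 * g * cos_theta
    return (1.0 - g * g) / (4.0 * math.pi * denom * math.sqrt(denom))


def hg_sample_cos_theta(g, xi):
    """Invert the HG CDF: map a uniform xi in [0, 1) to cos(theta)."""
    if abs(g) < 1e-3:
        return 2.0 * xi - 1.0  # isotropic limit
    s = (1.0 - g * g) / (1.0 - g + 2.0 * g * xi)
    cos_theta = (1.0 + g * g - s * s) / (2.0 * g)
    return max(-1.0, min(1.0, cos_theta))  # guard against rounding


def mean_cosine_estimate(g, n, seed=0):
    """Average sampled cos(theta); for HG this converges to g."""
    rng = random.Random(seed)
    return sum(hg_sample_cos_theta(g, rng.random()) for _ in range(n)) / n
```

The mean cosine of the HG distribution equals g. This is why g is often called the asymmetry parameter and why `mean_cosine_estimate` is a handy check of the sampling code.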

A volumetric bunny in the Cornell Box (spectral render):

This work is very much in progress. Main upcoming features are heterogeneous media and OpenVDB support.

Details of Artem's project can be found in his report.

New Light Sampling Algorithm (work-in-progress)

Petra Gospodnetić, our third GSoC student this year, spent her summer implementing a new light sampling strategy in appleseed based on Nathan Vegdahl's Light Tree algorithm. The Light Tree is a simple yet clever unbiased sampling algorithm that picks better candidate points on area/mesh light sources.
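The principle can be illustrated with a binary tree over the lights. Each node stores the total power and the power-weighted centroid of the lights below it. Sampling walks from the root to a leaf, choosing each child with probability proportional to a cheap importance estimate. The estimator stays unbiased because the exact probability of every choice is tracked and the contribution is divided by it. This sketch uses power over squared distance as importance, which is a simplification; the actual algorithm's heuristic may be more elaborate. All names here are hypothetical.

```python
class LightTreeNode:
    """Binary tree node over point lights given as ((x, y, z), power), power > 0."""

    def __init__(self, lights, indices):
        self.power = sum(lights[i][1] for i in indices)
        # Power-weighted centroid of the lights below this node.
        self.center = tuple(
            sum(lights[i][0][k] * lights[i][1] for i in indices) / self.power for k in range(3))
        if len(indices) == 1:
            self.light, self.children = indices[0], None
            return
        # Split at the median along the axis of largest extent.
        extents = [max(lights[i][0][k] for i in indices) - min(lights[i][0][k] for i in indices)
                   for k in range(3)]
        axis = extents.index(max(extents))
        ordered = sorted(indices, key=lambda i: lights[i][0][axis])
        mid = len(ordered) // 2
        self.light = None
        self.children = (LightTreeNode(lights, ordered[:mid]), LightTreeNode(lights, ordered[mid:]))


def importance(node, p):
    """Cheap importance estimate: total power over squared distance to the centroid."""
    d2 = sum((a - b) ** 2 for a, b in zip(node.center, p))
    return node.power / max(d2, 1e-4)


def sample_light(root, p, xi):
    """Pick a light for shading point p using one uniform number xi in [0, 1).

    Returns (light index, probability of having picked it)."""
    node, pdf = root, 1.0
    while node.children is not None:
        left, right = node.children
        w_left, w_right = importance(left, p), importance(right, p)
        p_left = w_left / (w_left + w_right)
        if xi < p_left:
            node, pdf, xi = left, pdf * p_left, xi / p_left  # rescale xi to [0, 1)
        else:
            node, pdf, xi = right, pdf * (1.0 - p_left), (xi - p_left) / (1.0 - p_left)
    return node.light, pdf


def leaf_probabilities(node, p, prob=1.0, out=None):
    """Probability of reaching every light; useful for checking that they sum to 1."""
    out = {} if out is None else out
    if node.children is None:
        out[node.light] = prob
        return out
    left, right = node.children
    w_left, w_right = importance(left, p), importance(right, p)
    leaf_probabilities(left, p, prob * w_left / (w_left + w_right), out)
    leaf_probabilities(right, p, prob * w_right / (w_left + w_right), out)
    return out
```

Every light keeps a nonzero probability, so dividing each light's contribution by the returned pdf gives an unbiased estimate. Nearby, bright lights are simply picked much more often.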

To illustrate the benefits of the Light Tree, here is a comparison between the existing light sampling algorithm and the new one on a scene making heavy use of mesh lights (both images were rendered with 16 samples/pixel):

And here is the converged render:

Full details of Petra's work can be found in her report.

Other New Features and Improvements

appleseed

- The physical sun light can now cast soft shadows, and the observer-Sun distance can be changed.

- Significantly improved our bump mapping implementation.

- Fixed a number of artifacts on silhouettes of coarsely tessellated meshes.

- Fixed artifacts due to high geometric complexity close to SSS materials.

- Look up the environment after glossy bounces even if IBL is disabled.

- Added instruction sets detection and release/host compatibility checks at startup.

- Switched from CIE 1964 10° color matching functions to CIE 1931 2° color matching functions.

- Removed all color settings from Frame entities: pixel format, color space, clamping, premultiplied alpha, gamma correction.

- Removed Shade Alpha Cutouts option.

- Removed color and alpha multipliers from Physical Surface Shader.

- Removed Velvet BRDF as it has been superseded by the Sheen BRDF.

- Removed deprecated Microfacet BRDF in favor of higher-level ones such as Glass, Glossy, Metal or Plastic.

- Save chromaticities in OpenEXR renders (currently: scene-referred linear sRGB / Rec. 709 primaries).

- Made medium priorities signed (-128 to 127).

- Fixed incorrect rendering when the number of direct lighting samples is fractional.

appleseed.studio

- Improved the Entity Editor, in particular formatting and validation of numeric inputs.

- Fixed bug where the Entity Editor would close after renaming the entity.

- Fixed bug in material assignment editor window.

- Automatically realign camera target if it is off the viewing direction.

- Filtering regular expressions are now case-insensitive.

- Added ability to close the project via File → Close.

- Fixed multiple filtering bugs in the Tests dialog.

- Added button in Tests dialog to check only visible items.

- Improved handling of rendering failures such as out-of-memory errors.

- Persist file filters in image/texture file dialogs.

appleseed.cli

- Added `--to-stdout` mode to send rendered tiles to the standard output (in binary).

- Removed `--continuous-saving` mode since it fundamentally could not work reliably.

- Message coloring and verbosity settings set on the command line now have precedence over those set in `settings/appleseed.cli.xml`.

1.7.1-beta

6 years ago

This minor release fixes appleseed.python (appleseed's Python bindings) on macOS.

1.7.0-beta

6 years ago

These are the release notes for appleseed 1.7.0-beta.

Contributors

The following people contributed code for this release, in alphabetical order:

- Aytek Aman

- Luis Barrancos

- François Beaune

- Artem Bishev

- Andreea Dincu

- Petra Gospodnetic

- Andrei Ivashchenko

- Kutay Macit

- Nabil Miri

- Gleb Mishchenko

- Aakash Praliya

- Animesh Tewari

- Esteban Tovagliari

Changes

appleseed

Subsurface Scattering Overhaul

We made deep improvements to our ray-traced subsurface scattering implementation. The new code gives more accurate and consistent results. We also took this opportunity to add support for SSS sets:

(On the left, each object is in its own SSS set; on the right, all objects are in the same SSS set.)

We also modified the Gaussian BSSRDF to expose the same parameters as other BSSRDF models. This makes finding the right BSSRDF model much easier.

Finally, we added Fresnel weight parameters to all BSSRDF models and we exposed the Gaussian BSSRDF to OSL.

New Path Tracing Controls

We added a few new controls to appleseed's unidirectional path tracer:

- Low Light Threshold allows the path tracer to skip low contribution samples (thus saving the cost of expensive shadow rays) without introducing bias. By default the threshold is set to 0 (not skipping any sample) but we might change it in the future.

- Separate diffuse, glossy and specular bounce limits, in addition to the existing global bounce limit.
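The mechanism that avoids bias is not detailed above. One standard way to obtain that property is Russian roulette. A sample whose unoccluded contribution falls below the threshold survives with probability contribution / threshold and is reweighted by the inverse of that probability. The expected value is unchanged, while most shadow rays for dim samples are skipped. The sketch below relies on that assumption, with a scalar contribution and a hypothetical `trace_shadow_ray` callback.

```python
import random


def shade_light_sample(contribution, threshold, rng, trace_shadow_ray):
    """Return an unbiased estimate of one light sample's contribution.

    contribution: unoccluded contribution (a scalar here, for simplicity).
    trace_shadow_ray: callable returning True if the light is visible.
    """
    if threshold > 0.0 and contribution < threshold:
        survival = contribution / threshold
        if rng.random() >= survival:
            return 0.0                # skipped: no shadow ray is traced
        contribution /= survival      # reweight survivors to keep the mean unchanged
    return contribution if trace_shadow_ray() else 0.0


def mean_estimate(contribution, threshold, n, seed=0, visible=True):
    """Average of n estimates; converges to the contribution when the light is visible."""
    rng = random.Random(seed)
    return sum(shade_light_sample(contribution, threshold, rng, lambda: visible)
               for _ in range(n)) / n
```

With a threshold of 0, the function always traces the shadow ray, matching the default behavior described above. Raising the threshold trades a little extra variance for fewer shadow rays.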

New Plastic BRDF

appleseed now features a physically-based plastic BRDF:

(Coffee Maker scene by Blend Swap user cekuhnen, lighting and material setup by Benedikt Bitterli, converted to appleseed using our new mitsuba2appleseed.py tool.)

OSL Shader Library

We added OSL shaders that expose some of appleseed's built-in BSDFs such as the Disney BRDF and our physically-based Glass BSDF, as well as Voronoi texture nodes (2D & 3D). We're continually growing and expanding this shader library.

In addition, we now have high-quality OSL implementations of many Maya shading nodes. They are used in appleseed-maya, our soon-to-be-released integration plugin for Autodesk Maya, to translate Maya shading networks to appleseed.

Other New Features and Improvements

- The AOV subsystem has been rewritten to be more correct and to offer more features.

- Added transparent support for packed project files (`*.appleseedz`).

- Added initial support for archive assemblies.

- Texture loading is now fully based on OpenImageIO, vastly expanding our image file format support.

- Warn about invalid (NaN or negative) samples during interactive rendering.

- Adjusted default rendering settings.

- Added cube mesh primitive.

- Added support for *.mitshair files (Mitsuba's curves file format).

- Added alternative volume parameterization to glass BSDF.

- Improved RGB <-> spectra conversions.

- Improved bump mapping implementation.

- Switched to OpenImageIO 1.7.10.

- Use same defaults in Disney BRDF and Disney material.

- Improved handling of project update errors.

- Rely on the geometric normal for side determination when vertex normals are absent.

- Allow flipping the normals of an object instance with `<parameter name="flip_normals" value="true" />`.

- Use faster probe ray mode in more cases.

- Added highlight falloff controls to several BSDF models.

- Added support for `NDC` and `raster` OSL coordinate systems.

Bug Fixes

- Fixed sampling of Disney BRDF (also affects Disney material). The results are now more accurate.

- Fixed rendering of textured area lights.

- Fixed a number of cases where NaN values would be generated.

- Properly close texture files when rendering ends.

- Fixed bug in Unidirectional Path Tracer when the last bounce of a path is purely specular.

- Properly handle infinite values in environment maps.

- Fixed incorrect warning when using object alpha maps.

- Fixed incorrect alpha mapping detection when OSL materials have alpha maps.

- Fixed missing intersection filters on first renders.

- Fixed ray differentials after transparent (alpha) intersections.

- Fixed crash with OSL and nested dielectrics.

Removed Features

- Removed experimental Frozen Display interactive rendering mode.

- Removed fake translucency support from physical surface shader.

- Removed aerial perspective support from physical surface shader.

- Removed the Distributed Ray Tracing engine as it is now fully redundant with the Unidirectional Path Tracing engine configured with zero diffuse bounces.

- Removed the systematic depth AOV that was generated with every render.

appleseed.python

- Exposed creation of mesh primitives.

- Exposed environment EDF transforms.

- Exposed logger verbosity levels.

- Improved progress update in basic.py sample.

appleseed.studio

- Lock appleseed.studio down in final rendering mode.

- Fixed rare bug where aborting a render was not working.

- Fixed corner cases in camera controller.

- Don't show irrelevant inputs in entity editor.

- The scene picker now prints more information about the picking point.

- Fixed color value not updating in entity editor when cancelling color picker dialog.

- Update crop window in entity editor when setting or clearing crop window in render widget.

- Added tooltips in rendering settings dialog.

- Added File → Pack Project As... to pack a project to a single `*.appleseedz` file.

- Added regular expression-based filter for tests in the Tests dialog.

- Added keyboard shortcuts to tooltips.

Others

- Replaced `updateprojectfile` by `projecttool`, a new command line tool with more features.

- Added `mitsuba2appleseed.py` to convert Mitsuba scenes to appleseed.

1.7.0-beta-preview-1

7 years ago

This is a preview version of appleseed 1.7.0-beta.

1.6.0-beta

7 years ago

These are the release notes for appleseed 1.6.0-beta.

The following people contributed code for this release, in alphabetical order:

- Luis Barrancos

- François Beaune

- Esteban Tovagliari

Note: We are providing builds relying on the SSE 4.2 SIMD instruction set. Please contact us if your machine does not support SSE 4.2 and you would like a build relying on SSE 2 instead.

appleseed

- Fixed color shifting problems in SSS.

- Fixed rare hanging in presence of SSS materials.

- Fixed rare problems when mixing RGB colors and spectra.

- Fixed rare problems with lat-long environment maps with fully black rows.

- Fixed bug leading to absolute texture paths in projects when saving projects in place.

- Reimplemented built-in mesh primitives to have better UV parameterizations.

- Added support for multiple cameras.

- We're now providing Visual Studio 2015 builds, in addition to Visual Studio 2013 ones.

- Fixed bug when interpolated normals/tangents were null.

OSL

- Added `matrix[]` and `int[]` parameters support.

- Fixed a number of bugs in the `background()` closure.

- Fixed crash with empty string parameters.

- Improved shader compatibility with other renderers.

- Added first set of shaders matching Maya shading nodes.

- Removed mean cosine (`g`) parameter from appleseed subsurface closures.

Others

- Added missing `Object` and `MeshObject` methods to Python bindings.

1.5.1-beta

7 years ago

appleseed 1.5.1-beta is a new build of appleseed 1.5.0-beta that restores Windows 7 compatibility.

There are no other changes.